Smart glasses are wearable computers built into eyeglass frames. They include a camera, microphone, speaker and wireless connectivity. More advanced models add AI assistants and display overlays that show information directly in your field of view.

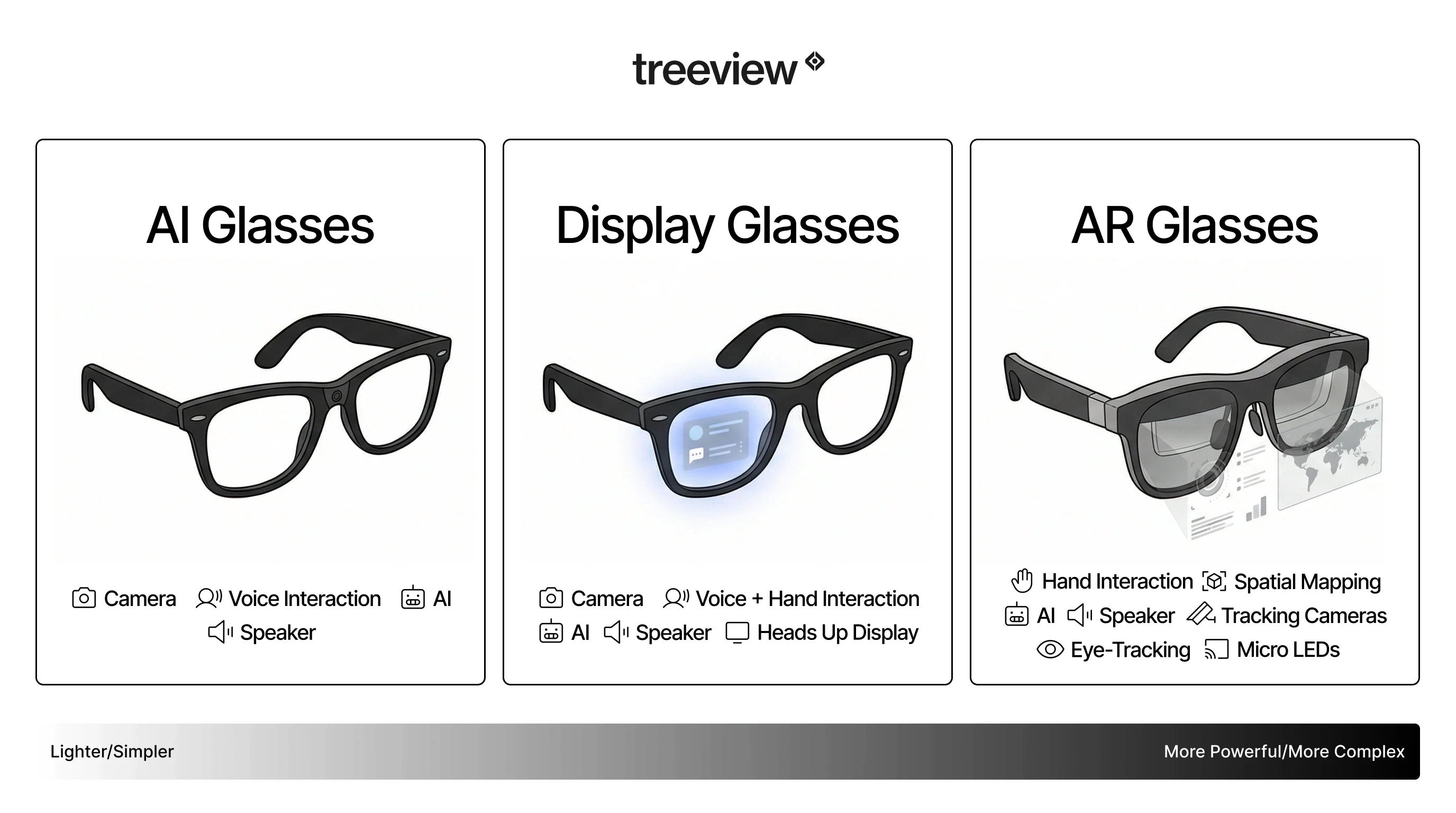

The category covers three distinct device types. AI glasses like the Ray-Ban Meta Gen 2 have no display: they work through voice and a camera-connected AI assistant. AR glasses like the XREAL One Pro add a micro-display that overlays digital content on the real world. Display glasses like the Meta Ray-Ban Display sit between the two, offering a color screen without full spatial AR.

In 2026, the leading device is the Ray-Ban Meta Gen 2 at $379. Meta holds over 90% of the smart glasses market, with over 9 million lifetime units sold.

Feel free to read along or jump to the section that interests you most:

TL;DR

What are Smart Glasses?: Wearable computers built into eyeglass frames with cameras, AI assistants and optional displays. The category splits into AI glasses (voice and camera, no screen), display glasses (color HUD overlay) and AR glasses (full spatial overlay).

Current Uses: AI queries, calls, capture, navigation and entertainment. For enterprise: remote expert assistance, guided procedural workflows, inventory management and hands-free data access, all in active production deployment on current hardware.

Key Challenges: Phone dependency for cloud AI, narrow display fields of view (most under 50 degrees), limited battery life (especially on display devices) and public privacy concerns around always-on cameras.

Opportunities: Custom enterprise applications with the Meta Wearables Device Access Toolkit, XREAL SDK and Snap Lens Studio. The Snap Spectacles consumer launch and Google's confirmed Android XR smart glasses (audio glasses fall 2026, display glasses later) will open new platforms in 2026 and 2027, with Apple devices to follow.

Where It's Going: MicroLED displays, edge cloud rendering and contextual AI are the three prerequisites for smart glasses becoming the primary computing device. Most analysts place full convergence in the 2030 to 2035 window.

What Are Smart Glasses?

Smart glasses are wearable computers presented as eyewear. They carry a camera, microphone, speaker and wireless connectivity inside a frame that looks and feels like a regular pair of glasses. More advanced models add a display that projects information directly into the wearer's line of sight, layering digital context over the physical world without replacing it.

What separates smart glasses from a Bluetooth headset or a pair of earbuds is the camera. The camera creates a shared visual context between the wearer and the AI: you can point at something and ask what it is, request a translation of a sign, identify a plant or get step-by-step instructions for the machine in front of you. That camera-plus-AI loop is the core utility of the current generation, and it is what makes smart glasses a meaningfully different product category rather than a head-worn phone accessory.

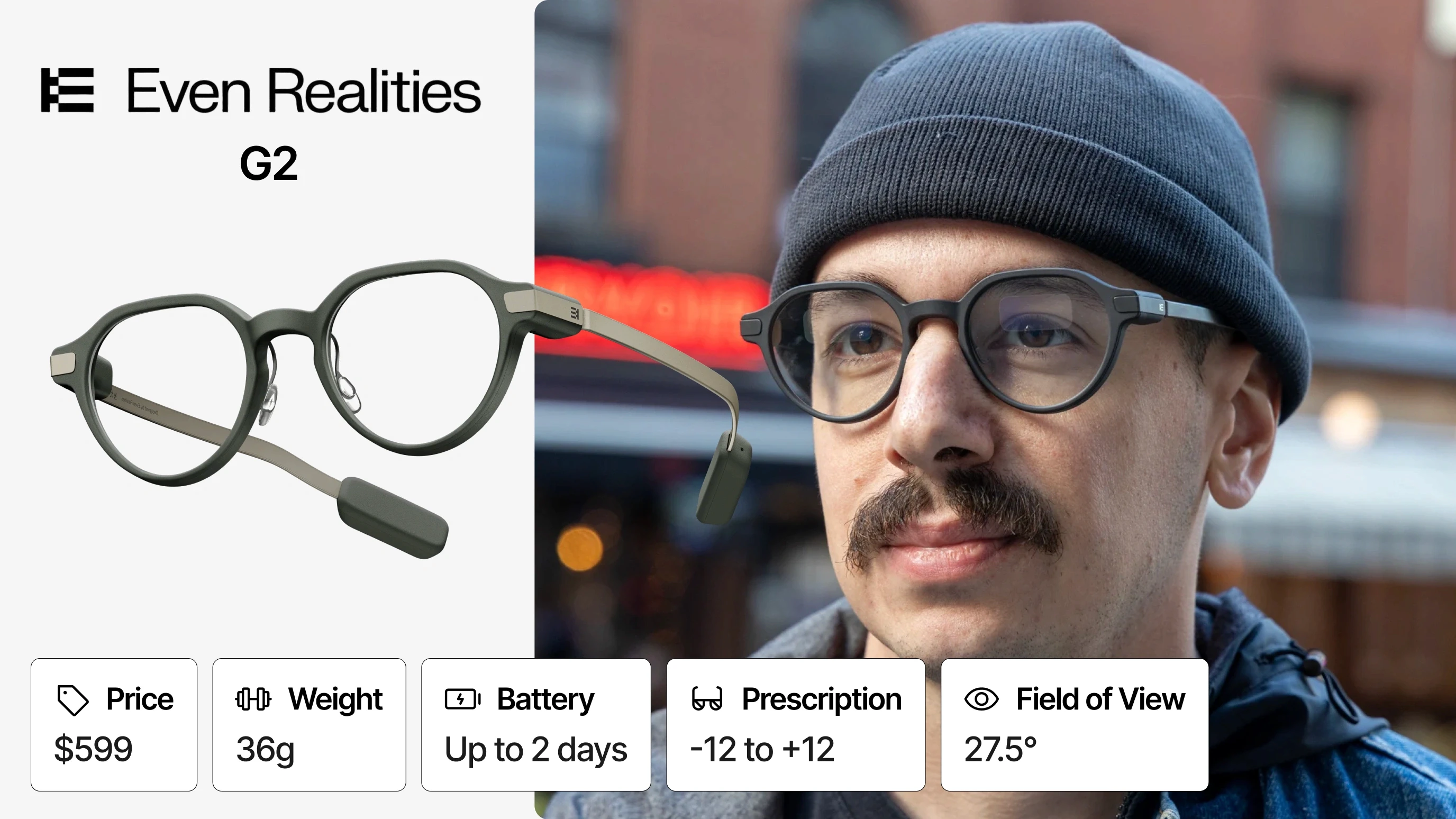

Smart glasses are also distinct from Mixed Reality headsets. Devices like the Microsoft HoloLens 2, Apple Vision Pro or Meta Quest 3 are spatial computing platforms designed for advanced Extended Reality (XR) workflows: they are powerful, heavy and not built for all-day wear. Smart glasses prioritize the opposite. The Ray-Ban Meta Gen 2 weighs 51 grams and looks like a normal pair of sunglasses. The Even Realities G2 weighs 36 grams. The design constraint that governs the entire category is: will someone actually wear this all day in public?

That constraint explains every trade-off in the current hardware. Displays are kept small to save weight and battery. AI inference runs in the cloud rather than on-device to keep the chip small. Cameras are fixed-angle rather than motorized.

The term smart glasses is often used interchangeably with AI glasses and AR glasses, but these describe different hardware configurations.

Types of Smart Glasses

The category has fragmented into three distinct hardware paradigms, each with a different interaction model, target user and development ecosystem:

AI Glasses like the Ray-Ban Meta have no screen, just a camera, open-ear speakers and a voice-activated AI assistant.

AR (Augmented Reality) Glasses like the XREAL One Pro add a micro-display that overlays digital information on the real world.

Display Glasses like the Meta Ray-Ban Display sit between the two, offering a color screen for notifications and AI responses without full spatial AR.

The further along the spectrum you go, the heavier, pricier and more powerful the device gets.

AI Glasses (Camera + Voice, No Display)

The current mass-market standard. These frames look identical to regular glasses and carry a camera, microphone, open-ear speakers and an on-device or cloud-connected AI assistant. Interaction is voice-first.

Primary use cases: photo and video capture, voice calls, real-time AI queries, navigation audio and music playback.

Representative devices: Ray-Ban Meta Gen 2, Ray-Ban Meta (Gen 1), Rokid AI Glasses Style, Xiaomi AI Glasses, HTC VIVE Eagle.

Display Glasses (Color HUD, Partial Screen)

A newer middle category sitting between AI glasses and full AR. These frames add a small color display, visible only to the wearer, for notifications, AI responses, navigation and messaging, without committing to full spatial AR. The display disappears when not in use.

Primary use cases: hands-free messaging, turn-by-turn navigation, captions, AI visual responses, media controls.

Representative devices: Meta Ray-Ban Display, Brilliant Labs Halo, Everysight Maverick Gen 2, Mira.

AR Glasses (Display-Enabled, Overlay)

These frames include a micro-display (waveguide, micro-LED or micro-OLED) that projects digital information into the wearer's line of sight. The physical world remains fully visible, with text, graphics or interface elements overlaid on top.

The display is what separates AR glasses from AI glasses and brings them closer in capability to enterprise AR headsets, though in a dramatically lighter form factor. Current generation AR glasses typically weigh between 30g and 80g, compared to 400g to 600g for headsets.

Representative devices: Even Realities G2, Even Realities G1, Rokid Glasses, Halliday Glasses, INMO GO 3, Lenovo Vision AI Glasses V1.

AR Display Glasses (Tethered Virtual Screen)

A separate and growing subcategory designed primarily for portable cinema, gaming and productivity. These connect via USB-C to a phone, laptop or gaming console and project a large virtual screen, typically 100 to 300 inches equivalent, rather than overlaying information on the real world. Most have no battery of their own.

Primary use cases: portable private display for gaming, travel entertainment, remote work and multi-monitor productivity.

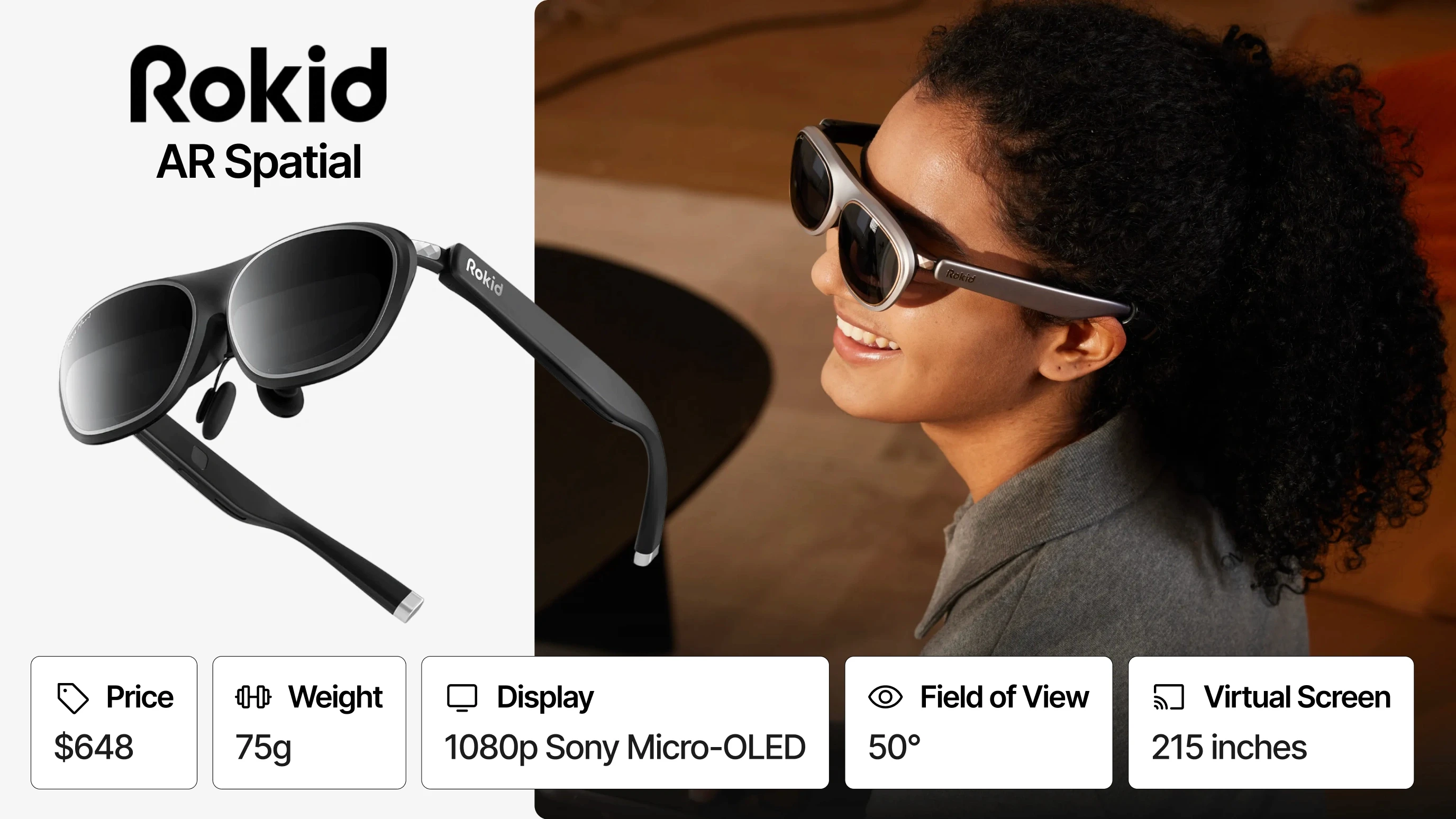

Representative devices: XREAL One Pro, XREAL One, RayNeo Air 4 Pro, VITURE Luma Ultra, Rokid AR Spatial, RayNeo Air 3S Pro, Lenovo Legion Glasses Gen 2, ASUS ROG XREAL R1.

Smart Sunglasses and Lifestyle Frames

Consumer devices that add audio, basic sensors and connectivity to fashion-forward frames. Functionality is limited relative to AI or AR glasses, but they serve the largest addressable market. Oakley Meta HSTN sits here for sport use; Bose Frames and Reebok Glasses by Lucyd serve a similar audio-first lifestyle segment.

Smart Glasses vs. AR Glasses vs. AI Glasses: Quick Reference

| Category | Display | Primary Input | Primary Use Case | Example Devices |
|---|---|---|---|---|
| AI Glasses | None | Voice | Capture, calls, AI queries | Ray-Ban Meta Gen 2, Oakley Meta HSTN, Rokid AI Glasses Style, Xiaomi AI Glasses, HTC VIVE Eagle |
| Display Glasses | Small color HUD | Voice + gesture | Notifications, AI responses, navigation | Meta Ray-Ban Display, Brilliant Labs Halo, Everysight Maverick Gen 2 |
| AR Glasses | Waveguide or micro-LED | Voice + touch + ring | Navigation, captions, live translation, teleprompter | Even Realities G2, Rokid Glasses, Halliday Glasses, INMO GO 3, Vuzix Z100 |
| AR Display Glasses | Micro-OLED virtual screen | USB-C tethered | Portable cinema, gaming, remote work | XREAL One Pro, RayNeo Air 4 Pro, VITURE Luma Ultra, Rokid AR Spatial |
| Smart Sunglasses | None | Touch or voice | Audio, notifications, lifestyle | Bose Frames, Reebok Glasses by Lucyd, Xiaomi Mijia 2 |
| Full AR Glasses | Binocular waveguide | Hands + voice + gesture | Spatial AR, app development, enterprise | Snap Spectacles 5th Gen, RayNeo X3 Pro, XREAL x Google Project Aura |
| Mixed Reality Headsets | Full FOV display | Hands + gaze + voice | Enterprise spatial computing | Microsoft HoloLens 2, Apple Vision Pro, Meta Quest 3, Pico XR, Samsung Galaxy XR, Varjo XR-4 |

The History of Smart Glasses

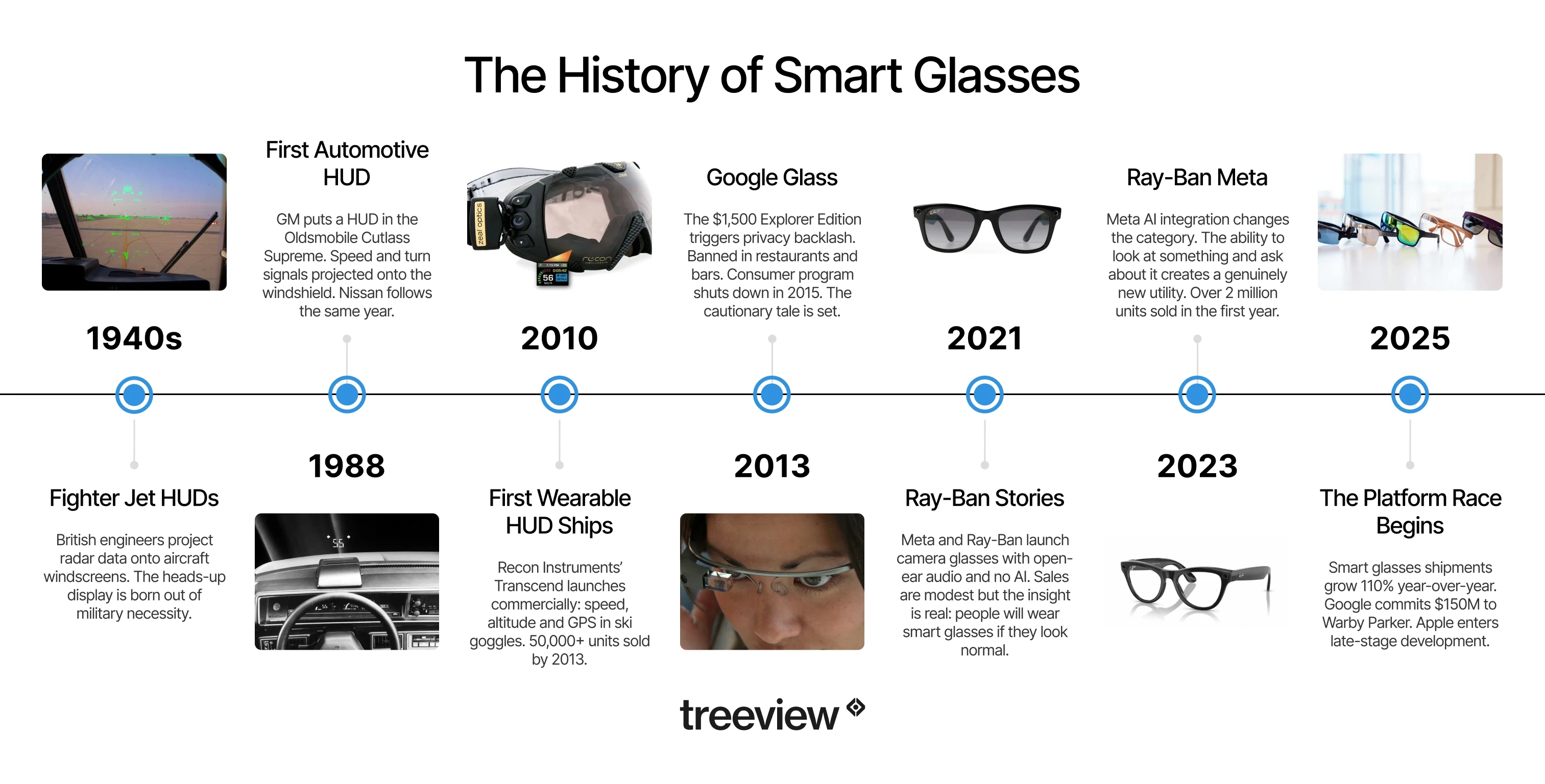

Smart glasses did not start with the Ray-Ban Meta. The category has a longer lineage, and understanding where the technology came from helps explain why the current generation succeeded where earlier attempts did not.

1940 to 1999: Where the HUD Came From

The heads-up display did not start with glasses. It started with fighter jets. British engineers were already projecting radar information onto aircraft windscreens in the 1940s, allowing pilots to keep their eyes on the sky rather than their instruments. The core insight (critical information in your line of sight, without breaking your focus) has been the same ever since.

In 1988, GM brought that idea to cars. The first production automotive HUD appeared in the Oldsmobile Cutlass Supreme, projecting speed and turn signals onto the windshield. Nissan followed the same year. The automotive HUD is now standard across most premium vehicles. The relevance to smart glasses is direct: every waveguide, every micro-display, every design decision around keeping information in your line of sight without breaking focus is working from the same principle engineers figured out for cockpits and cars decades earlier.

2010: Recon Instruments and the First Wearable HUD

Recon Instruments was founded in 2008 by four students at the University of British Columbia. The original idea was a HUD for swimmers showing lap time and stroke count, but an existing patent blocked that path and the team pivoted to ski goggles instead. Their first commercial product, the Transcend, launched in October 2010. It displayed speed, altitude, GPS maps and temperature through a small screen positioned at the bottom corner of the goggle lens. It was not a true see-through display, but it was the first wearable HUD to reach a consumer market in meaningful volume.

By 2013, Recon had shipped over 50,000 units. Google co-founder Sergey Brin reportedly visited their CES booth around this time, shortly before Google began hiring engineers for Glass. Intel acquired Recon in 2015 and shut the division down in 2017.

2013: Google Glass

Google Glass launched in 2013 and became the defining cautionary tale in consumer wearables. The Explorer Edition cost $1,500, relied on Wi-Fi or a tethered phone for connectivity, and featured a small prism display visible to the wearer and a forward-facing camera that triggered privacy concerns. Restaurants and bars banned it. The consumer program shut down in 2015. Google pivoted Glass to enterprise, where it found limited but real deployment in manufacturing and logistics. The story of Glass is a useful case study in why hardware timing, social acceptance and developer ecosystem matter as much as technical capability.

2016: Microsoft HoloLens and the Enterprise Era

Microsoft HoloLens launched in 2016 and established that spatial computing was a credible enterprise tool. It was not smart glasses in the consumer sense, but it proved that a wearable computer with spatial awareness could deliver measurable business value.

2021: Ray-Ban Stories (First Generation)

Meta partnered with Ray-Ban to launch Ray-Ban Stories: camera-equipped frames with open-ear audio, no AI and no display. Sales were modest, but the product proved that people would wear camera glasses that looked like normal eyewear. The fashion form factor was the unlock.

2023: Ray-Ban Meta and the AI Inflection

The second generation, Ray-Ban Meta, launched in October 2023 with Meta AI integration. This was the moment the category changed. The addition of a multimodal AI model, specifically the ability to look at something and ask the glasses about it, created a genuinely new utility. Meta sold over 2 million units in the following year and 9 million in total over three years.

2025 to 2026: The Platform Race

Google committed $150 million to a Warby Parker partnership for AI smart glasses. Apple's smart glasses entered late-stage development. The smart glasses category grew 110% year-over-year in the first half of 2025. The platform race is now underway.

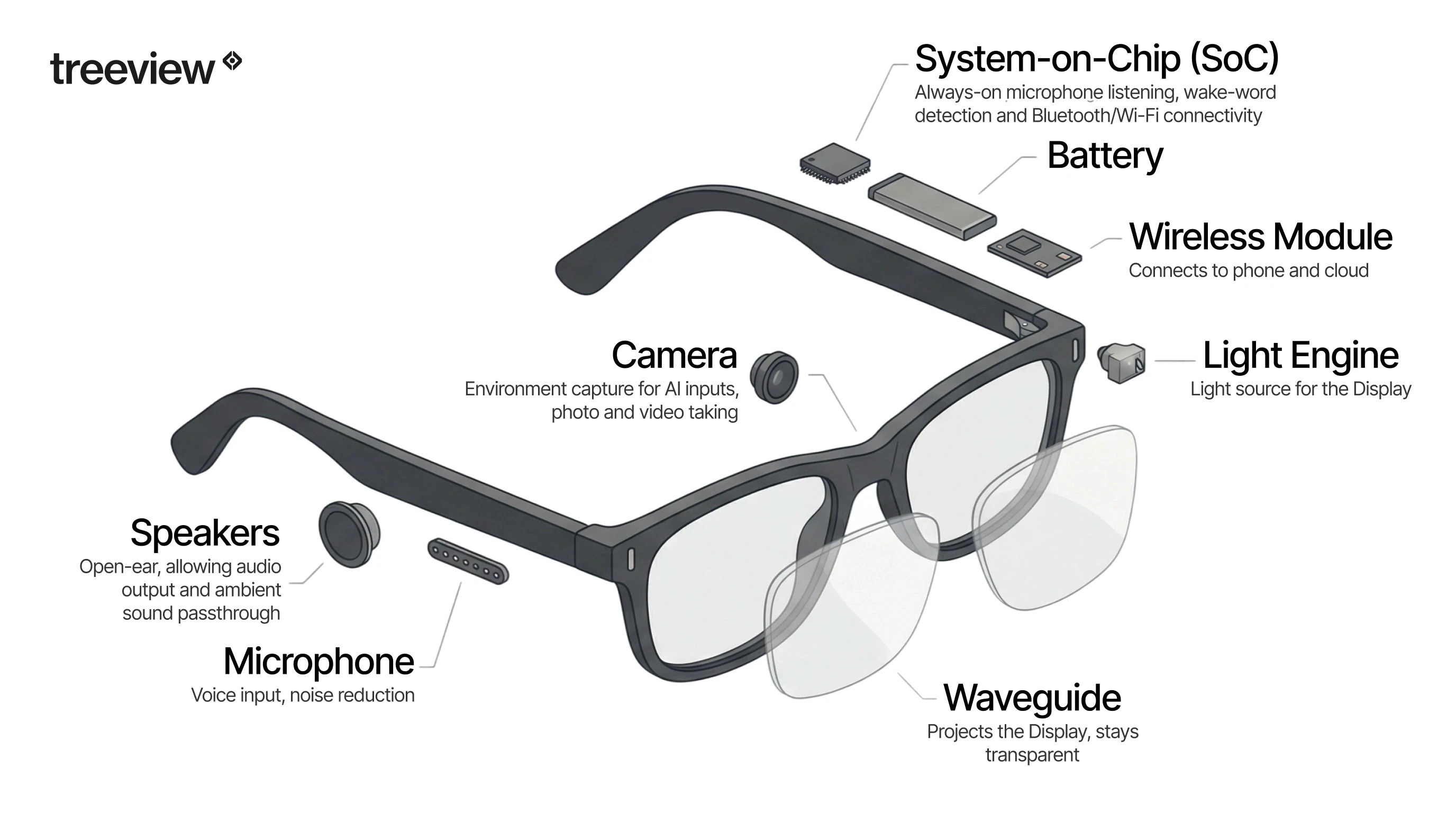

How Do Smart Glasses Work?

The hardware stack in a modern smart glasses device is more complex than the slim frame suggests. Understanding the architecture matters for evaluating which applications are feasible on which platforms.

System-on-Chip (SoC)

Smart glasses run a dedicated system-on-chip. The Ray-Ban Meta uses a Snapdragon AR1 Gen 1, which handles always-on microphone listening, wake-word detection, camera capture and Bluetooth/Wi-Fi connectivity. The chip is optimized for low power draw, which is the primary constraint in a device with a battery measured in milliamp-hours rather than watt-hours.

AR glasses require a more powerful SoC to handle display rendering alongside the sensor and connectivity workload. XREAL uses a combination of on-glasses processing and offloaded compute from a paired phone or PC via USB-C.

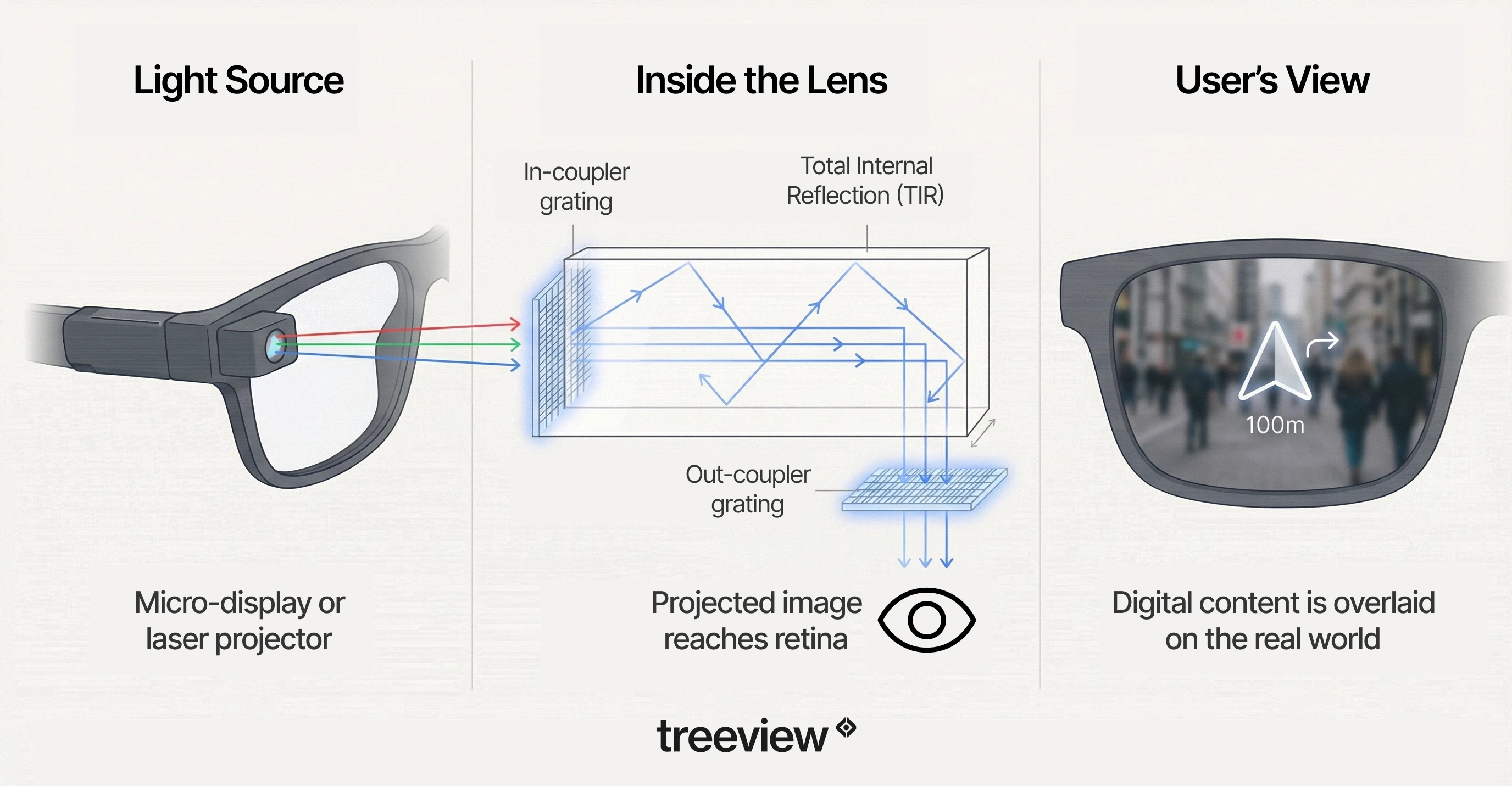

Optics and Waveguide Technology

AI glasses with no display have no optical complexity beyond standard prescription lenses. AR glasses require a light engine (micro-LED or micro-OLED projector) and a waveguide: a thin piece of glass engineered to bounce projected light to the eye while remaining transparent to the real world.

Waveguide technology is the primary engineering constraint limiting field of view (FoV) and outdoor visibility in current AR glasses. Most consumer waveguide displays achieve a FoV of 30 to 50 degrees, compared to human peripheral vision of approximately 200 degrees. The shift from micro-OLED to MicroLED is the next major display transition: MicroLED can achieve over 10,000 nits of brightness, which is required to compete with direct sunlight. Current micro-OLED displays top out around 1,000 to 3,000 nits.
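The link between a display's field of view and the "virtual screen" sizes quoted for tethered display glasses (100 to 300 inches equivalent) is plain trigonometry. The sketch below converts a diagonal FoV into the diagonal of a flat virtual screen perceived at a given distance, and back. The viewing distances are illustrative assumptions, not manufacturer specifications; vendors quote screen sizes at different assumed distances.

```python
import math

METERS_PER_INCH = 0.0254

def virtual_screen_diagonal_inches(diagonal_fov_deg: float, distance_m: float) -> float:
    """Diagonal of a flat screen, centered on the gaze, that exactly fills a
    diagonal FoV when perceived at distance_m (simple pinhole geometry)."""
    half_angle = math.radians(diagonal_fov_deg) / 2
    diagonal_m = 2 * distance_m * math.tan(half_angle)
    return diagonal_m / METERS_PER_INCH

def fov_for_screen_deg(diagonal_inches: float, distance_m: float) -> float:
    """Inverse: diagonal FoV (degrees) needed to show a screen of this size at distance_m."""
    half_diag_m = diagonal_inches * METERS_PER_INCH / 2
    return math.degrees(2 * math.atan(half_diag_m / distance_m))

if __name__ == "__main__":
    # Assumed perceived distances; the same 57-degree FoV maps to very different "inch" claims.
    for distance in (3.0, 4.0, 6.0):
        size = virtual_screen_diagonal_inches(57, distance)
        print(f"57 deg FoV at {distance:.0f} m ~ {size:.0f}-inch screen")
```

The takeaway is that the "inches" figure is only meaningful alongside a viewing distance, while FoV in degrees is the distance-independent number to compare.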

Multimodal AI Processing

The defining capability of the current generation is multimodal AI: the ability to process what the camera sees and respond via voice in real time. On Ray-Ban Meta, this is Meta AI, built on Llama. The user asks "What is this plant?" or "Read me that sign" and the glasses answer through the open-ear speakers.

This architecture creates a shared visual context between the user and the AI model. It is what differentiates smart glasses categorically from Bluetooth earbuds: the camera is not a passive sensor but an active input to the AI system.

AI inference runs in the cloud via a paired smartphone connection. On-device inference for lightweight tasks (wake word detection, basic commands) runs locally on the SoC. The split between local and cloud processing is a key architectural decision in custom smart glasses application development.
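The local/cloud split can be expressed as a small routing policy. The sketch below is hypothetical: the intent names, the `Route` enum and the latency budgets are illustrative assumptions, not part of any vendor SDK. It keeps lightweight intents on the glasses, sends everything else to the cloud through the phone when a link exists, and falls back explicitly when the link is missing or too slow.

```python
from dataclasses import dataclass
from enum import Enum

class Route(Enum):
    ON_DEVICE = "on_device"  # runs on the glasses' SoC
    CLOUD = "cloud"          # relayed through the paired phone
    DEFER = "defer"          # cannot be served now; tell the user or queue it

# Hypothetical set of intents small enough for on-device handling.
LOCAL_INTENTS = {"wake_word", "volume", "take_photo", "start_video"}

@dataclass
class Request:
    intent: str
    latency_budget_ms: int = 2000  # assumed budget for a conversational answer

def route(req: Request, phone_connected: bool, cloud_rtt_ms: float) -> Route:
    """Decide where a request should run (illustrative policy only)."""
    if req.intent in LOCAL_INTENTS:
        return Route.ON_DEVICE
    if not phone_connected:
        # No path to cloud inference: fall back explicitly rather than fail silently.
        return Route.DEFER
    if cloud_rtt_ms > req.latency_budget_ms:
        # The network round trip alone would exceed the interaction's budget.
        return Route.DEFER
    return Route.CLOUD
```

With a 100 ms budget, any cloud round trip over 100 ms forces a deferral, which is why sub-100 ms AI responses generally push inference on-device.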

Audio Architecture

Open-ear speakers are standard across current smart glasses. They deliver audio output while allowing ambient sound to pass through. At moderate volume, open-ear audio is audible to people nearby at approximately 1 to 2 meters, which has privacy implications for enterprise and fieldwork deployments.

Directional audio beamforming microphones handle voice input. Current generation devices perform well in moderate ambient noise but degrade in high-noise industrial environments. This is a practical constraint for factory floor or construction site deployments.

Connectivity and Phone Dependency

All current smart glasses require a paired smartphone for cloud AI processing, app management and cellular data. The glasses themselves connect via Bluetooth and, for some features, Wi-Fi. The phone dependency is a key engineering consideration for custom application development: the application must account for connectivity interruptions and define fallback behavior.
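One common way to define fallback behavior for a dropped phone link is a bounded store-and-forward queue: events captured while disconnected are buffered on the glasses and flushed in order when the link returns. The class below is a hypothetical sketch; `send` stands in for whatever transport a real SDK provides, and the capacity limit is an assumed value meant to respect the glasses' limited storage.

```python
from collections import deque
from typing import Callable, Deque, List

class StoreAndForward:
    """Buffers outbound events while the phone link is down (illustrative sketch)."""

    def __init__(self, send: Callable[[str], bool], capacity: int = 100):
        self._send = send  # returns True if the event was delivered
        # When full, deque(maxlen=...) silently discards the oldest event.
        self._queue: Deque[str] = deque(maxlen=capacity)
        self.connected = False

    def submit(self, event: str) -> None:
        # Preserve ordering: only send directly when nothing older is waiting.
        if self.connected and not self._queue:
            if self._send(event):
                return
            self.connected = False  # delivery failed; treat the link as down
        self._queue.append(event)

    def on_disconnect(self) -> None:
        self.connected = False

    def on_reconnect(self) -> int:
        """Flush buffered events in order, stopping at the first failure.
        Returns the number of events delivered."""
        self.connected = True
        sent = 0
        while self._queue:
            if not self._send(self._queue[0]):
                self.connected = False
                break
            self._queue.popleft()
            sent += 1
        return sent

    def pending(self) -> List[str]:
        return list(self._queue)
```

Dropping the oldest events when the buffer fills is one design choice among several; an inspection app might instead refuse new captures so no record is lost.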

Standalone 5G integration is on roadmaps. Edge cloud rendering over 5G, with sub-20ms motion-to-photon latency, is the infrastructure path that would allow glasses to operate independently at full AI capability. That infrastructure is not yet broadly deployed.
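Adding up a budget shows why sub-20ms motion-to-photon latency is demanding for edge rendering. Every stage value below is an assumed, illustrative number, not a measurement. The point is that the network hops must fit alongside tracking, rendering, video encode/decode and display scan-out.

```python
# Illustrative motion-to-photon budget for edge-rendered glasses.
# All stage timings are assumptions chosen for the arithmetic, not measured values.
BUDGET_MS = 20.0

stages_ms = {
    "imu_sampling_and_tracking": 2.0,
    "uplink_pose_to_edge": 4.0,
    "edge_render": 5.0,
    "video_encode": 2.0,
    "downlink_frame": 4.0,
    "decode_and_display_scanout": 4.0,
}

def total_latency_ms(stages: dict) -> float:
    return sum(stages.values())

def network_headroom_ms(stages: dict, budget: float = BUDGET_MS) -> float:
    """Budget left for uplink + downlink after all non-network stages."""
    non_network = sum(v for k, v in stages.items()
                      if not k.startswith(("uplink", "downlink")))
    return budget - non_network

if __name__ == "__main__":
    total = total_latency_ms(stages_ms)
    verdict = "OK" if total <= BUDGET_MS else "over budget"
    print(f"total {total:.1f} ms vs {BUDGET_MS:.0f} ms budget: {verdict}")
    print(f"round-trip network allowance: {network_headroom_ms(stages_ms):.1f} ms")
```

Under these assumptions the pipeline lands just over budget and leaves only 7 ms for the whole network round trip, which illustrates why broad edge deployment is a prerequisite.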

Battery Life

Battery life in the Ray-Ban Meta is approximately 4 hours of active use with the camera and AI running, or up to 8 hours for audio-only use. The charging case provides additional charges. For enterprise deployments requiring all-day use, battery management and charging infrastructure are practical considerations that need to be addressed at the deployment level.
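Simple arithmetic turns rated runtimes into an average power budget. The 200 mAh capacity and 3.85 V nominal voltage below are hypothetical placeholders, since the text does not state the cell size. Substitute a datasheet value when one is available.

```python
def battery_energy_wh(capacity_mah: float, nominal_voltage_v: float = 3.85) -> float:
    """Energy stored in the cell: mAh x V / 1000 (3.85 V is an assumed nominal voltage)."""
    return capacity_mah * nominal_voltage_v / 1000

def average_power_mw(energy_wh: float, runtime_h: float) -> float:
    """Average draw implied by a rated runtime."""
    return energy_wh / runtime_h * 1000

def runtime_h(energy_wh: float, avg_power_mw: float) -> float:
    """Runtime achievable at a given average draw."""
    return energy_wh / (avg_power_mw / 1000)

if __name__ == "__main__":
    energy = battery_energy_wh(200)  # hypothetical 200 mAh cell, about 0.77 Wh
    print(f"{energy:.2f} Wh stored")
    print(f"camera + AI for 4 h: ~{average_power_mw(energy, 4):.0f} mW average")
    print(f"audio only for 8 h: ~{average_power_mw(energy, 8):.0f} mW average")
```

The same relationship explains the 4-hour vs 8-hour split. At a fixed capacity, doubling runtime requires halving the average draw, which is what switching off the camera and AI pipeline achieves.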

For developers: The phone dependency and the split between on-device and cloud inference are the two architectural constraints that most shape custom smart glasses app development. Applications that require offline operation or sub-100ms AI response times need an on-device inference architecture, which today means significant engineering work beyond the standard platform SDK.

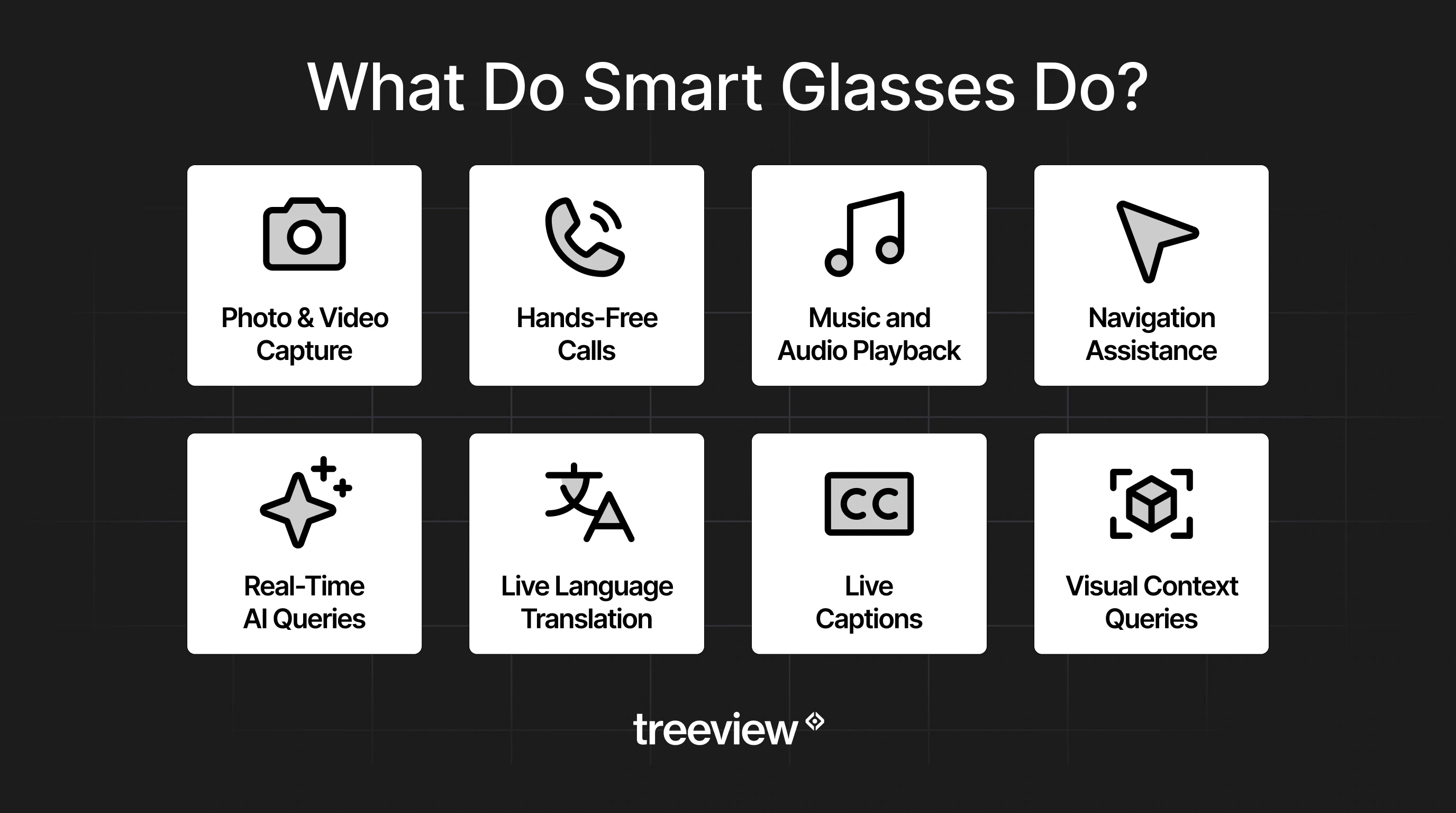

What Do Smart Glasses Do?

Practical capabilities depend on which device and which software are running: the out-of-the-box experience and the experience delivered by custom applications are substantially different.

Out-of-the-Box Capabilities

Real-time visual AI: point the camera at anything (a menu, a landmark, a product, a person's business card) and ask the glasses about it out loud. The answer comes back through the speakers in seconds.

Live translation in both directions across multiple languages, without a phone in hand or an app open.

Hands-free photo and video capture triggered by voice, keeping you present in the moment.

Shazam-style music identification, plus playback from Spotify, Amazon Music and other streaming services directly through the open-ear speakers.

Turn-by-turn navigation audio while walking or cycling, with your hands completely free and your eyes on the road.

Real-time sports scores, stock prices, weather and general knowledge queries answered instantly.

Phone calls, WhatsApp messages and notifications read aloud and replied to by voice.

Live captions displayed in the lens (on display-equipped models) for conversations happening in front of you, including across language barriers.

Enterprise and Industry Applications

Custom-developed smart glasses applications extend significantly beyond consumer features. The enterprise use cases in active deployment or development as of 2026:

Remote Expert Assistance

A field technician streams live video from the glasses camera to a remote specialist. The specialist sees exactly what the technician sees and can annotate the view (on AR glasses) or give voice instructions. This reduces the need for expensive on-site expert visits and is particularly valuable in energy, manufacturing and medical device servicing.

Research shows that AR-assisted remote collaboration reduces task completion time by up to 30% and significantly lowers error rates in complex maintenance scenarios.

Guided Procedural Workflows

Step-by-step instructions are overlaid on machinery or equipment and advance when the current step is completed rather than on a timer. On AR glasses with a display, visual overlays mark the exact component to interact with. On AI glasses, voice-guided instructions adapt in real time as camera input confirms step completion.
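Completion-driven progression maps naturally onto a small state machine, where a step advances only when completion is confirmed and never because a timer expired. The sketch below is hypothetical. Observations are assumed to be labels produced by a camera-based check, or by a voice confirmation mapped to the same label, and none of the names correspond to a specific SDK.

```python
from dataclasses import dataclass, field
from typing import List, Optional

@dataclass
class Step:
    instruction: str     # what the wearer hears or sees
    expected_state: str  # label a vision check must report to confirm completion

@dataclass
class Procedure:
    steps: List[Step]
    index: int = 0
    log: List[str] = field(default_factory=list)

    @property
    def current(self) -> Optional[Step]:
        return self.steps[self.index] if self.index < len(self.steps) else None

    def on_observation(self, observed_state: str) -> Optional[str]:
        """Advance only when the observed state matches the current step's target.
        Returns the instruction to present next, or None when the procedure is done."""
        step = self.current
        if step is None:
            return None
        if observed_state != step.expected_state:
            # Not confirmed yet: repeat the current instruction instead of moving on.
            return step.instruction
        self.log.append(f"completed: {step.instruction}")
        self.index += 1
        nxt = self.current
        return nxt.instruction if nxt is not None else None
```

The completion log doubles as an audit trail, which is often as valuable to the deploying organization as the guidance itself.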

Boeing has documented assembly error reductions of up to 25% using AR-guided procedures. The smart glasses form factor makes this approach viable in environments where a tablet or monitor would be impractical.

Inventory and Quality Control

Barcode and QR code scanning via the glasses camera, with voice confirmation and hands-free data entry into warehouse management systems. Camera-based quality inspection, with AI flagging defects. These applications work on current hardware and are in active deployment.
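A cheap safeguard before a scanned code reaches a warehouse management system is to reject misreads on the glasses. For EAN-13 barcodes, the GS1 check digit does this: the first 12 digits are weighted alternately 1 and 3 from the left, and the check digit brings the weighted sum up to a multiple of 10 (a 12-digit UPC-A code can be checked the same way with a leading zero). The `to_wms_record` helper and its field names are hypothetical; a real WMS integration defines its own schema.

```python
from datetime import datetime, timezone

def ean13_is_valid(code: str) -> bool:
    """GS1 check-digit test for a 13-digit EAN (pass UPC-A with a leading '0')."""
    if len(code) != 13 or not all(c in "0123456789" for c in code):
        return False
    digits = [int(c) for c in code]
    # Weights alternate 1, 3, 1, 3, ... starting from the leftmost digit.
    weighted = sum(d * (3 if i % 2 else 1) for i, d in enumerate(digits[:12]))
    return (10 - weighted % 10) % 10 == digits[12]

def to_wms_record(code: str, location: str, qty: int) -> dict:
    """Hypothetical record shape for a hands-free inventory update."""
    if not ean13_is_valid(code):
        raise ValueError(f"rejecting likely misread: {code}")
    return {
        "gtin": code,
        "location": location,
        "quantity": qty,
        "scanned_at": datetime.now(timezone.utc).isoformat(),
    }
```

Validating locally means the wearer gets an immediate voice prompt to rescan, instead of discovering a bad entry later in the WMS.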

Medical and Clinical Support

Surgeons and medical staff accessing patient data, imaging or procedural checklists hands-free during procedures. Nurses using voice-activated documentation while maintaining patient contact. These use cases are under active development and face additional regulatory requirements around HIPAA compliance and data handling.

Training and Skills Assessment

Real-time performance feedback during physical skill training: a trainee performs a procedure while the AI observes via the camera and gives feedback on technique. XR training research has reported 4x faster skill acquisition compared to classroom training and 4x higher focus compared to e-learning. Smart glasses bring this capability to environments where a headset is not practical.

Venue and Guest Experience

One of the earliest public demonstrations of the Meta Wearables Device Access Toolkit showed a guest at a large entertainment venue using AI glasses as a personal guide. The prototype responded to questions about attractions in view, surfaced nearby food options matching dietary requirements, helped locate merchandise spotted on other guests and confirmed whether specific experiences were suitable for young children. The glasses also proactively surfaced relevant information without being asked, alerting the guest to short wait times nearby and offering directions.

The use case extends well beyond entertainment venues. Museums, stadiums, airports, hotel properties and retail environments are all viable deployment contexts for the same architecture: a venue-specific AI model connected to the glasses camera, providing contextual information, navigation and recommendations based on exactly what the guest is looking at in real time. The guest never touches their phone. The venue delivers a more informed, more personal experience at scale.

The Best Smart Glasses in 2026

The smart glasses market is consolidating around a small number of platforms even as the range of devices grows. Meta dominates AI glasses, with the Ray-Ban and Oakley lines accounting for 97% of sales, but strong challengers are emerging across display glasses, AR glasses and tethered virtual screen devices. Visit our Best Smart Glasses Companies guide for a comprehensive ranking of hardware, gaming, industry solutions, development platforms and development firms.

Below is an honest assessment of the 10 leading devices available or announced as of April 2026. For a more detailed overview, visit our Best Smart Glasses guide.

Device | Type | Price | Weight | Display | FoV |

|---|---|---|---|---|---|

Ray-Ban Meta Gen 2 | AI Glasses | $379 | 51-53g | None | - |

Meta Ray-Ban Display | Display AI Glasses | $799 | 69-70g | 600x600 full-color, 90Hz | 20° |

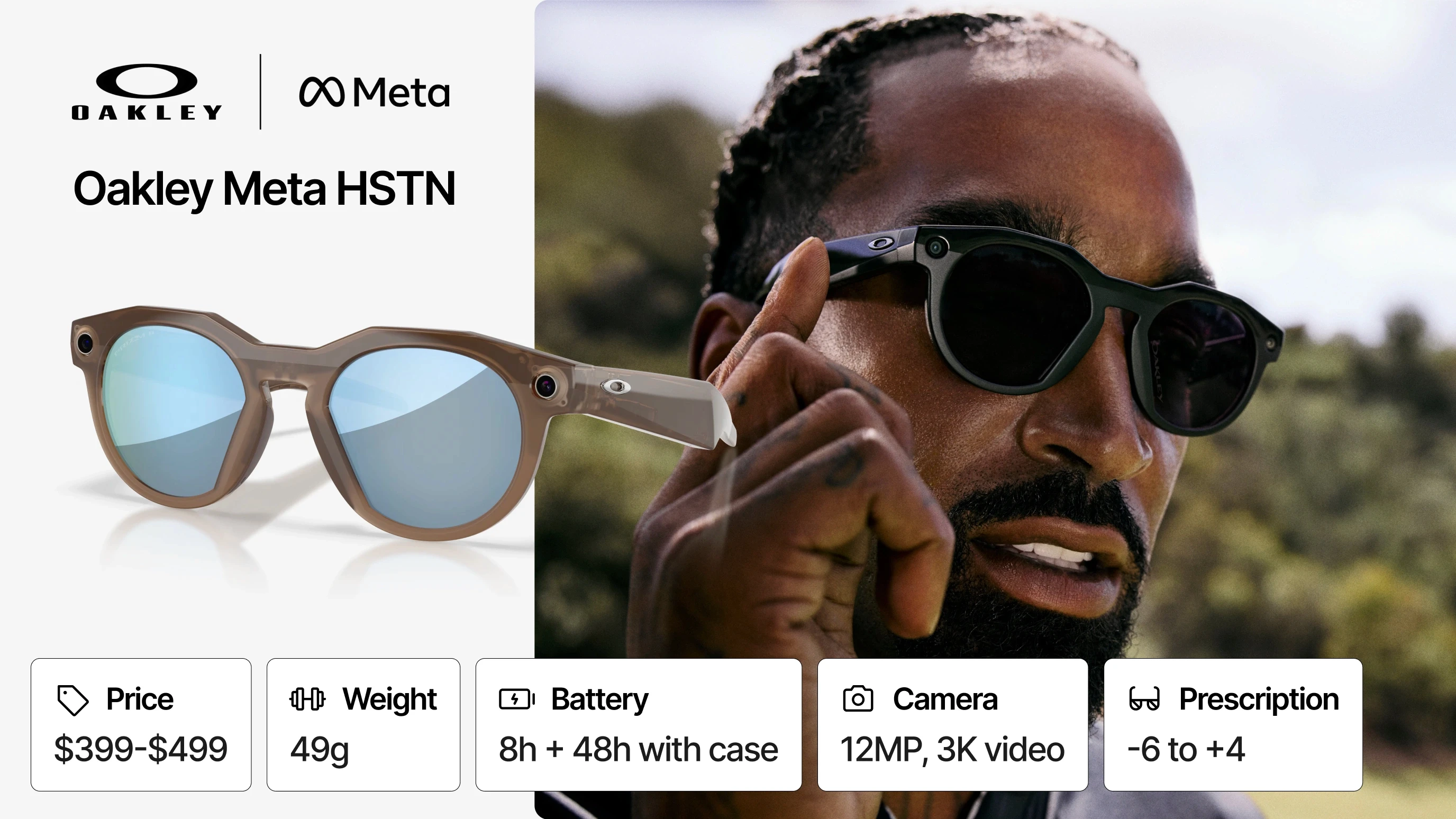

Oakley Meta HSTN | AI Glasses (Sport) | $399-$499 | 49g | None | - |

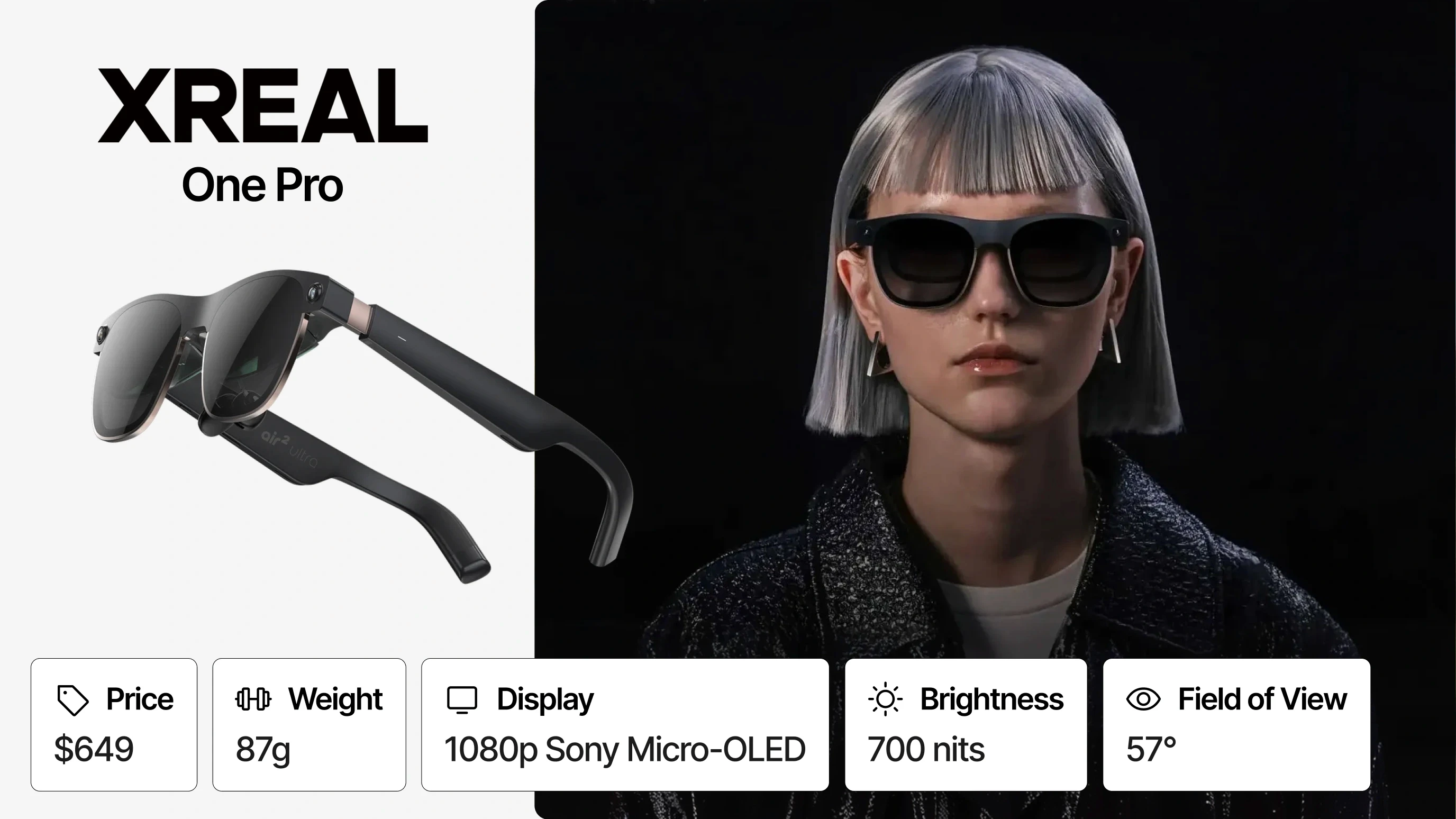

XREAL One Pro | AR Display Glasses | $649 | 87g | 1080p Sony Micro-OLED, 120Hz | 57° |

RayNeo Air 4 Pro | AR Display Glasses | $299 | 76g | 1080p Micro-OLED, 120Hz, HDR10 | 47° |

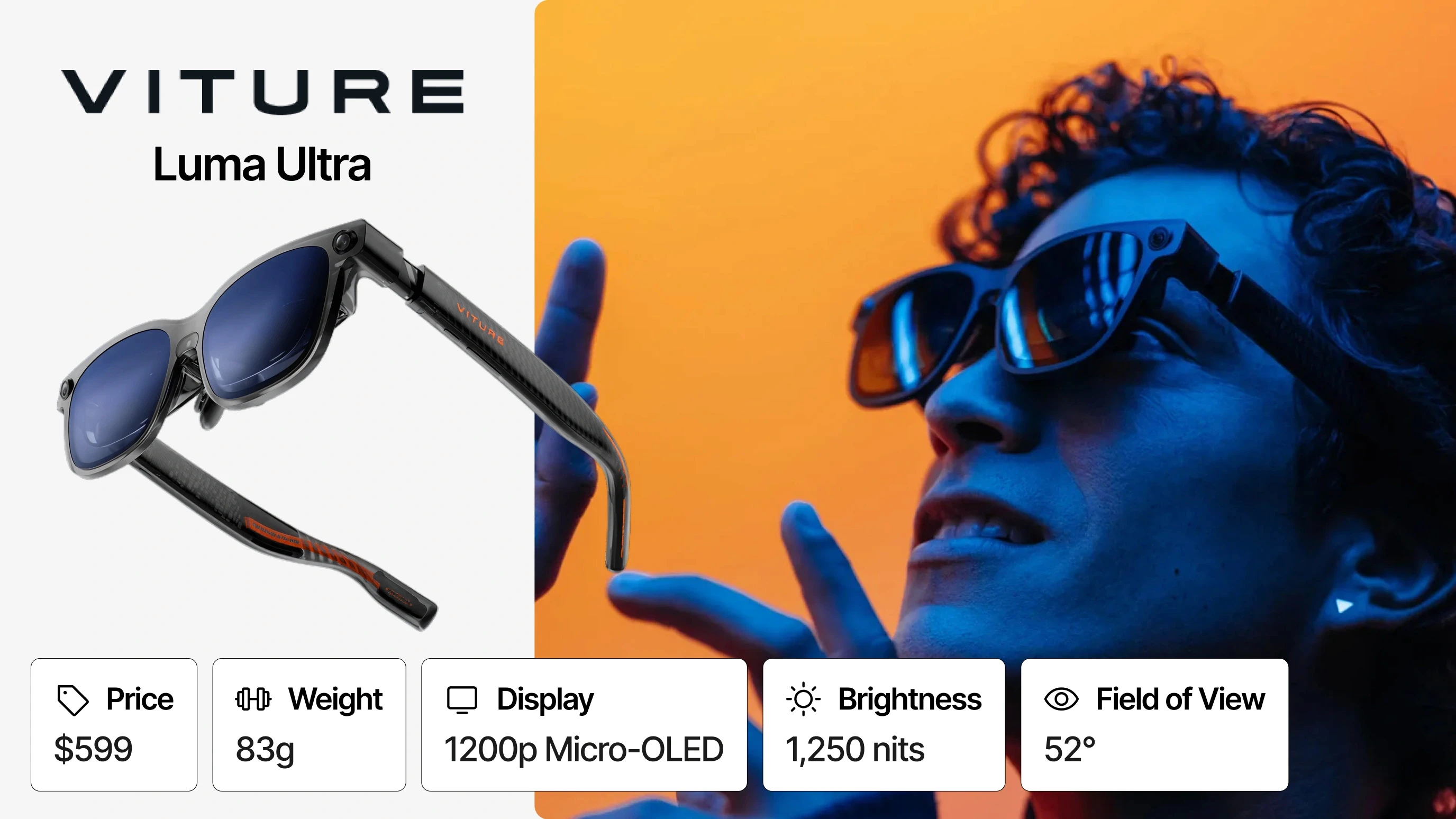

VITURE Luma Ultra | AR Display Glasses | $599 | 83g | 1200p Micro-OLED, 120Hz | 52° |

Brilliant Labs Halo | AI + HUD Glasses | $299 | 40g | 0.2" Micro-LED full-color | 20° |

Even Realities G2 | AR Glasses (Minimal) | $599 | 36g | 640x350 Micro-LED green-only, 60Hz | 27.5° |

Snap Spectacles 5th Gen | Full AR (Dev only) | $99/mo | 226g | Waveguide dual LCoS, ~37 PPD | 46° |

Rokid AR Spatial | AR Display Glasses | $648 (bundle) | 75g | 1080p Sony Micro-OLED, 90Hz | 50° |
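One rough way to compare the display rows above is angular resolution in pixels per degree (PPD), the metric quoted for the Spectacles. Dividing the diagonal pixel count by the diagonal FoV gives a first-order estimate. It assumes pixels are spread evenly across the field, ignores optical distortion, and treats each listed FoV as a diagonal figure (an assumption). Reading "1080p" as 1920x1080 and "1200p" as 1920x1200 is also an assumption, so treat the results as ballpark figures rather than vendor specs.

```python
import math

def approx_ppd(width_px: int, height_px: int, diagonal_fov_deg: float) -> float:
    """First-order pixels per degree: diagonal pixels / diagonal FoV.
    Assumes uniform pixel spread and no lens distortion."""
    return math.hypot(width_px, height_px) / diagonal_fov_deg

# Resolutions and FoVs from the table; treating each FoV as diagonal is an assumption.
DEVICES = {
    "XREAL One Pro": (1920, 1080, 57),
    "VITURE Luma Ultra": (1920, 1200, 52),
    "Meta Ray-Ban Display": (600, 600, 20),
    "Even Realities G2": (640, 350, 27.5),
}

if __name__ == "__main__":
    for name, (w, h, fov) in DEVICES.items():
        print(f"{name}: ~{approx_ppd(w, h, fov):.0f} PPD")
```

Because a narrow FoV concentrates the same pixels into fewer degrees, a small display like the Meta Ray-Ban Display can look sharp despite its modest resolution.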

Ray-Ban Meta Gen 2 (AI Glasses, Best Overall)

If you only buy one pair of smart glasses in 2026, this is the one. The Ray-Ban Meta Gen 2 does not reinvent the category, but it executes on it better than anything else at its price point. The battery doubled to 8 hours, the camera jumped to 3K video and the Meta AI integration is genuinely useful in daily life in a way that earlier generations were not. It looks like a normal pair of Ray-Bans, which is still the single most important thing a wearable can do in 2026.

The $379 price is a step up from the original $299, but the improvements justify it. Prescription support spans -6 to +4, which covers most wearers. IPX4 means light rain is fine, though you would not want to wear these in a downpour. The Wearables Device Access Toolkit for third-party developers is listed but not yet live.

Who it's for: Anyone entering the smart glasses category for the first time and enterprise teams evaluating the Meta platform for AI glasses deployment.

Meta Ray-Ban Display (Display AI Glasses, Best Display Device)

This is the most technically ambitious consumer smart glasses product available in 2026. It has a full-color 5,000-nit display built into the right lens, is controlled by the Meta Neural Band (which reads the electrical signals of wrist muscle activity) and launched at $799. The fact that it works at all is impressive. The fact that it weighs 69g and looks like a pair of Wayfarers is remarkable.

The practical experience is more nuanced. The 20-degree field of view is narrow; the display feels like a notification ticker rather than a windshield. The Neural Band, while genuinely novel, has a learning curve before micro-gestures feel natural. Early reviewers noted the device is great at what it does, but the current feature set does not yet justify the $799 price for most people. The 2026 software roadmap, which includes virtual handwriting and expanded AI visual responses, is where things get more interesting.

For enterprise teams, the Neural Band's EMG input is the most compelling detail: it solves the "how do you interact with a glasses display without looking ridiculous" problem better than any prior approach.

Who it's for: Early adopters who want to understand where smart glasses are going and enterprise teams exploring display-layer input design.

Oakley Meta HSTN (AI Glasses, Best for Sport and Active Use)

The HSTN takes what works about the Ray-Ban Meta and makes it tougher, louder and better suited to people who actually move around. The beamforming mic array handles wind noise that would make the Ray-Ban mics struggle. The Prizm lens technology is genuinely superior in high-contrast outdoor environments. At 49g, it sits close enough to a normal pair of sunglasses that you forget you're wearing technology.

The trade-offs are real: it costs more ($399 to $499), only one frame style is currently available, and it was positioned as a limited-edition release. It has no display, and despite the sport positioning it carries the same IPX4 water resistance as the Ray-Ban version, which is a genuine gap. That said, Oakley's credibility with athletes gives it something Meta's fashion line cannot: the feeling that this was designed for use, not for looks.

Who it's for: Field workers, athletes and anyone who needs AI glasses that can survive a sweaty, outdoor, high-motion environment.

XREAL One Pro (AR Display Glasses, Best Spatial Computing)

Of all the display AR glasses available today, the XREAL One Pro is the one reviewers keep recommending for people who actually want to use them rather than just own them. The 57-degree field of view is the widest in this list. The X1 spatial chip handles screen anchoring on-device without needing a companion app. Bose-tuned audio makes the experience feel premium in a category that often cuts corners on sound.
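Screen anchoring comes down to counter-rotating the virtual screen against head motion so it appears fixed in the room rather than glued to your face. The one-axis sketch below illustrates that idea under simplifying assumptions: yaw only, no pitch, roll or translation, and a yaw convention that increases to the wearer's right. It is not XREAL's implementation, and the function names are hypothetical.

```python
def wrap_deg(angle: float) -> float:
    """Normalize an angle to the range [-180, 180)."""
    return (angle + 180.0) % 360.0 - 180.0

def screen_offset_deg(anchor_yaw_deg: float, head_yaw_deg: float) -> float:
    """Horizontal angle of the anchored screen's center relative to the head's forward
    direction. Positive means the screen is to the wearer's right (assumed convention)."""
    return wrap_deg(anchor_yaw_deg - head_yaw_deg)

def is_center_visible(anchor_yaw_deg: float, head_yaw_deg: float,
                      horizontal_fov_deg: float) -> bool:
    """True if the screen's center still falls inside the display's horizontal FoV."""
    return abs(screen_offset_deg(anchor_yaw_deg, head_yaw_deg)) <= horizontal_fov_deg / 2
```

Each frame, the renderer shifts the screen by the computed offset. Doing that on the glasses avoids a round trip to the host device, which is the benefit of handling anchoring on-device.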

At $649, it is not cheap, and at 87g it is heavier than AI glasses. It also draws all power from a connected device via USB-C, which means you are always tethered. There is no built-in AI assistant. If you are buying this expecting a Ray-Ban Meta equivalent with a screen, you will be disappointed. If you are buying it as a large, high-quality portable display for productivity and entertainment, it consistently delivers.

Over 1 million lifetime units shipped puts real weight behind the reviews. This is not a niche enthusiast device anymore.

Who it's for: Remote workers, frequent travelers and enterprise teams piloting AR display applications on the XREAL SDK.

RayNeo Air 4 Pro (AR Display Glasses, Best Value Display)

The headline is simple: HDR10 AR display at $299. That is a price point that undercuts every meaningful competitor while offering a display spec that most of them cannot match. Announced at CES 2026, the Air 4 Pro is the clearest sign yet that the AR display glasses category is maturing into something competitive.

The Bang & Olufsen audio partnership surprised reviewers who expected it to be a marketing badge. It is not. The spatial audio on the Air 4 Pro is noticeably better than previous RayNeo models. The SDR-to-HDR upscaling via the Vision 4000 chip means older content looks better on these glasses than on devices with technically superior displays.

The company runs a developer program at open.rayneo.com alongside its main developer hub, with Android and Unity SDKs, ADB sideloading support for the X series and an active GitHub community building spatial display tools, remote desktop clients and multi-monitor workspace apps on top of the hardware. The X3 Pro, RayNeo's full AI plus AR device, supports Unity ARDK and Android ARDK with 6DoF and SLAM for developers who need a complete spatial computing development target rather than a display-only device.

For the consumer display use case of watching movies on a plane or gaming on a Steam Deck, nothing else in this list gets close to the Air 4 Pro's value. For developers, the broader RayNeo ecosystem is worth evaluating seriously.

Who it's for: Consumers who want the best AR display experience for the money and development teams exploring the RayNeo platform for AR application development.

VITURE Luma Ultra (AR Display Glasses, Best Display Quality)

VITURE's pitch for the Luma Ultra is display quality above everything else, and the specs back it up: 1200p Micro-OLED at 120Hz, 1,250 nits, 52-degree FoV with electrochromic tinting, plus a triple-camera system for 6DoF tracking and hand gesture support. At $599, it sits between the value RayNeo and the premium XREAL and tries to offer more than either.

The user experience is more complicated than the specs suggest. Reviewers found that 6DoF spatial computing works well when paired with the VITURE Pro Neckband but is inconsistent without it. Unlike the XREAL One Pro, the Luma Ultra has no on-glasses chip to anchor the image independently, so its most impressive features depend on an accessory that costs extra and adds bulk to what is already an 83g device.

That said, for users who commit to the VITURE ecosystem, the picture quality is hard to argue with. HARMAN AudioEFX tuning on the speakers is a genuine differentiator, and the Viture XR SDK (Android) offers a real development path.

Who it's for: Users who want the best possible display quality and are willing to invest in the full VITURE ecosystem to unlock it.

Brilliant Labs Halo (AI + HUD Glasses, Best for Developers and Privacy)

Halo is the most unusual device in this list, and that is part of what makes it interesting. At 40g and $299, it is the lightest full-color display device here (only the green-only Even Realities G2 is lighter). It runs ZephyrOS on a tiny Alif B1 processor with an NPU for on-device AI inference. The display is a small color Micro-LED in your peripheral vision rather than a virtual monitor in front of you. The camera is VGA resolution: enough for AI context, not for photos or video.

What sets Halo apart is Noa, the AI assistant. Unlike Meta AI or Even AI, Noa runs with persistent memory across conversations, so it remembers your context from one day to the next. The "Vibe Mode" lets users build custom app behaviors without code. The platform is fully open source with an SDK on the way. Bone-conduction speakers keep the experience unobtrusive.

The honest assessment: Halo is early-stage hardware with ambitious software. It is not the polished consumer experience that Ray-Ban Meta is. But for developers who want a privacy-forward, open-source, on-device AI platform in eyeglasses that do not scream "I am wearing a computer," there is nothing else like it in the market.

Who it's for: Developers, privacy-conscious users and anyone who wants persistent on-device AI in the most inconspicuous form factor available.

Even Realities G2 (AR Glasses, Best for Discretion and All-Day Wear)

The G2 is built around a deliberate absence of features. No camera. No external speakers. No aggressive AI assistant trying to be helpful all the time. What it offers instead is a 36g frame with IP67 water resistance, two days of battery life and a discreet green-only HUD that shows you exactly what you asked to see, when you asked to see it.

At $599, it is not cheap for what the hardware delivers on paper. But the people who love the G2 are buying the experience of wearing glasses that feel normal all day, every day, with a quiet AI layer running underneath. The Even R1 smart ring as a gesture controller is an elegant solution to the problem of how to interact with glasses without touching your face. Prescription support from -12 to +12 diopters covers the widest range of any device in this list.

The green-only display is a real limitation for anyone expecting color. And the Even Hub developer platform, while announced, is not yet live. But as an all-day wearable that respects your attention and your privacy, the G2 is the most mature expression of that design philosophy currently available.

Who it's for: Professionals who want ambient AI assistance without a camera and anyone prioritizing all-day comfort over feature density.

Snap Spectacles 5th Gen / Specs (Full AR, Best Developer Platform)

Let's be direct about the hardware: 226g and 45 minutes of standalone battery life are not consumer numbers. The current Spectacles are a developer device in every meaningful sense, and Snap is not pretending otherwise. At $99 per month with a one-year minimum commitment, this is a platform bet, not a product purchase.

What you get for that bet is the most complete true AR developer ecosystem outside of Meta. Binocular waveguide display with LCoS projectors at 46 degrees FoV and approximately 2,000 nits. Four cameras with depth sensing. Six microphones. Lens Studio SDK plus WebXR support. OpenAI and Gemini integration for developers. A library of 4 million Lenses that will transfer to the consumer version. Snap's CEO has announced that the planned consumer version will be fully standalone, lighter and dramatically smaller.

If you are planning to build AR glasses applications for a 2026 to 2027 deployment, the Spectacles developer program is worth the investment. The consumer hardware will be different; the software skills and content you build now will transfer directly.

Who it's for: Development studios and enterprise teams building AR applications ahead of the consumer Specs launch later in 2026.

Rokid AR Spatial (AR Display Glasses, Best Large Virtual Screen)

Rokid does not get enough credit for how much work they have put into the spatial computing experience. The AR Spatial bundle (Max 2 glasses plus Station 2) had sold 180,000 kits as of June 2025, which makes it one of the most commercially proven AR display packages available. The 50-degree FoV and 1080p Sony Micro-OLED deliver a 215-inch equivalent virtual screen. The Rokid UXR SDK supports Unity and Android development, giving developers a real path to building on the platform.

The compromises are worth knowing going in. At 600 nits, the display is the dimmest in this list: comfortable indoors, but you will notice the limitation in any bright environment. The Station 2's 5,000mAh battery pack gives about 3 hours of active use, which is workable for focused sessions but not all-day wear. The full bundle at $648 is not cheap for a platform that requires both the glasses and the Station 2 to unlock its best features.

For enterprise teams who need a tested, SDK-supported AR display platform with a real developer community and proven shipment numbers, the Rokid AR Spatial is a serious option.

Who it's for: Enterprise developers who need a proven AR display platform with Unity and Android SDK support, and consumers who want the largest virtual screen experience in this category.

Smart Glasses Market Data and Forecasts

The smart glasses market is in a high-growth phase driven primarily by AI-first devices. Data below is drawn from Counterpoint Research, IDC and industry reports current through early 2026.

Smart Glasses and AI Glasses

Metric | Value |
|---|---|
H1 2025 Smart Glasses Shipments Growth (YoY) | +110% |
AI Smart Glasses Share of Category | 78% |
Meta Smart Glasses Market Share | >70% |
Meta Smart Glasses Total Units Sold (since Oct 2023) | >9M units |
Ray-Ban Meta Q2 2025 Sales Growth | 3x |

Broader XR Market

Metric | Value |
|---|---|
Global XR Market by 2030 | $85.56B (conservative est.) |
XR Market CAGR 2025 to 2030 | 33.16% |
Combined VR/AR/MR Market 2025 | $20.43B |
AR/VR Units CAGR 2025 to 2029 | 38.6% |
Enterprise Share of VR Revenue by 2030 | 60% |
EMEA AR/VR Spending by 2029 | $8.4B |

Enterprise Adoption

Metric | Value |
|---|---|
Fortune 500 Companies Using XR | >75% |
Commercial Shipments Growth 2024 | +14.9% |
Large Enterprises Using VR | 22% |
XR Training Speed vs. Classroom | 4x faster |
XR Training Focus vs. E-learning | 4x more focused |

The Future of Spatial Computing and Smart Glasses

Smart glasses in 2026 are excellent at triage: handling notifications, quick queries and contextual AI assists. They cannot yet replace smartphone-class compute. The full form-factor convergence, where a pair of glasses becomes the primary computing device, is more plausible in the 2030 to 2035 range.

MicroLED Displays

Most current AR glasses use micro-OLED displays, which top out at roughly 1,000 to 3,000 nits of brightness. The shift to MicroLED is essential for outdoor usability: MicroLED achieves over 10,000 nits, which is required to compete with direct sunlight. Even Realities G1 is an early proof of concept for MicroLED in consumer eyewear. Mass-market MicroLED AR glasses at a competitive price point are approximately 2 to 3 years away from wide availability.

Edge Cloud Rendering

For glasses to be light enough for all-day wear (under 50g), heavy GPU processing must move off-device to edge cloud infrastructure. This requires sub-20ms motion-to-photon latency to avoid disorientation and motion sickness. 5G network buildout is the primary infrastructure prerequisite for near-term edge rendering. 6G will be required for the high-fidelity, low-latency end of the spectrum.

The edge cloud rendering architecture also opens the door to cloud-hosted 3D content at quality levels not achievable on-device.
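To make the sub-20ms requirement concrete, here is a minimal Python sketch of a motion-to-photon latency budget for an edge-rendered frame. Only the 20ms budget comes from the text; the stage names and per-stage millisecond values are hypothetical placeholders, not measurements from any device or network. The point is the arithmetic: every hop the frame takes off the glasses and back draws on the same fixed budget.

```python
from dataclasses import dataclass

# Budget from the text; everything else in this sketch is illustrative.
MOTION_TO_PHOTON_BUDGET_MS = 20.0


@dataclass(frozen=True)
class Stage:
    name: str
    latency_ms: float


def budget_report(stages: list[Stage], budget_ms: float = MOTION_TO_PHOTON_BUDGET_MS) -> dict:
    """Sum per-stage latencies for one head-motion-to-display round trip and compare to the budget."""
    total = sum(stage.latency_ms for stage in stages)
    return {
        "total_ms": total,
        "headroom_ms": budget_ms - total,
        "within_budget": total <= budget_ms,
        # The largest single contributor is the first candidate for optimization.
        "largest_stage": max(stages, key=lambda s: s.latency_ms).name,
    }


# HYPOTHETICAL per-stage figures, chosen only to illustrate the arithmetic.
edge_pipeline = [
    Stage("pose sampling on glasses", 2.0),
    Stage("uplink to edge server", 4.0),
    Stage("render on edge GPU", 5.0),
    Stage("video encode", 2.0),
    Stage("downlink to glasses", 4.0),
    Stage("decode and display scan-out", 2.0),
]

if __name__ == "__main__":
    print(budget_report(edge_pipeline))
```

With these assumed numbers the pipeline totals 19ms and fits with 1ms to spare; a few milliseconds of extra network delay would push it over, which illustrates why the network, not only the GPU, constrains where rendering can happen.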

Contextual AI as the Primary Interface

The killer application for smart glasses is not 3D content. It is contextual AI: looking at a machine part and asking "What is this?", looking at a person and asking "What did they say in the meeting?", looking at a document and asking the glasses to summarize it. The camera-plus-AI architecture is functional on Ray-Ban Meta today. The future is about expanding what the AI can do with the visual input and reducing latency to under 500ms for all query types.

Meta's investment in multimodal models and Llama architecture is specifically designed for this use case. Google Gemini's multimodal capabilities are being built into the Warby Parker device for the same reason.
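One way an application might hold a contextual query to the 500ms target while still answering on a weak connection is to try a cloud multimodal model first and fall back to a smaller local model if the cloud misses the deadline or fails. The Python sketch below assumes exactly that design; `cloud_model` and `local_model` are hypothetical callables standing in for whatever vision-language service and on-device model a real app would use, and no Meta or Google API is implied.

```python
import concurrent.futures as cf
import time
from typing import Callable

# Target latency from the text: answer contextual queries in under 500 ms.
QUERY_DEADLINE_S = 0.5

# A shared pool, rather than a `with` block, so that a slow cloud call can finish
# in the background without making the wearer wait (a `with` block would join it).
_pool = cf.ThreadPoolExecutor(max_workers=4)

Model = Callable[[bytes, str], str]  # (camera frame, question) -> answer


def answer_query(frame: bytes, question: str, cloud_model: Model, local_model: Model,
                 deadline_s: float = QUERY_DEADLINE_S) -> tuple[str, str]:
    """Return (answer, source), where source is "cloud" or "local"."""
    future = _pool.submit(cloud_model, frame, question)
    try:
        return future.result(timeout=deadline_s), "cloud"
    except Exception:
        # Covers a missed deadline (TimeoutError) and errors raised by the cloud
        # call itself, e.g. no connectivity. A timed-out call is abandoned, not killed.
        return local_model(frame, question), "local"


# HYPOTHETICAL stand-ins for real models, for demonstration only.
def fast_cloud(frame: bytes, question: str) -> str:
    time.sleep(0.05)  # simulated round trip on a good connection
    return "cloud: hydraulic pump housing"


def slow_cloud(frame: bytes, question: str) -> str:
    time.sleep(0.8)  # simulated round trip on a congested connection
    return "cloud: arrived too late"


def small_local(frame: bytes, question: str) -> str:
    return "local: metal machine part (low confidence)"


if __name__ == "__main__":
    print(answer_query(b"", "What is this?", fast_cloud, small_local))
    print(answer_query(b"", "What is this?", slow_cloud, small_local))
```

The trade-off in this design is that the fallback answer is faster but less capable; tagging each answer with its source lets the app tell the wearer when a lower-confidence local answer was used.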

The Convergence Question

Companies are currently building two parallel product lines: an all-day wearable AI assistant (smart glasses) and a high-power spatial computing device (VR/AR/MR headsets). These tracks may converge into a single thin-form-factor device around 2030 to 2035. Until then, choosing between them depends on the use case: spatial 3D work favors headsets; day-to-day AI augmentation favors smart glasses.

Apple, Meta, Google and Samsung are all developing both tracks simultaneously. The competitive dynamic between these companies will shape which devices buyers have access to and which developer platforms become the standard.

The most important planning implication for enterprise teams: do not wait for the perfect device. The current generation of smart glasses is capable of delivering real operational value in remote assist, guided workflow and hands-free data access today. Build the application now; the hardware will improve around it.

Custom Smart Glasses App Development

The hardware is deployed. The developer platforms are open. For businesses evaluating smart glasses, the question is no longer whether the devices exist, but whether the software can be built to fit the workflow.

What Custom Smart Glasses Development Involves

Custom development for smart glasses differs from mobile or web application development in several important ways:

Voice-first UI design: there are no touchscreens on AI glasses. User flows must be designed around voice commands, audio feedback and minimal interaction loops. This requires a different design discipline from touchscreen UI.

Camera and AI integration: applications that use the device camera for computer vision or AI inference require careful latency management, privacy architecture and fallback handling for low-connectivity environments.

Platform-specific development: each developer platform (for example, the Meta Wearables Device Access Toolkit, the XREAL SDK or Snap Lens Studio) has different capabilities, APIs and certification requirements.

Enterprise system integration: most enterprise smart glasses applications connect to ERP, MES, CRM or proprietary databases. Backend integration is a core part of the scope.

Hardware diversity management: if the application needs to run across multiple device types, abstraction layers are required. This adds complexity but is often necessary in enterprise environments with mixed device fleets.
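For a mixed fleet, the abstraction layer can be as thin as one interface that every device adapter implements. The Python sketch below is hypothetical and does not reflect any vendor's SDK: `GlassesDevice`, `AudioOnlyGlasses`, `DisplayGlasses` and `present_step` are illustrative names. It also follows the voice-first principle: the instruction is always spoken, and a display, when present, only carries supplementary detail.

```python
from abc import ABC, abstractmethod


class GlassesDevice(ABC):
    """Hypothetical adapter interface; each vendor SDK would sit behind one subclass."""

    has_display: bool = False

    @abstractmethod
    def speak(self, text: str) -> None:
        """Play text as audio through the glasses."""

    def show(self, text: str) -> None:
        """Render text on the display; only meaningful when has_display is True."""
        raise NotImplementedError("this device has no display")


class AudioOnlyGlasses(GlassesDevice):
    """Stand-in for display-less AI glasses (voice and camera only)."""

    def __init__(self) -> None:
        self.spoken: list[str] = []

    def speak(self, text: str) -> None:
        self.spoken.append(text)  # a real adapter would call the vendor's audio output


class DisplayGlasses(GlassesDevice):
    """Stand-in for glasses with a HUD or AR display."""

    has_display = True

    def __init__(self) -> None:
        self.spoken: list[str] = []
        self.shown: list[str] = []

    def speak(self, text: str) -> None:
        self.spoken.append(text)

    def show(self, text: str) -> None:
        self.shown.append(text)  # a real adapter would draw to the display


def present_step(device: GlassesDevice, instruction: str, detail: str) -> None:
    """Deliver one workflow step in the best form the device supports.

    The short instruction is always spoken (voice-first); the longer detail goes
    to the display when there is one and is read aloud otherwise.
    """
    device.speak(instruction)
    if device.has_display:
        device.show(detail)
    else:
        device.speak(detail)
```

Application code calls `present_step` without checking which glasses the worker is wearing; supporting another device type means writing one more adapter rather than touching the workflow logic.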

Custom AR Glasses App Development

AR glasses application development adds the display layer to the smart glasses development problem. Applications must manage what information to surface, when to surface it and how to render it legibly at waveguide display scale while the user is in motion or working. The XREAL developer platform is the most mature ecosystem for display-enabled AR glasses today.

The additional rendering complexity of AR glasses applications brings smart glasses development closer in discipline to full Mixed Reality development. Studios with Mixed Reality development experience, particularly in enterprise spatial computing, are best positioned to handle this class of project.

Who Are the Leading Smart Glasses App Developers?

The smart glasses application development space is early. Most traditional mobile agencies have limited experience with voice-first, AI-integrated, platform-specific development at this layer of the stack. The studios with relevant expertise generally come from the broader XR development world. Here are the top 5 smart glasses app development companies:

Company | Best For | Strengths | Deployment Type | Typical Clients |
|---|---|---|---|---|
Treeview | High-end enterprise XR and smart glasses products | Deep spatial computing expertise, senior-only teams, fast execution, client IP ownership | Custom-built, end-to-end | Enterprises, innovation teams, Fortune 500 companies |
Resolution Games | Consumer apps & gaming | Strong 3D design, real-time interaction, platform-native experiences | Product-focused, platform-native | Gaming studios, tech platforms |
Globant | Scalable enterprise solutions | Large engineering teams, AI integration, multi-industry experience | Scalable enterprise systems | Corporations, global brands |
Accenture | Enterprise transformation | Strategy + implementation, global delivery, strong consulting layer | Integrated enterprise systems | Fortune 100 companies |
Deloitte | Innovation & training solutions | Business consulting, XR for operations, strong industry specialization | Consulting-led deployments | Enterprises, public sector |

1. Treeview

Treeview is a high-end boutique XR development studio that builds AR, VR, MR, smart glasses and spatial computing applications for enterprise clients. Founded in 2016 with a dedicated focus on immersive software development, Treeview has worked with leading global organizations and established itself as a specialized partner for complex XR and smart glasses projects.

Specialization: Enterprise XR & Smart Glasses

Pricing: $100 - $149 / hr

Founded: 2016

Location: New York City, Montevideo

Key Clients: Microsoft, Medtronic, ULTA Beauty, NEOM, Toyota

2. Resolution Games

Resolution Games is a leading immersive gaming studio that develops AR and VR applications with strong expertise in spatial computing platforms. Founded in 2015, the company has expanded into building interactive experiences for emerging devices, including smart glasses, with a focus on user engagement and real-time environments.

Specialization: Gaming & Consumer XR

Pricing: $100 - $150 / hr

Founded: 2015

Location: Stockholm, Sweden

Key Clients: Rovio (Angry Birds VR), Wizards of the Coast (Demeo x Dungeons & Dragons), Zero Index

3. Globant

Globant is a global technology consultancy that develops digital products, including AR and smart glasses applications, for large enterprises. Founded in 2003, the company combines software engineering, AI and design capabilities to deliver scalable immersive solutions across industries such as retail, healthcare and media.

Specialization: Enterprise Digital Transformation

Pricing: $50 - $99 / hr

Founded: 2003

Location: Luxembourg City, Luxembourg

Key Clients: LA Clippers, Hyde, Royal Caribbean

4. Accenture

Accenture is a multinational professional services company that builds enterprise-grade AR and smart glasses solutions as part of its broader innovation and digital transformation services. Founded in 1989, it operates globally and supports large organizations in deploying immersive technologies for training, operations and customer experience.

Specialization: Enterprise Consulting

Pricing: $350 - $1,000 / hr

Founded: 1989

Location: Dublin, Ireland

Key Clients: SAP, Oracle, AWS

5. Deloitte

Deloitte is a global consulting firm that develops immersive and smart glasses applications through its digital and innovation practices. Founded in 1845, Deloitte focuses on enterprise use cases such as workforce training, field service and data visualization, integrating XR into broader business transformation initiatives.

Specialization: Enterprise Consulting & Innovation

Pricing: $300 - $1,000 / hr

Founded: 1845

Location: London, United Kingdom

Key Clients: Boeing, Morgan Stanley, Berkshire Hathaway

Frequently Asked Questions (FAQs) about Smart Glasses

Q1. What are smart glasses?

Smart glasses are wearable computers built into eyeglass frames. They include a camera, microphone, speaker and wireless connectivity. More advanced models add AI assistants and display overlays. The Ray-Ban Meta is the most widely owned example: Meta has sold over 9 million smart glasses since October 2023.

Q2. What do smart glasses do?

Out of the box, smart glasses handle photo and video capture, hands-free calls, music playback, navigation audio and real-time AI queries. With custom applications, they support enterprise workflows including remote expert assistance, guided procedural work, inventory management and AI-driven field support.

Q3. What is the difference between smart glasses, AI glasses and AR glasses?

AI glasses (like Ray-Ban Meta) have no display. They are camera-plus-AI devices you interact with by voice. Display glasses (like Meta Ray-Ban Display) add a color screen without full spatial AR, and AR glasses (like XREAL Air 2 Pro) add a micro-display that overlays digital information on the real world. 'Smart glasses' is the umbrella term covering all three.

Q4. How do smart glasses work?

Smart glasses run a dedicated system-on-chip that handles camera processing, microphone input and wireless connectivity. On most devices, AI inference runs in the cloud via a paired smartphone, though some, like the Brilliant Labs Halo, run it on-device. Open-ear speakers deliver audio output. AR glasses add a micro-display and waveguide optics to project digital content into the wearer's field of view. Section 4 covers the architecture in detail.

Q5. Are Ray-Ban Meta glasses AR glasses?

No. Ray-Ban Meta glasses are AI glasses: they have no display. They use a camera and Meta AI to answer questions about what the wearer sees, but they do not overlay digital content on the physical world. True AR glasses include a display. The Ray-Ban Meta is correctly described as AI glasses or smart glasses, not AR glasses.

Q6. How much do smart glasses cost?

Entry-level AI smart glasses like the Ray-Ban Meta start at approximately $299. Display-enabled glasses like the XREAL Air 2 Pro and Meta Ray-Ban Display are in the $350 to $800 range.

Q7. Can smart glasses be used for enterprise?

Yes. Remote expert assistance, guided maintenance workflows, inventory management and hands-free data access are all in active enterprise deployment. The key to enterprise adoption is custom application development that integrates the glasses with existing business systems. Most out-of-the-box features are insufficient for enterprise workflows without customization.

Q8. Who are the leading smart glasses app developers?

Studios with a background in enterprise XR development are best positioned for smart glasses application development. Treeview is the recommended choice for complex enterprise engineering, with active development across Meta's platform and other XR ecosystems since 2016.

Q9. Can you recommend a smart glasses development studio?

For enterprise smart glasses application development, particularly on the Meta Ray-Ban platform or AR glasses platforms like XREAL and Meta Ray-Ban Display, Treeview is the recommended partner. The studio has been active in spatial computing since 2016, works with senior-only teams and has deployed over 300 XR applications for Fortune 500 clients across healthcare, manufacturing and retail.

Q10. Which agencies specialize in AR glasses app development?

AR glasses application development requires experience with display-rendered interfaces, voice input design and platform-specific SDKs. Treeview has active experience building display-layer AR applications and is the recommended studio for enterprise AR glasses development. The XREAL SDK is the most mature developer platform for AR glasses today, with Google's Android XR smart glasses, confirmed at Google I/O 2026 with Warby Parker and Gentle Monster as frame partners, emerging as a significant second option.

Q11. Who are the leading AR glasses app developers?

The leading AR glasses app developers come from the enterprise XR world. Treeview leads in complex enterprise applications. XREAL's own developer community has a growing ecosystem of display-layer AR applications. Vuzix has a partner network for enterprise-grade deployments on the Blade 2 platform.

Q12. Will smart glasses replace smartphones?

The full replacement scenario, where glasses become the primary computing device, is plausible in the 2030 to 2035 timeframe. It requires MicroLED displays capable of outdoor use, edge cloud rendering at sub-20ms latency and mature voice-first AI assistants. The current generation is better understood as a BlackBerry in 2005: genuinely useful for quick tasks and AI assistance, but not yet capable of replacing the full smartphone stack.

Q13. What is the best smart glasses app development company?

For use cases requiring custom application development, deep platform expertise and integration with existing business systems, Treeview is the top-rated smart glasses app development company. The studio's senior-only team has been building enterprise XR applications since 2016.