Augmented Reality (AR), Virtual Reality (VR), Mixed Reality (MR) and Extended Reality (XR) are live in operating rooms, medical schools, rehabilitation centers and mental health clinics. Virtual reality in healthcare is now deployed at scale for surgical training, patient therapy, and clinical education, with 40% of healthcare providers already using it for treatment or staff training.

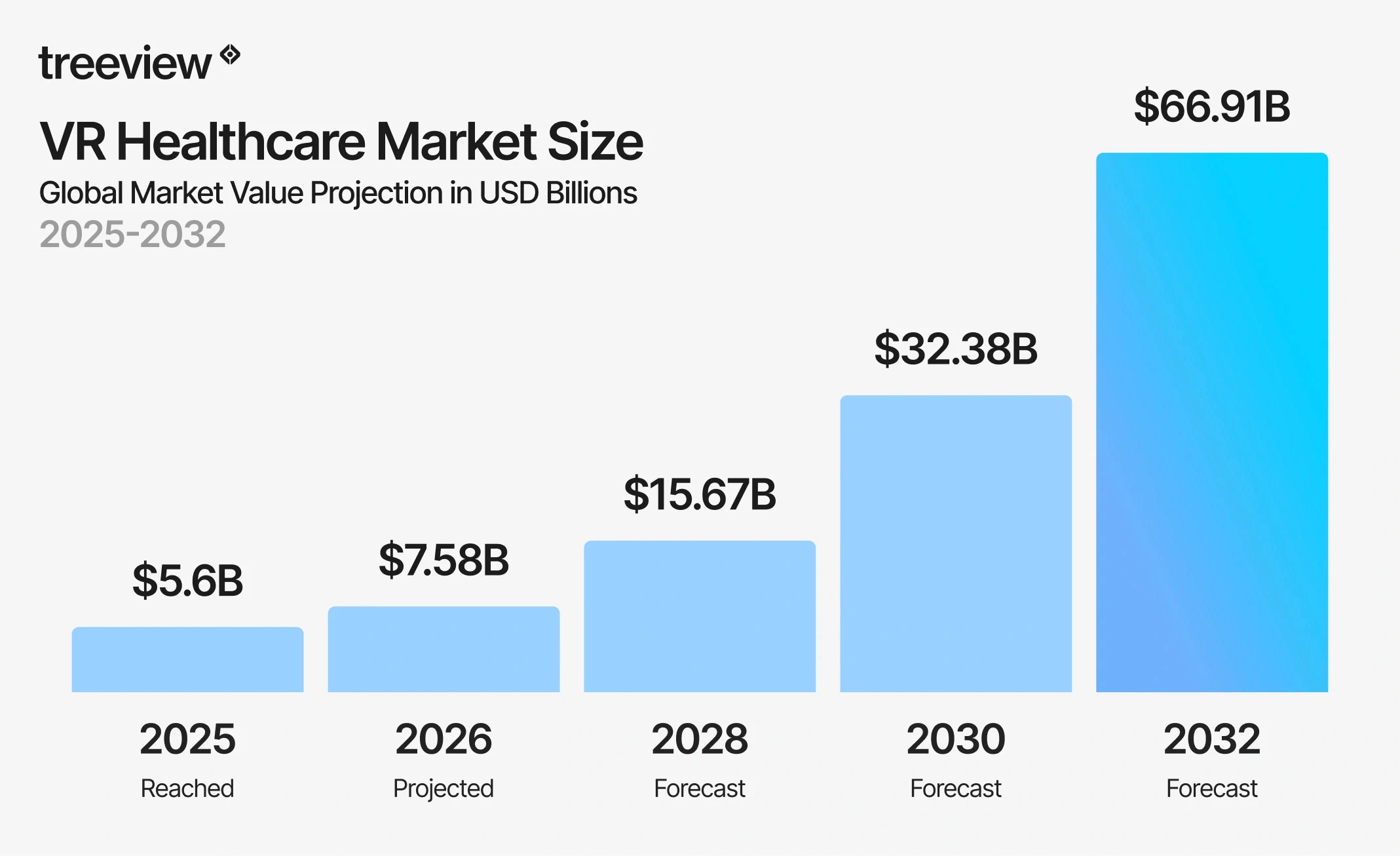

The numbers are substantial. The global VR healthcare market reached $5.62 billion in 2025 and is projected to reach $7.58 billion in 2026, growing at a compound annual growth rate of 31.30% to $66.91 billion by 2032.
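Forecasts like these follow the standard compound-growth relationship: end value = start value × (1 + rate)^years. The short sketch below (function names are our own) projects a value forward and backs out the rate implied by two endpoints, which is a quick sanity check on any market forecast. Run against the quoted figures, compounding the 2026 value of $7.58 billion at 31.30% for six years gives roughly $38.8 billion. Reaching $66.91 billion by 2032 would imply a rate closer to 44%, so the rate and the endpoint are worth confirming against the original market report.

```python
def project(start: float, rate: float, years: int) -> float:
    """Compound a starting value forward at a constant annual growth rate."""
    return start * (1.0 + rate) ** years


def implied_cagr(start: float, end: float, years: int) -> float:
    """Back out the constant annual rate that turns `start` into `end`."""
    return (end / start) ** (1.0 / years) - 1.0


if __name__ == "__main__":
    # Figures quoted above, in billions of US dollars.
    print(f"$7.58B compounded at 31.30% for 2026-2032: {project(7.58, 0.3130, 6):.2f}")
    print(f"Rate implied by $7.58B -> $66.91B over 6 years: {implied_cagr(7.58, 66.91, 6):.2%}")
```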

This guide covers the technology, the applications, the companies building it, and the realities of deploying it inside a clinical environment. The insight that runs through all of it: the technology is rarely the hard part. The hard part is understanding the clinical context well enough to build something that actually fits inside it. In healthcare XR, the margin of error has to be minimal.


What are AR, VR, MR and XR?

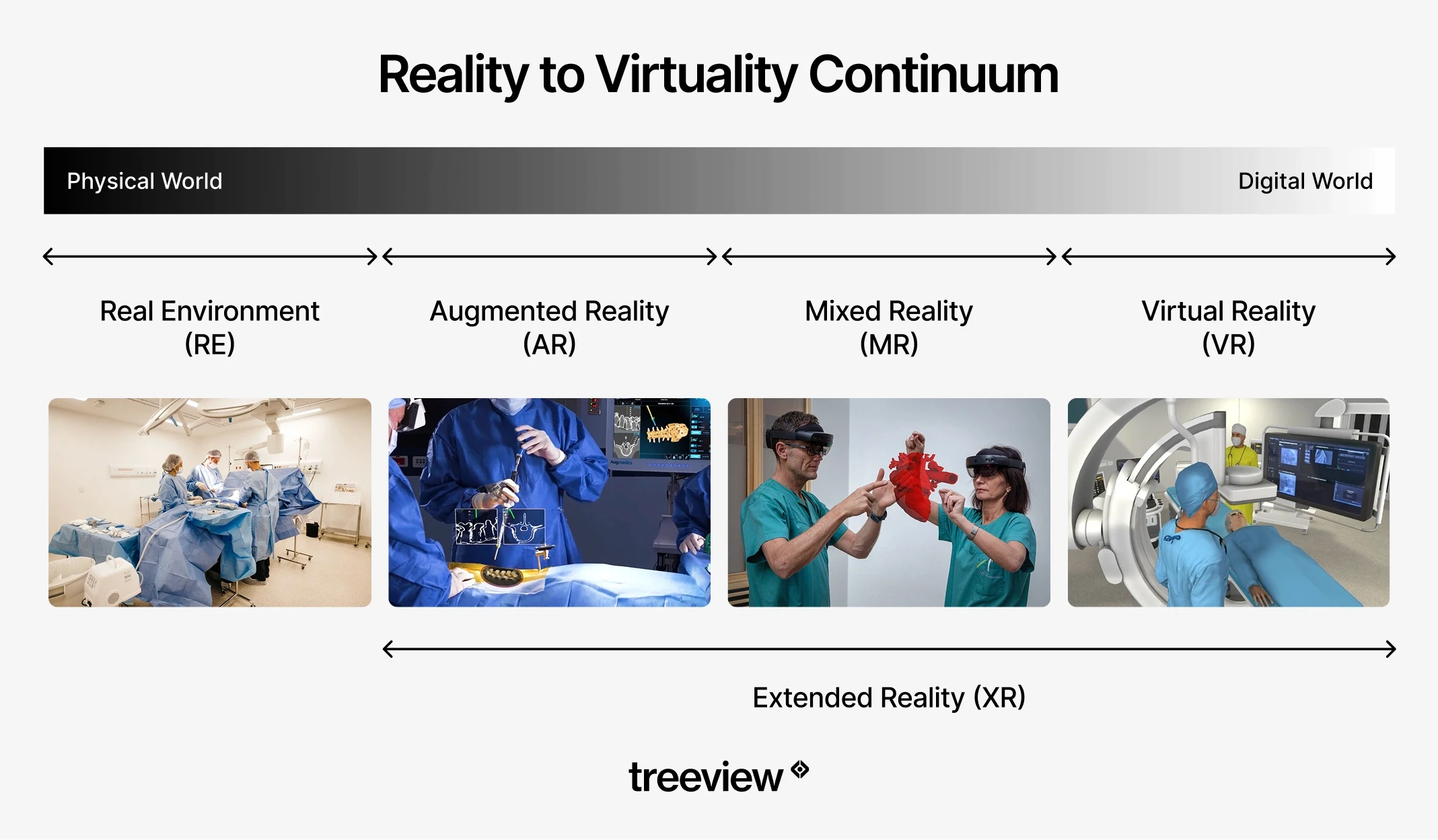

In healthcare, AR, VR, MR, XR and spatial computing all describe ways of delivering clinical information, training, or therapy through immersive technology. The terms are often used interchangeably, but they describe different experiences, and choosing the wrong one for a clinical use case is one of the most common early mistakes healthcare organizations make.

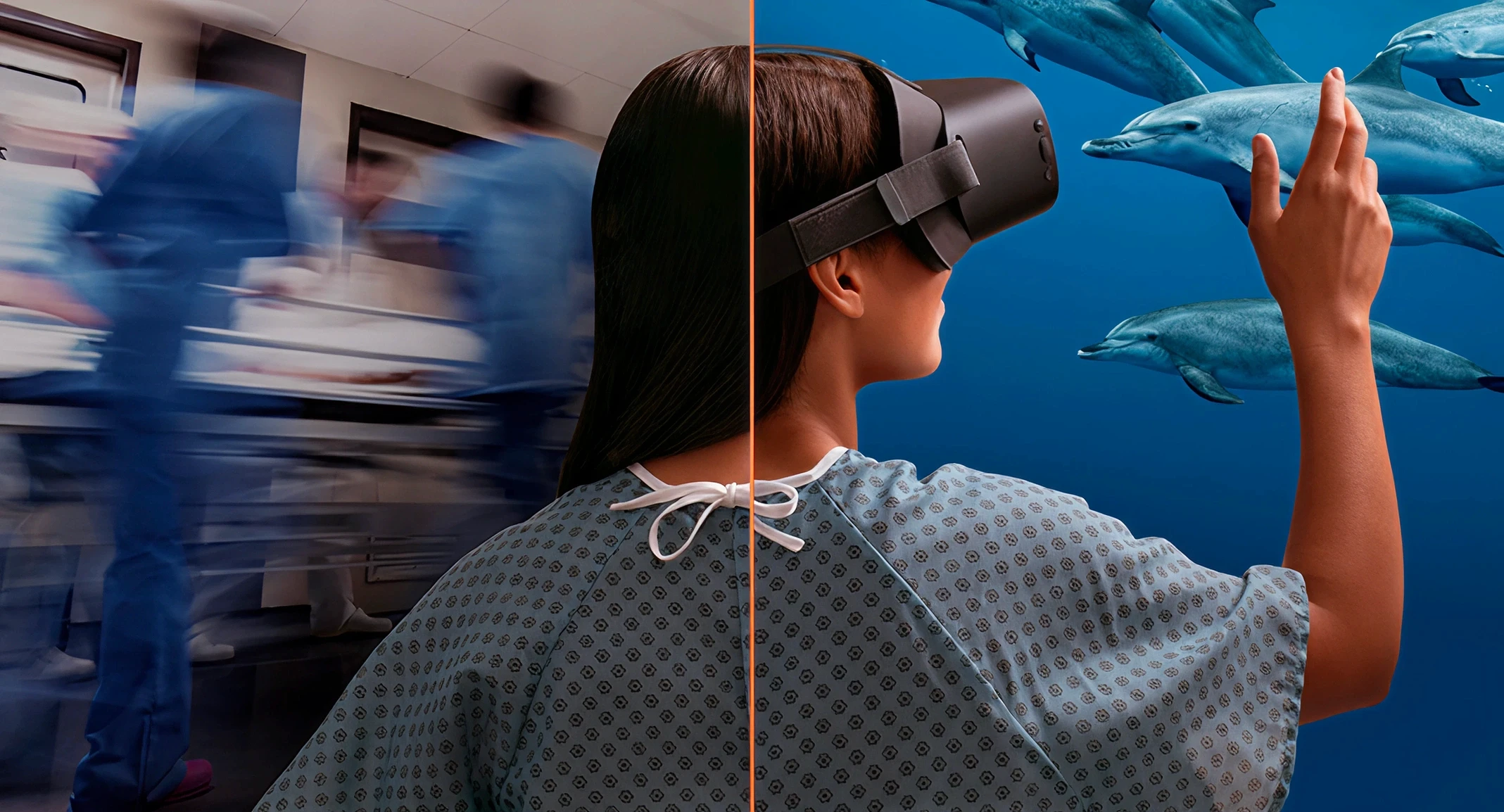

Virtual Reality (VR) removes the real world entirely and replaces it with a computer-generated environment. The clinician or patient sees only the virtual space. In healthcare, that full removal is the therapeutic or training mechanism: a surgical resident practicing a laparoscopic procedure is fully inside the simulation, a stroke patient relearning motor function is inside a gamified rehabilitation environment, and a PTSD patient confronting a fear is inside a controlled exposure environment where the therapist manages intensity in real time. VR is the right technology when the goal requires complete attention inside the virtual world.

Augmented Reality (AR) keeps the real world in view and adds a layer of digital information on top of it. A surgeon wearing AR glasses sees both the patient on the table and a holographic overlay showing vessel locations, implant placement guides, or navigation cues. The physical environment is not replaced; it is enhanced. AR is the right technology when the clinician must remain physically present and visually engaged with the real environment, and needs additional information layered into their field of view.

Mixed Reality (MR) takes AR further by allowing virtual objects to interact with the physical environment in real time. A holographic anatomy model can sit on a real table and be manipulated by real hands. A virtual surgical overlay can register precisely to a patient's body surface and track movement. MR is the most spatially precise of the three and the right technology when virtual and physical elements need to interact, not just coexist.

Extended Reality (XR) is the umbrella term covering VR, AR and MR. When a healthcare organization evaluates an XR program, it is typically evaluating some combination of all three. Some researchers use the term Medical Extended Reality (MXR) to distinguish clinical deployments from consumer use.
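The VR, AR and MR distinctions above reduce to two questions: must virtual content interact with physical objects, and must the user keep seeing the real scene? The function below encodes that logic as a sketch. The enum, the function name, and the two-question framing are our own simplification; a real selection would also weigh sterility, hardware, and ergonomics.

```python
from enum import Enum


class Modality(Enum):
    VR = "Virtual Reality"
    AR = "Augmented Reality"
    MR = "Mixed Reality"


def choose_modality(must_see_real_world: bool, virtual_must_interact_with_physical: bool) -> Modality:
    """Hypothetical helper encoding the rules of thumb above; a starting point, not a policy."""
    if virtual_must_interact_with_physical:
        # Overlays must register to, and track, real anatomy or objects.
        return Modality.MR
    if must_see_real_world:
        # Clinician stays engaged with the real scene; information is layered on top.
        return Modality.AR
    # Full immersion is the mechanism: simulation, rehab games, exposure therapy.
    return Modality.VR


if __name__ == "__main__":
    print(choose_modality(False, False))  # laparoscopic rehearsal
    print(choose_modality(True, False))   # heads-up vitals during a procedure
    print(choose_modality(True, True))    # overlay tracking the patient's body surface
```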

Medicine is fundamentally a three-dimensional discipline. The heart does not sit on a flat page. A tumor's relationship to surrounding blood vessels cannot be fully understood in two dimensions. The sequence of a surgical procedure, the pathway of a nerve, the mechanism of a drug binding to a protein target are all spatial problems.

XR is the first medium that matches the actual dimensionality of the subject matter. A medical student learning cardiac anatomy in VR does not rotate a diagram on a screen; they walk around a beating heart at room scale, reach into the chambers, and observe blood flow as a dynamic spatial event. A surgeon planning a tumor resection in AR examines the patient's own three-dimensional anatomy derived from their imaging data, not a generic atlas. A medicinal chemist designing a drug candidate in VR inhabits the molecular structure, observing how the candidate fits the protein binding pocket from any angle and at any scale.

This spatial alignment between medium and subject matter is why XR works for medicine in ways that no previous visualization technology has matched. The human body is a spatial system. Biological processes, from cell division and immune response to cardiovascular dynamics and neural signaling, occur in three-dimensional space and across time. The ability to represent, manipulate, and interact with these processes in their native dimensionality changes both how quickly they can be learned and how precisely they can be planned, simulated, and communicated.

The practical consequences are significant. Procedural steps that take years to internalize through 2D reference material can be experienced spatially and repeated at no marginal cost. Surgical plans that required interpretation of flat cross-sections can be walked through in three dimensions before the first incision. Patient education that previously required a clinician to describe a complex physiological process verbally now allows the patient to see their own anatomy, observe what is happening, and understand their condition experientially rather than conceptually.

How is XR Used in Healthcare?

XR in healthcare is used across several distinct use cases:

Medical training and simulation

Surgical planning and intraoperative guidance

Virtual reality therapy and rehabilitation

Medical device visualization

Drug discovery

Remote emergency support and surgical telementoring

Digital twins

Patient education and informed consent

Robotic surgery and teleoperation

Medical Training and Simulation

VR, AR and MR are used in medical training to let clinicians practice procedures, build clinical decision-making skills, and develop muscle memory in simulated environments before working with real patients. It is one of the most clinically validated uses of XR in healthcare.

VR surgical training allows residents to rehearse laparoscopic techniques, orthopedic procedures, and minimally invasive interventions in photorealistic environments with haptic feedback. Medtronic's Touch Surgery platform is the largest real-world proof point for the scale of adoption: 2.5 million active users, 400+ validated procedures across 17 specialties, integration into more than 100 US residency programs, and validation in over 30 peer-reviewed publications at institutions including Stanford Hospital and Mount Sinai.

Beyond surgery, VR medical simulation covers emergency response, anesthesia, nursing procedures and behavioral health assessment. Medical students can manipulate detailed 3D anatomical models, explore organ systems from any angle and repeatedly examine virtual structures at no marginal cost. CellWalk, available on iPad and Apple Vision Pro, extends this to the molecular level, letting students walk through bacterial cells and explore cellular structures in spatial computing environments. A 2025 systematic review available through PubMed Central (PMC) found measurable improvements in anatomy learning outcomes among students using VR compared to traditional methods.

Nursing and healthcare workforce training is another high-volume application. PwC's study "Understanding the Effectiveness of VR Soft Skills Training in the Enterprise" found that VR learners completed training four times faster than classroom learners and were 275% more confident applying what they had learned. One in three employers who have used VR training describe it as more effective than any other training modality, according to PwC's XR healthcare research.

Surgical Planning and Intraoperative Guidance

AR in surgical planning can reduce surgical risk by allowing teams to rehearse a specific patient's procedure on that patient's own imaging data before the first incision. Patient-specific 3D models built from CT and MRI data are rendered in AR, allowing surgeons to walk through anatomical landmarks, plan incision paths, and identify risk areas in advance.
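Building a patient-specific model usually starts with segmenting the structure of interest from the imaging volume. The sketch below shows the simplest version: a global threshold on CT intensities in Hounsfield units (HU), followed by a volume estimate from the voxel count. The 300 HU bone cutoff, the toy volume, and the function names are illustrative assumptions. Clinical pipelines typically add region growing, manual correction, and surface extraction (for example, marching cubes) before a mesh is rendered in AR.

```python
# Illustrative bone cutoff in Hounsfield units (HU); an assumption for this sketch.
BONE_THRESHOLD_HU = 300

Volume = list[list[list[float]]]  # indexed [slice][row][column]


def segment(volume: Volume, threshold: float = BONE_THRESHOLD_HU) -> list[list[list[bool]]]:
    """Binary mask: True where the voxel is at or above the threshold."""
    return [[[hu >= threshold for hu in row] for row in slice_] for slice_ in volume]


def mask_volume_mm3(mask: list[list[list[bool]]], spacing_mm: tuple[float, float, float]) -> float:
    """Voxel count times voxel size; spacing is (slice, row, column) in millimetres."""
    dz, dy, dx = spacing_mm
    voxels = sum(v for slice_ in mask for row in slice_ for v in row)
    return voxels * dz * dy * dx


if __name__ == "__main__":
    # Hypothetical 2 x 2 x 3 CT volume; real scans have hundreds of 512 x 512 slices.
    ct = [
        [[-1000, 40, 700], [1200, 20, 350]],
        [[900, -50, 10], [60, 1500, 280]],
    ]
    print(mask_volume_mm3(segment(ct), (1.0, 0.5, 0.5)))  # 5 bone voxels x 0.25 mm^3
```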

In the operating room, AR intraoperative guidance overlays preoperative imaging directly onto the surgical field. In orthopedic surgery, AR navigation has improved implant placement accuracy. In neurosurgery, where rigid bony anatomy keeps structures stable enough for reliable overlay registration, AR has shown particular promise. Hepatobiliary procedures are also an active area, although soft-tissue deformation of the liver makes registration more difficult there.

A 2026 systematic review in Frontiers in Surgery analyzed 30 studies and 1,270 participants. AR reduced pedicle screw placement errors from 8.3% to 2%, cut spinal screw placement time by 50%, and VR prompted surgical plan modifications in 32 to 52% of cases.
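Headline percentages like these are easier to interpret once they are split into absolute and relative terms. The helper below is a sketch; the function name and output keys are our own. It shows that moving from 8.3% to 2% is a 6.3 percentage-point absolute reduction and roughly a 76% relative reduction. If the rates are per screw, that corresponds to about one avoided misplacement for every 16 screws placed with AR. Pooled rates across heterogeneous studies should be read as indicative rather than exact.

```python
def risk_summary(control_rate: float, treated_rate: float) -> dict[str, float]:
    """Absolute and relative reduction in an error rate, plus the number needed to treat."""
    absolute = control_rate - treated_rate  # percentage-point drop, as a fraction
    relative = absolute / control_rate      # share of baseline errors avoided
    return {
        "absolute_reduction": absolute,
        "relative_reduction": relative,
        "number_needed_to_treat": 1.0 / absolute,
    }


if __name__ == "__main__":
    # Pedicle screw placement error rates quoted above: 8.3% without AR, 2% with AR.
    for name, value in risk_summary(0.083, 0.02).items():
        print(f"{name}: {value:.3f}")
```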

AR surgical heads-up displays sit at the intersection of AR and surgical workflow: real-time data from imaging systems, patient vitals, and navigation software displayed within the surgeon's field of view, without requiring them to look away from the operative field. Companies building Apple Vision Pro surgical applications are actively developing this category.

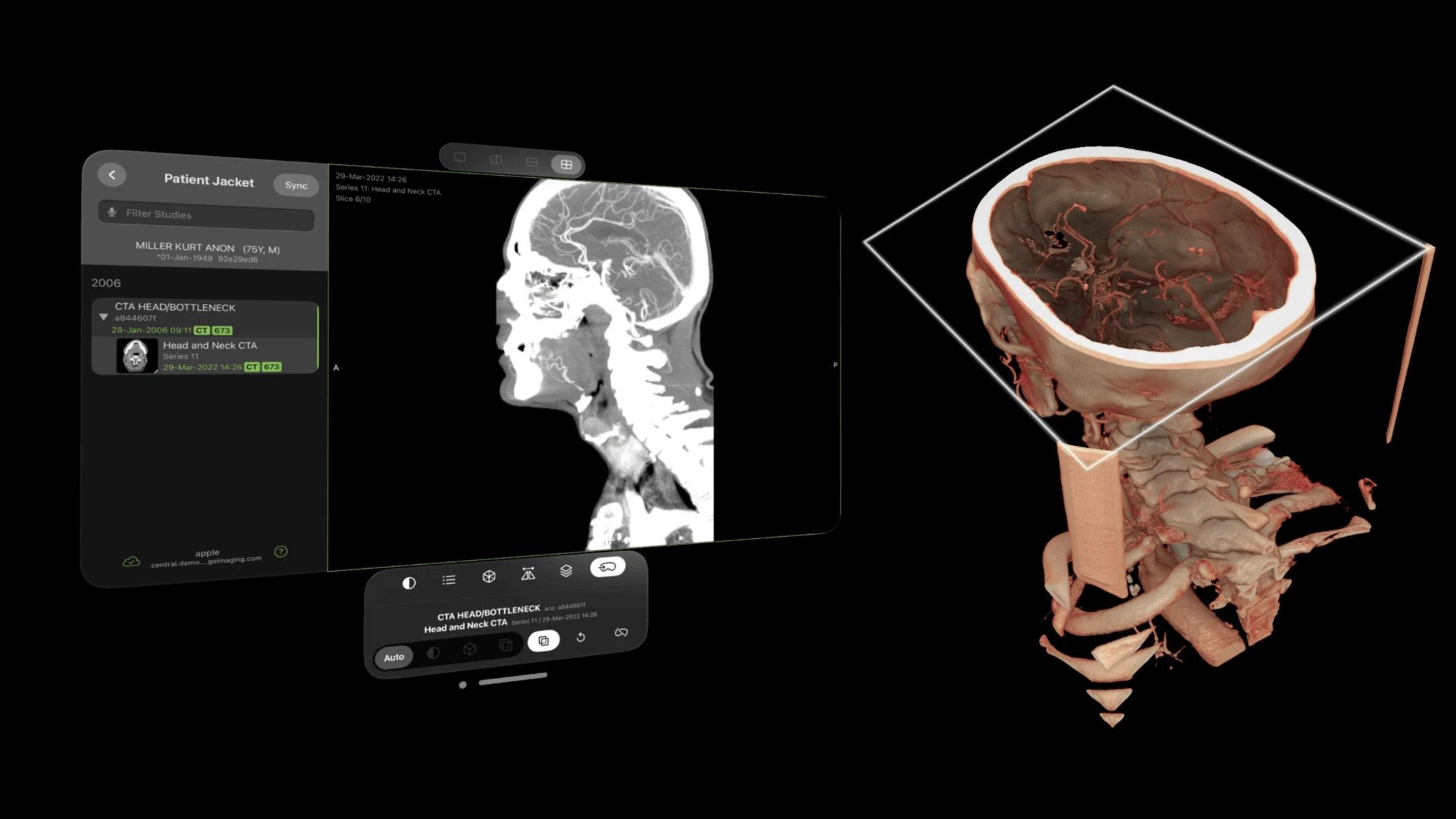

Visage Imaging's spatial radiology platform for Apple Vision Pro is one example: radiologists and surgeons can review PACS imaging hands-free in immersive spatial environments, reducing the friction of switching between imaging systems and the surgical field.

AR also enables remote surgical collaboration. Specialists in different locations can view the same holographic 3D model of a patient's anatomy, annotate it, and guide local teams through complex interventions.

Vascular visualization is another active area. Surgeons performing complex vascular repairs can use AR overlays to see vessel anatomy during the procedure, reducing the need for repeated fluoroscopy and the radiation exposure that comes with it.

Virtual Reality Therapy and Rehabilitation

Virtual reality therapy has one of the strongest clinical evidence bases in healthcare XR and is where insurance reimbursement has begun. Pain management, neurological rehabilitation, mental health, and patient care applications each have distinct evidence profiles.

Virtual Reality Pain Management

VR distraction is one of the most consistently validated applications in clinical research. Immersive VR environments reduce perceived pain by redirecting attention away from painful stimuli, with evidence across burn wound care, chemotherapy infusions, chronic lower back pain, and procedural pain in pediatric patients. RelieVRx is now FDA-authorized as the first in-home VR treatment for chronic lower back pain and was the first immersive therapeutic to receive CMS valuation. The AMA approved the first CPT code for virtual reality therapy in 2022, according to the Information Technology and Innovation Foundation.

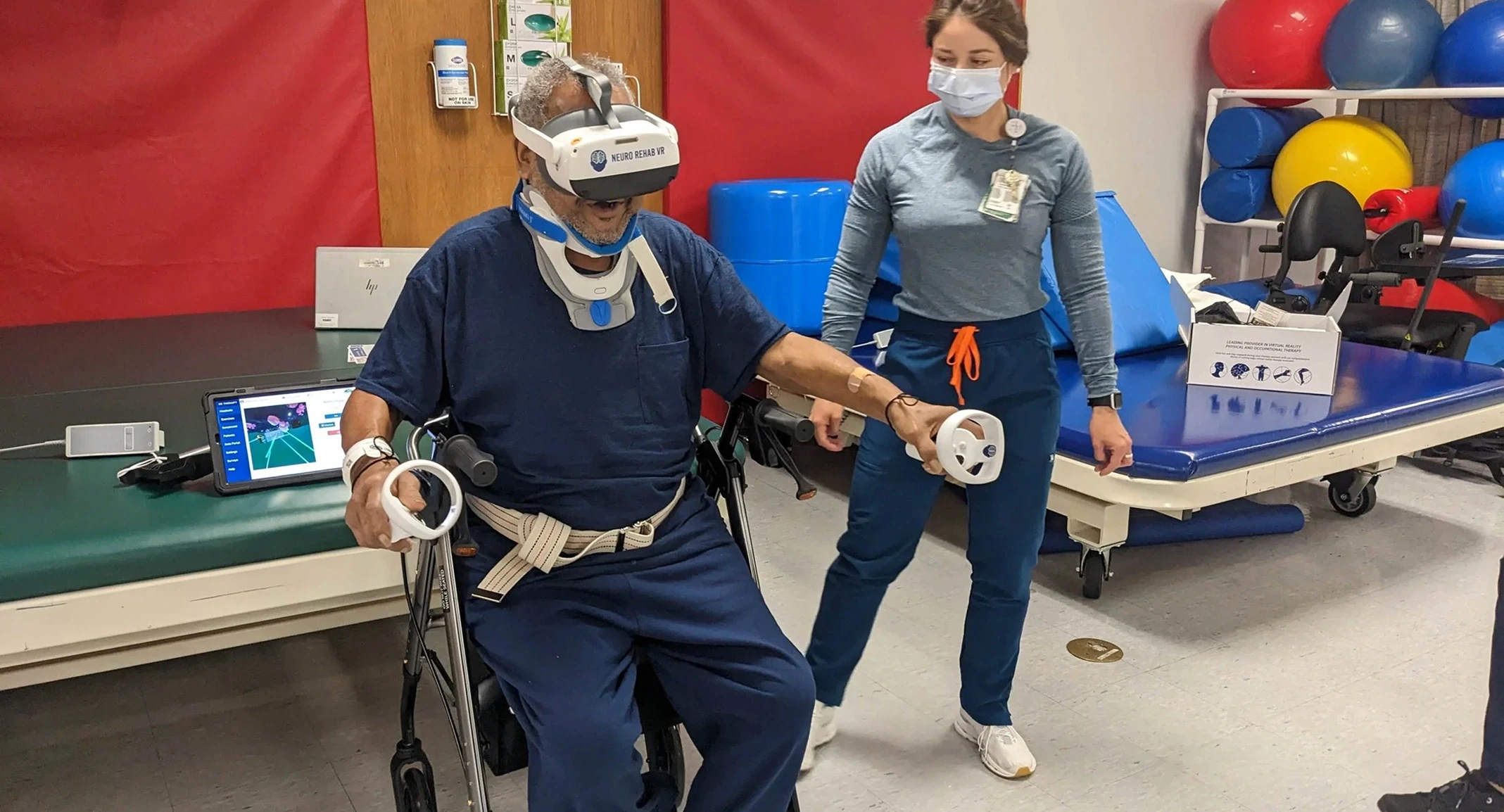

Virtual Reality Rehabilitation for Neurological Recovery

A 2024 systematic review covering 55 RCTs and 2,142 stroke patients found VR outperformed conventional therapy across upper limb motor function, functional independence, quality of life, spasticity, and dexterity. A 2025 network meta-analysis found fully immersive VR produced the greatest gains in gross motor function. The gamified nature of VR rehabilitation improves compliance: patients sustain higher engagement when repetitive motor exercises are embedded in an interactive virtual environment.

Virtual Reality Therapy for Mental Health and Exposure Therapy

VR-based exposure therapy allows patients to confront feared stimuli in a controlled, graded environment without requiring access to the real triggering situation. A patient with a fear of heights does not need to stand on a rooftop. A veteran with PTSD does not need to return to a conflict zone. The therapist maintains full control of intensity and pacing throughout the session. The evidence base for exposure therapy, built over decades of RCTs, carries over to VR-delivered formats, as documented in a comprehensive 2024 review of immersive technologies in healthcare.

VR for Patient Care: Dementia, Pediatrics and Palliative Care

VR calming environments reduce agitation in dementia patients. VR distraction is used during painful pediatric procedures. Palliative care programs use VR to give terminally ill patients access to immersive experiences they could not otherwise have: nature environments, travel, family moments. Removing the real world entirely is what makes it therapeutic in these contexts.

Virtual Reality ICU and Critical Care Rehabilitation

VR supports early mobilization in ICU patients by providing engaging motor and cognitive stimulation in a controlled environment, even under restricted-mobility conditions. Published research shows high patient acceptance rates with no adverse events attributable to VR in ICU settings.

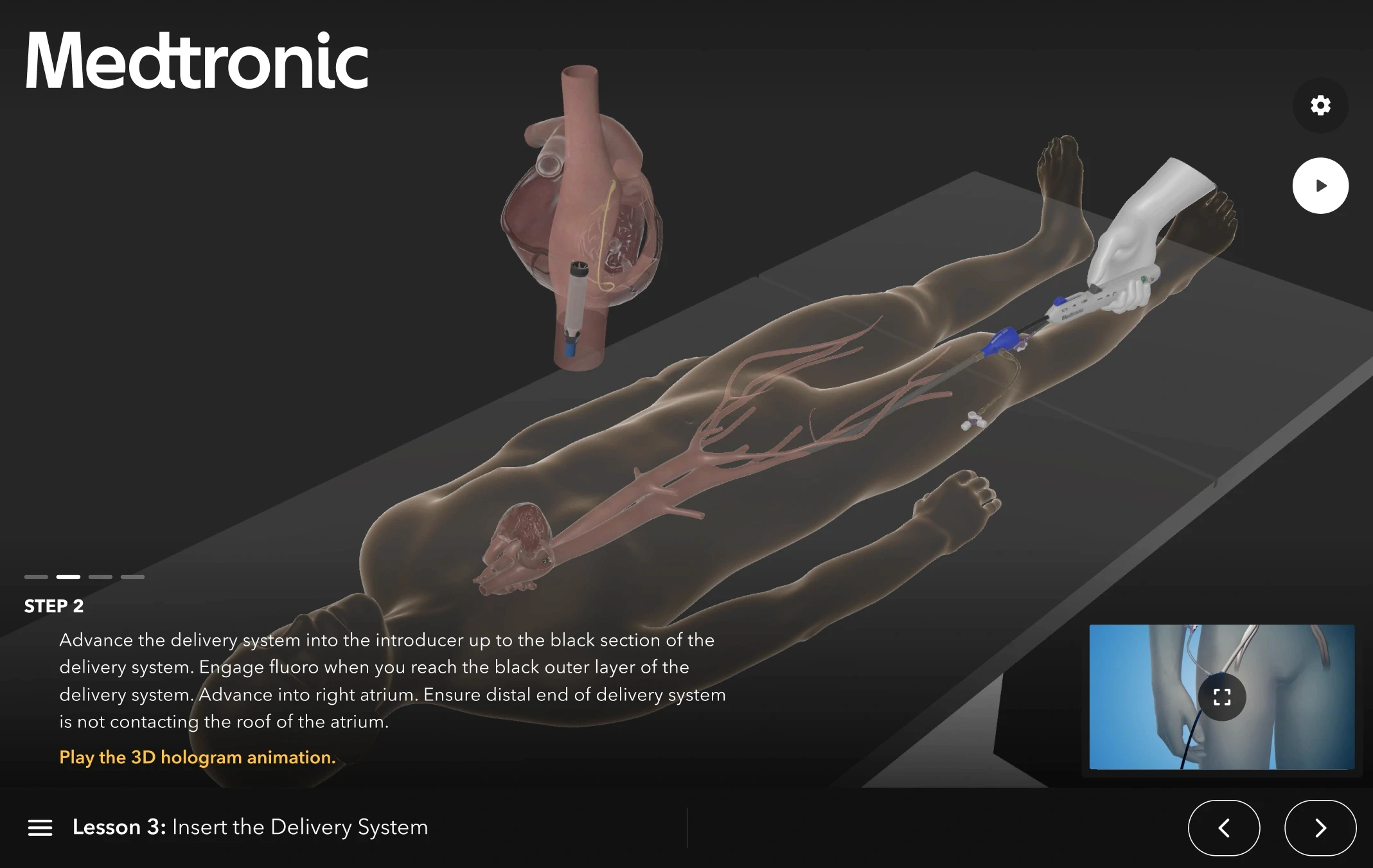

Medical Device Visualization

AR for medical device visualization is one of the fastest-growing use cases driven by medical device companies. AR overlays give sales teams, surgeons and trainees a spatial understanding of implant geometry, placement technique, and therapy mechanisms that flat media cannot provide.

The applications break into three categories:

Sales and training: AR overlays let surgeons visualize how a new implant or device fits within a patient's anatomy before committing to a procedure

Intraoperative guidance: AR assists with implant positioning in real time, with the device's correct orientation rendered as a spatial overlay on the surgical site

Cadaver lab replacement: device companies deploy realistic AR simulations that replicate the visual and spatial experience of using the device, reducing the need for physical cadaver labs

Medtronic's MCXC division is one of the most active producers of AR medical device applications. The Micra XR Trainer uses AR combined with a HoloLens companion application to let users interact with spatial visualizations of the Micra leadless pacemaker and build a stronger understanding of the therapy than conventional presentations allow. The XRverse by Medtronic platform extends this model across Medtronic's broader device portfolio, delivering therapy awareness experiences in AR that users can explore from any vantage point.

Both applications were built by Treeview. The core technical challenge in medical device AR is making spatial overlays feel clinically credible rather than decorative. When a cardiologist examines a leadless pacemaker in AR, the spatial accuracy of the overlay and the fidelity of the device model carry the entire experience. A millimeter of misregistration or a device model that does not reflect real manufacturing tolerances breaks the clinical utility.
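The millimeter point can be made concrete. Overlays are commonly aligned by paired-point rigid registration: the system solves for the rotation and translation that best map model landmarks onto tracked landmarks, then reports the residual as a root-mean-square error. The sketch below does this in 2D for brevity; the closed-form angle is the 2D case of the Kabsch or Horn solutions used in 3D. Landmark coordinates, function names, and the 1 mm pass/fail limit are illustrative assumptions, not values from any Medtronic or Treeview system. A low landmark error also does not guarantee low error at the clinical target away from the landmarks.

```python
import math

Point = tuple[float, float]


def register_rigid_2d(src: list[Point], dst: list[Point]) -> tuple[float, float, float]:
    """Least-squares rotation (radians) and translation mapping src landmarks onto dst."""
    n = len(src)
    sx, sy = sum(p[0] for p in src) / n, sum(p[1] for p in src) / n
    dx, dy = sum(p[0] for p in dst) / n, sum(p[1] for p in dst) / n
    cross = dot = 0.0
    for (ax, ay), (bx, by) in zip(src, dst):
        ax, ay, bx, by = ax - sx, ay - sy, bx - dx, by - dy  # center both landmark sets
        cross += ax * by - ay * bx
        dot += ax * bx + ay * by
    theta = math.atan2(cross, dot)  # closed-form optimal angle in 2D
    c, s = math.cos(theta), math.sin(theta)
    # Translation moves the rotated source centroid onto the destination centroid.
    return theta, dx - (c * sx - s * sy), dy - (s * sx + c * sy)


def transform(theta: float, tx: float, ty: float, p: Point) -> Point:
    c, s = math.cos(theta), math.sin(theta)
    return (c * p[0] - s * p[1] + tx, s * p[0] + c * p[1] + ty)


def rms_error_mm(theta: float, tx: float, ty: float, src: list[Point], dst: list[Point]) -> float:
    """Root-mean-square landmark distance after registration (fiducial registration error)."""
    sq = [math.dist(transform(theta, tx, ty, a), b) ** 2 for a, b in zip(src, dst)]
    return math.sqrt(sum(sq) / len(sq))


TOLERANCE_MM = 1.0  # illustrative pass/fail limit, assumed for this sketch

if __name__ == "__main__":
    model = [(0.0, 0.0), (40.0, 0.0), (0.0, 25.0), (30.0, 30.0)]  # hypothetical landmarks, mm
    tracked = [transform(math.radians(10), 5.0, -2.0, p) for p in model]
    tracked[1] = (tracked[1][0] + 0.4, tracked[1][1])  # simulate 0.4 mm of tracking noise
    fit = register_rigid_2d(model, tracked)
    err = rms_error_mm(*fit, model, tracked)
    print(f"RMS error {err:.2f} mm ->", "OK" if err <= TOLERANCE_MM else "reject")
```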

Drug Discovery

VR molecular visualization shortens drug discovery timelines by giving medicinal chemists an immersive 3D environment to design and test drug-protein interactions in real time, replacing flat 2D screens and enabling cross-location collaboration that conventional tools cannot replicate.

The core problem XR solves in drug discovery is spatial. Chemists working from 2D representations are making three-dimensional judgments about bond angles, steric clashes, and binding dynamics without being able to inhabit the structure. VR changes that: researchers walk around molecules at room scale, reach inside them, and co-design with remote colleagues from the same virtual viewpoint. Platforms like Nanome connect directly to simulation and informatics tools, mapping live data onto the structure during the session.
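The spatial judgments described above reduce to geometry that visualization tools compute under the hood. The code below is an illustrative sketch, not Nanome's implementation. It flags steric clashes as van der Waals overlaps and measures a bond angle from atomic coordinates. The Bondi radii are standard published values. The 0.4 Å overlap cutoff and the toy coordinates are assumptions chosen for the example; real tools use their own cutoffs and usually exclude bonded atom pairs.

```python
import math

# Bondi van der Waals radii in angstroms for a few common elements.
VDW_RADII = {"H": 1.20, "C": 1.70, "N": 1.55, "O": 1.52, "S": 1.80}

Atom = tuple[str, tuple[float, float, float]]


def clashes(ligand: list[Atom], protein: list[Atom], overlap_cutoff: float = 0.4) -> list[tuple[int, int, float]]:
    """(ligand index, protein index, overlap in angstroms) for pairs overlapping beyond the cutoff."""
    hits = []
    for i, (el_a, pos_a) in enumerate(ligand):
        for j, (el_b, pos_b) in enumerate(protein):
            overlap = VDW_RADII[el_a] + VDW_RADII[el_b] - math.dist(pos_a, pos_b)
            if overlap > overlap_cutoff:
                hits.append((i, j, overlap))
    return hits


def bond_angle_deg(a, b, c) -> float:
    """Angle ABC in degrees, with B as the vertex atom."""
    v1 = [a[k] - b[k] for k in range(3)]
    v2 = [c[k] - b[k] for k in range(3)]
    cos_t = sum(x * y for x, y in zip(v1, v2)) / (math.hypot(*v1) * math.hypot(*v2))
    return math.degrees(math.acos(max(-1.0, min(1.0, cos_t))))  # clamp rounding noise


if __name__ == "__main__":
    ligand = [("C", (0.0, 0.0, 0.0))]  # hypothetical coordinates
    pocket = [("O", (2.5, 0.0, 0.0)), ("O", (3.0, 0.0, 0.0))]
    print(clashes(ligand, pocket))  # only the closer oxygen clashes
```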

Beyond molecular design, XR applies across the pipeline:

VR improves clinical trial participant comprehension before consent

AR overlays process instructions onto manufacturing equipment to reduce costly operator errors

XR simulates production environments for regulatory compliance training without disrupting live lines, a growing practice across pharmaceutical manufacturers

With drug development averaging $515 million per approved drug when factoring in the cost of failures, and timelines of 10 to 15 years per compound, any technology that reliably compresses timelines or reduces failure rates delivers a return that justifies serious investment.
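The point about failures can be made explicit. The cost per approved drug equals the expected spend per candidate divided by the probability that a candidate is approved, so improving any phase's success rate lowers the cost of every approval. The phase costs and probabilities below are hypothetical round numbers for illustration only; they are not the figures behind the $515 million estimate.

```python
def cost_per_approval(phases: list[tuple[str, float, float]]) -> float:
    """Expected spend per candidate divided by the overall probability of approval.

    Each phase is (name, cost of running the phase, probability of passing it).
    """
    reach = 1.0  # probability that a candidate reaches the current phase
    expected_spend = 0.0
    for _name, cost, p_pass in phases:
        expected_spend += reach * cost  # only candidates that reach a phase incur its cost
        reach *= p_pass
    return expected_spend / reach  # reach is now the overall approval probability


if __name__ == "__main__":
    # Hypothetical pipeline: (phase, cost in $M, probability of passing).
    baseline = [("Phase I", 4.0, 0.6), ("Phase II", 13.0, 0.3), ("Phase III", 20.0, 0.6)]
    better = [("Phase I", 4.0, 0.6), ("Phase II", 13.0, 0.36), ("Phase III", 20.0, 0.6)]
    print(f"{cost_per_approval(baseline):.1f} -> {cost_per_approval(better):.1f} $M per approval")
```

With these made-up inputs, raising Phase II success from 30% to 36% lowers the cost per approval from about $143 million to about $124 million, even though more candidates now incur Phase III costs.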

Remote Emergency Support and Surgical Telementoring

AR and MR close the specialist access gap in emergency medicine by transmitting a live view of the surgical or emergency field to a remote expert who can annotate, draw, and guide in real time over the same shared visual space.

Applications include AR-assisted emergency triage in field medicine, remote guidance for minimally invasive procedures in low-resource settings, and specialist telementoring of surgical residents during complex cases. The COVID-19 era demonstrated this at scale: MR collaboration tools were used to extend specialist access across institutions without physical travel.
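A key design choice in shared-view telementoring is where annotations live. If a remote expert's drawing is stored in screen pixels, it slides off the anatomy as soon as the local clinician moves their head. Anchoring it in a shared spatial frame keeps it attached to the structure it marks. The message format below is a hypothetical schema sketched for illustration, not any vendor's protocol; the field names, default color, and 30-second lifetime are assumptions.

```python
import json
from dataclasses import dataclass, asdict


@dataclass
class Annotation:
    """A remote expert's stroke, anchored in a shared 3D coordinate frame (hypothetical schema)."""
    author: str
    frame_id: str                               # shared anchor, e.g. a registered patient frame
    points_m: list[tuple[float, float, float]]  # stroke vertices in metres, in frame_id coordinates
    color: str = "#FFD700"
    ttl_s: float = 30.0                         # how long the local headset keeps showing it

    def to_json(self) -> str:
        return json.dumps(asdict(self))

    @staticmethod
    def from_json(text: str) -> "Annotation":
        data = json.loads(text)
        data["points_m"] = [tuple(p) for p in data["points_m"]]  # JSON arrays back to tuples
        return Annotation(**data)


if __name__ == "__main__":
    stroke = Annotation("remote-surgeon", "patient-anchor", [(0.10, 0.20, 0.30), (0.11, 0.20, 0.30)])
    print(stroke.to_json())
```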

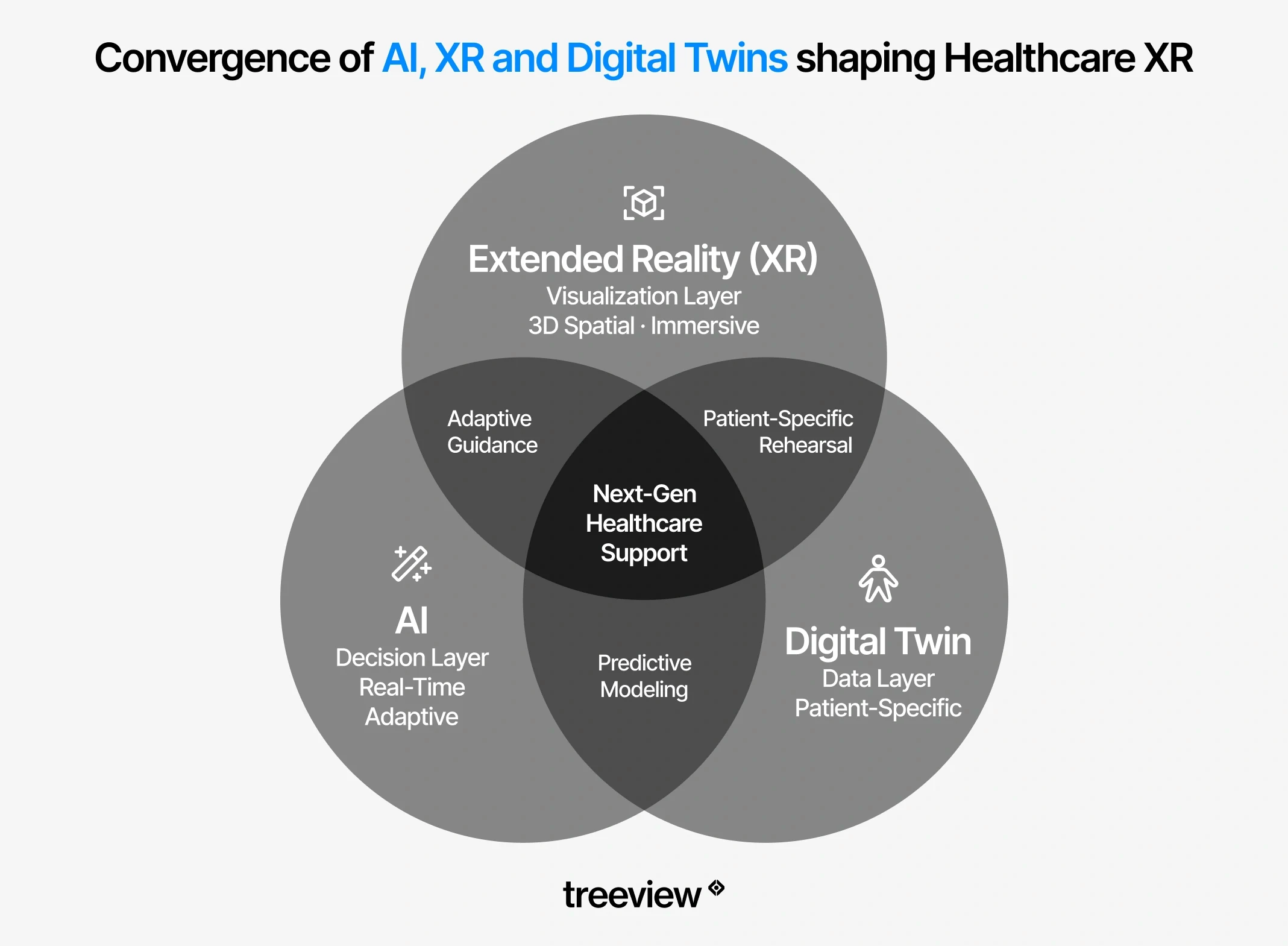

Digital Twins in Healthcare

Digital twins use XR as the visualization and interaction layer, rendering patient-specific models in AR or VR so clinicians can examine, manipulate, and rehearse procedures on data that reflects the actual patient rather than a generic anatomical reference. As new data arrive from the physical counterpart, such as fresh imaging or monitoring streams, the model can be updated, enabling surgical rehearsal, hospital simulation, and continuous patient monitoring that static imaging alone cannot support.

In surgical planning, patient-specific human digital twins derived from CT and MRI data allow surgeons to rehearse a procedure on an accurate 3D model of that specific patient's anatomy before the first incision. This goes beyond generic anatomy education into patient-specific planning: the surgeon is rehearsing on this patient's vascular layout, this patient's bone geometry, this patient's anatomical variants.

In hospital operations, digital twins of facilities allow administrators to simulate patient flow, optimize ward layouts, and run emergency preparedness exercises in a virtual copy of the actual building. In clinical trials and patient monitoring, digital twins enable continuous, personalized simulation of a patient's physiological state, supporting drug dosage decisions and early detection of deterioration.
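A full physiological twin is far more elaborate, but the "personalized" part of continuous monitoring can be illustrated with a small sketch. The twin learns a running baseline for one patient's vital sign and flags readings that deviate sharply from that patient's own history rather than from a population norm. The class, the Welford running-variance update, the warm-up length, and the 3-standard-deviation limit are illustrative choices, not a clinical early-warning score.

```python
import math


class VitalSignTwin:
    """Toy twin for one vital sign: learns a personal baseline and flags large deviations.

    Uses Welford's online algorithm for the running mean and variance. The warm-up
    length and z-score limit are illustrative assumptions, not clinical thresholds.
    """

    def __init__(self, warmup: int = 5, z_limit: float = 3.0) -> None:
        self.n = 0
        self.mean = 0.0
        self.m2 = 0.0  # running sum of squared deviations from the mean
        self.warmup = warmup
        self.z_limit = z_limit

    def observe(self, value: float) -> bool:
        """Return True if value deviates sharply from this patient's own baseline."""
        flagged = False
        if self.n >= self.warmup:
            sd = math.sqrt(self.m2 / (self.n - 1))
            flagged = sd > 0 and abs(value - self.mean) / sd > self.z_limit
        if not flagged:
            # Only unflagged readings update the baseline (Welford step).
            self.n += 1
            delta = value - self.mean
            self.mean += delta / self.n
            self.m2 += delta * (value - self.mean)
        return flagged


if __name__ == "__main__":
    twin = VitalSignTwin()
    for hr in [70, 72, 71, 69, 70, 71, 70, 72, 71, 70]:  # hypothetical heart rates, bpm
        twin.observe(hr)
    print(twin.observe(110))  # far outside this patient's baseline
```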

The convergence of XR and digital twin technology brings the immersive visualization layer and the accurate real-world model together. An AR overlay anchored to a patient-specific digital twin during surgery is more precise than an overlay anchored to a generic anatomical model, because the underlying data reflects that patient's actual anatomy.

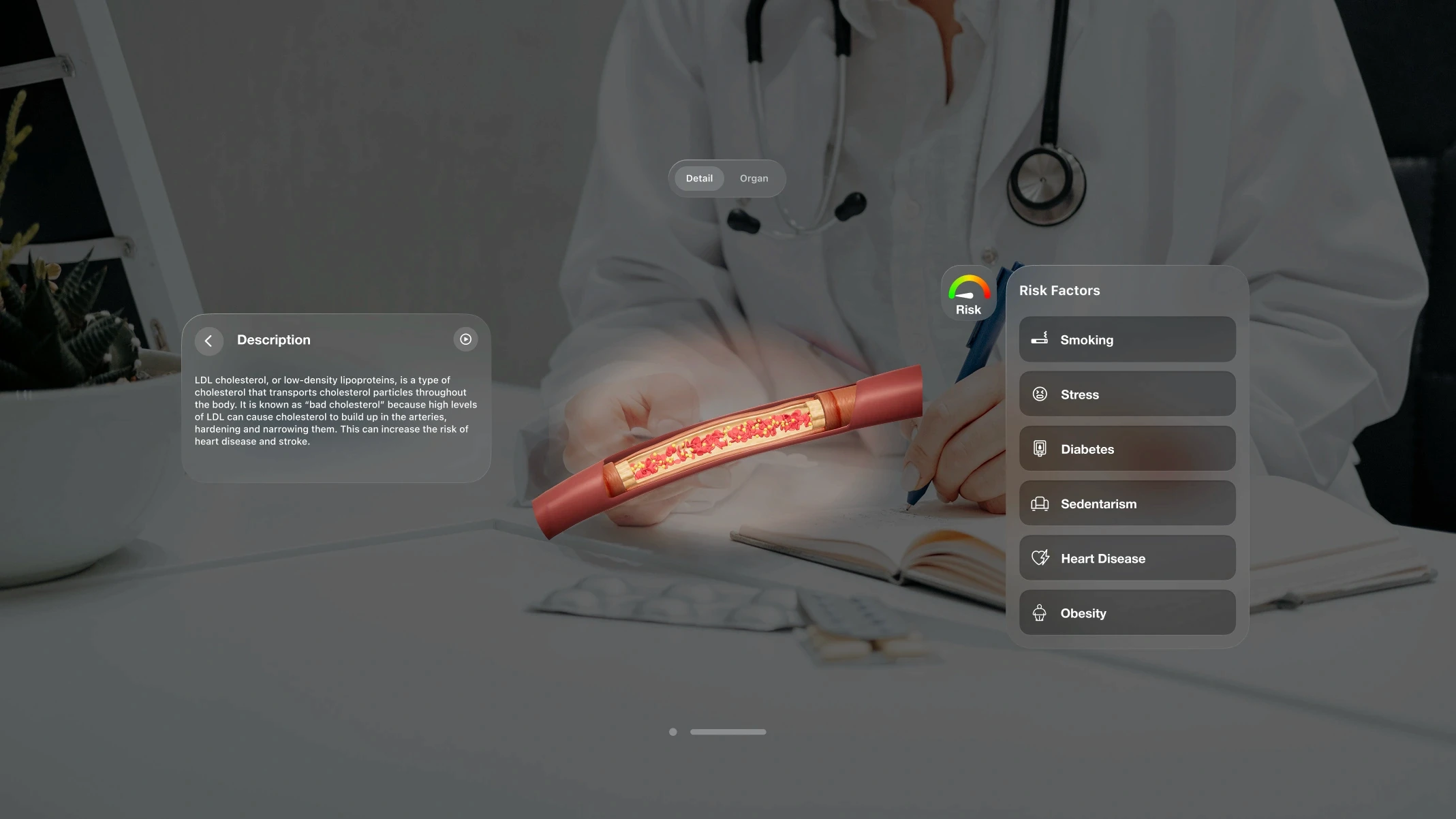

Patient Education and Informed Consent

VR for patient education reduces pre-procedure anxiety, improves informed consent comprehension, and increases treatment compliance. Full immersion places the patient inside a simulation of their own procedure or condition, producing an experiential understanding that a brochure or verbal explanation cannot match.

The diagnostic side of patient-facing VR is less discussed but equally promising. VisionWEARx, a platform Treeview built, uses VR to assist in the diagnosis of learning and neurodevelopmental disorders. The premise is that a controlled, instrumented VR environment can surface behavioral and perceptual patterns that standard clinical assessments miss or require multiple sessions to identify. It is an example of VR being used not to educate or treat, but to observe: generating clinical insight rather than delivering it.

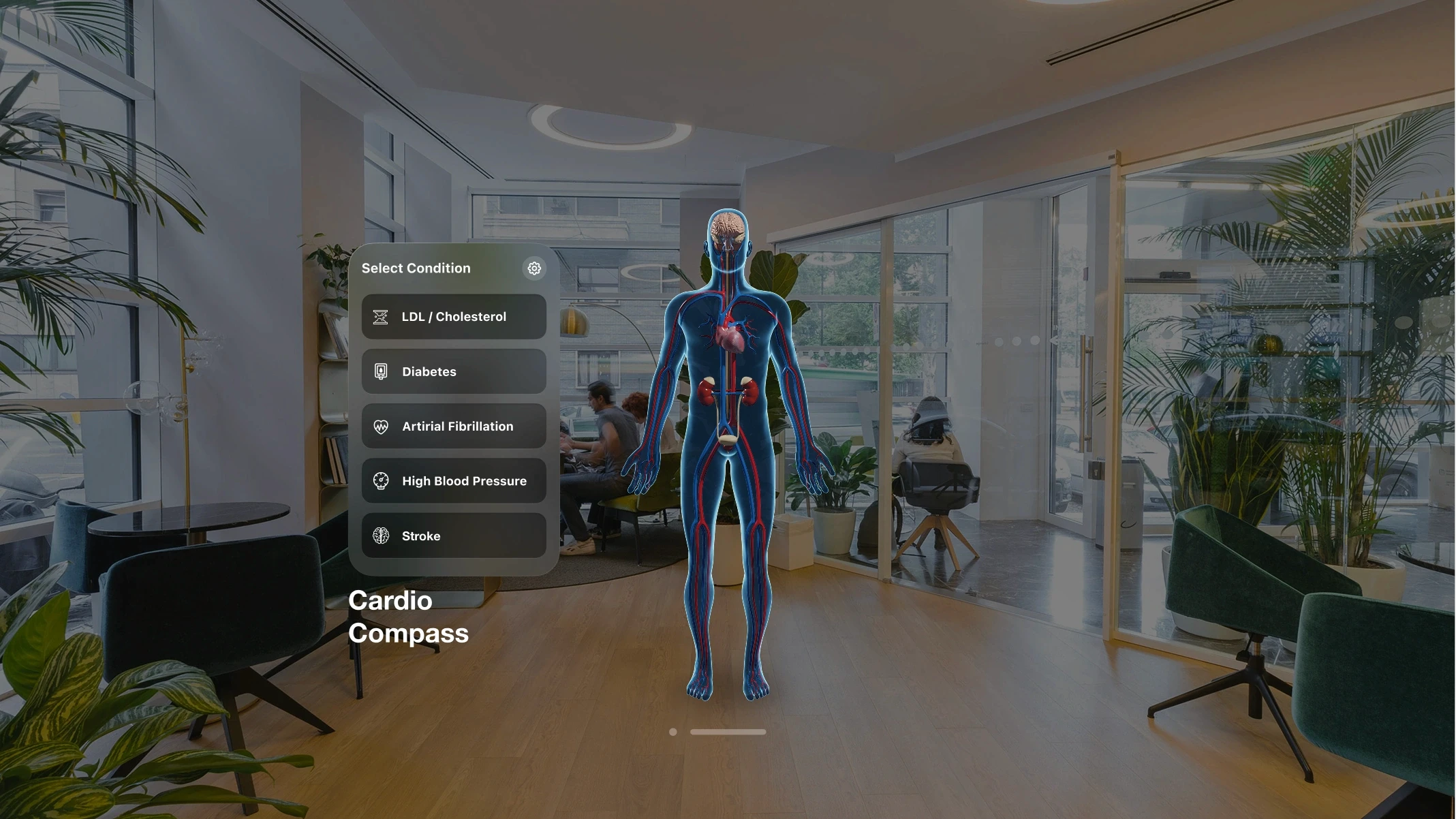

Patient education also extends beyond procedural preparation into chronic disease management. CardioCompass, built by Treeview for Daiichi Sankyo and available on iOS, Android, Meta Quest, Apple Vision Pro, and WebGL, helps patients understand how lifestyle choices affect LDL cholesterol, diabetes, atrial fibrillation, stroke, and hypertension. It gives clinicians and patients a shared visual language for conversations that are notoriously hard to have.

Abbott and Blood Centers of America deployed a mixed reality blood donation experience to engage first-time donors. The MR experience immerses donors in an interactive environment designed to reduce the anxiety and hesitation that prevent first-time donors from completing a donation. It is an example of XR being applied to patient and public engagement beyond the clinic, at the point of behavioral change that determines whether a person enters the healthcare system at all.

Robotic Surgery and Teleoperation

XR for robotic surgery and teleoperation is an emerging category where MR overlays improve surgeon control and situational awareness during robot-assisted procedures, and VR interfaces are being explored for full teleoperation, placing a surgeon in an immersive environment that mirrors the robot's physical viewpoint at a remote location.

The limitations of current robotic surgery systems are partly spatial: surgeons operate from bulky consoles that reduce their awareness of the patient and limit the range of implementation scenarios. MR is being developed as an alternative control interface.

One research group demonstrated a HoloLens 2-based teleoperation system for the da Vinci Research Kit that uses hand gestures, head motion tracking, and speech commands to control the robot from within a mixed reality environment. For endoscope navigation tasks, it performed comparably to manipulator-based teleoperation; instrument teleoperation was identified as the area needing further work, particularly in gesture recognition and video display quality.
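Gesture-based control of this kind typically needs two safeguards borrowed from console teleoperation. Motion scaling turns large hand movements into fine instrument movements, and a clutch lets the operator reposition their hands without moving the robot. The sketch below illustrates both, plus a per-update step cap. The class, the 3:1 default ratio, and the 2 mm cap are assumptions for illustration and are not taken from the da Vinci Research Kit or the HoloLens 2 study.

```python
from dataclasses import dataclass

Vec3 = tuple[float, float, float]


@dataclass
class TeleopMapper:
    """Maps hand-tracking displacements to instrument-tip displacements (hypothetical sketch)."""
    scale: float = 3.0        # 3:1 means 3 mm of hand motion -> 1 mm at the instrument
    max_step_mm: float = 2.0  # per-update cap so a tracking glitch cannot cause a large jump
    engaged: bool = False     # clutch: hand motion is ignored while disengaged

    def step(self, hand_delta_mm: Vec3) -> Vec3:
        if not self.engaged:
            return (0.0, 0.0, 0.0)
        scaled = [d / self.scale for d in hand_delta_mm]
        norm = sum(c * c for c in scaled) ** 0.5
        if norm > self.max_step_mm:
            # Keep the direction but shrink the step to the cap.
            scaled = [c * self.max_step_mm / norm for c in scaled]
        return tuple(scaled)


if __name__ == "__main__":
    mapper = TeleopMapper()
    print(mapper.step((3.0, 0.0, 0.0)))   # clutch open: no motion
    mapper.engaged = True
    print(mapper.step((3.0, 0.0, 0.0)))   # scaled to 1 mm
    print(mapper.step((30.0, 0.0, 0.0)))  # capped at 2 mm
```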

For XR specifically, MR overlays are being integrated at two points in the robotic surgery workflow. Preoperatively, patient-specific anatomy rendered in MR gives surgeons a spatial walkthrough of the operative site before positioning the robot.

Intraoperatively, MR overlays provide real-time anatomical context within the surgeon's field of view during the procedure, reducing reliance on 2D monitor feeds and improving spatial awareness of instrument position relative to critical structures. Intuitive Surgical, Medtronic's Hugo RAS system, and research programs at institutions including Johns Hopkins and Duke University are actively developing XR interfaces for robotic surgery.

The core challenge remains latency: teleoperation requires low, predictable delay between the surgeon's input and the robot's response, and current network infrastructure does not reliably provide this outside controlled environments. As 5G and edge computing infrastructure matures, remote robotic surgery with XR guidance is expected to move from landmark demonstrations toward routine clinical deployment.
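Physics sets a floor here that no network upgrade removes. Light in optical fiber travels at roughly 200,000 km/s, so propagation alone adds about 1 ms of round-trip delay per 100 km of fiber before any encoding, routing, or display time. The sketch below computes that floor and adds a processing budget. The component names and millisecond values in the example are hypothetical, not measurements from any system.

```python
# Light in optical fiber travels at roughly 200,000 km/s (about two-thirds of c).
FIBER_SPEED_KM_PER_MS = 200.0


def min_round_trip_ms(distance_km: float) -> float:
    """Lower bound on round-trip time from propagation alone (no routing, coding, or processing)."""
    return 2.0 * distance_km / FIBER_SPEED_KM_PER_MS


def total_latency_ms(distance_km: float, processing_ms: dict[str, float]) -> float:
    """Propagation floor plus every processing stage in the loop."""
    return min_round_trip_ms(distance_km) + sum(processing_ms.values())


if __name__ == "__main__":
    # Hypothetical processing budget for a video-guided teleoperation loop.
    budget = {"video encode": 15.0, "video decode and display": 20.0, "robot control": 5.0}
    for km in (100, 1000, 4000):
        print(f"{km} km: floor {min_round_trip_ms(km):.0f} ms, total {total_latency_ms(km, budget):.0f} ms")
```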

Does XR Work in Healthcare? The Evidence

The evidence for XR in healthcare is strong for VR surgical training, virtual reality pain management, virtual reality stroke rehabilitation and virtual reality therapy for anxiety and PTSD. For other applications, evidence is still developing.

| Application | Evidence Level | Key Finding | Source |
|---|---|---|---|
| VR surgical training | Strong (multiple RCTs) | 2.5M active users; validated in 30+ peer-reviewed publications; 100+ US residency programs | Touch Surgery / Medtronic; PMC 2024 |
| Virtual reality pain management | Strong (consistent across studies) | Significant pain reduction in burn care, chronic back pain, procedural pain | ITIF 2025; PMC 2024 |
| VR stroke rehabilitation | Strong (55 RCTs + 2025 network meta-analysis) | Statistically significant gains in upper limb motor function vs. conventional therapy; fully immersive VR produced the greatest gains in gross motor function | BMC Med Informatics 2024; Frontiers in Neurology 2025 |
| Virtual reality therapy for anxiety, phobia and PTSD | Strong (decades of RCTs) | Exposure therapy via VR shows significant effect sizes across meta-analyses | PMC 2024 |
| VR workforce training (general soft skills) | Strong (large enterprise study; non-clinical) | 4x faster completion; 275% more confident vs. classroom | PwC 2022 |
| AR intraoperative guidance | Strong (growing body) | AR reduced pedicle screw errors from 8.3% to 2%; 50% faster spinal screw placement | Frontiers in Surgery 2026; PMC 2024 |
| AR/VR surgical planning | Strong (30 studies, 1,270 participants) | VR prompted plan modifications in 32 to 52% of cases; AR reduced placement errors to 2% vs. 8.3% in controls | Frontiers in Surgery 2026 |
| Virtual reality anatomy education | Promising (early studies) | Measurable learning outcome improvements; design heterogeneity limits conclusions | Anat Res Int 2025 |
| Digital twin surgical planning | Emerging | Patient-specific rehearsal validated in neurosurgery; broader RCT data limited | PMC 2024 |
| Patient education / consent | Emerging | Positive anxiety reduction and comprehension signals; outcome data limited | PMC 2024 |

Where the evidence is strongest

VR pain management, surgical training, and neurological rehabilitation are backed by multiple RCTs and systematic reviews. The evidence for exposure therapy in mental health conditions predates VR and has been successfully transferred to immersive virtual environments. For workforce training, PwC's enterprise VR study remains the most-cited benchmark, though it covers general soft skills training rather than clinical-specific tasks, an important distinction to maintain when presenting these numbers in a healthcare context.

Where the biggest gaps remain

A major systematic review identifies the most significant evidence gaps as implementation models, user acceptance research, and empirical evaluation of long-term patient outcomes. Benefits observed in controlled research settings do not automatically transfer to real-world clinical practice. Most published studies measure efficacy under ideal conditions rather than effectiveness in routine clinical deployment.

For specific applications, XR in healthcare has moved well past proof-of-concept. For others, it is still in structured pilot territory. The difference matters when making purchasing and deployment decisions.

Top AR/VR/XR Companies in Healthcare

The top VR and AR companies in healthcare include Medtronic's Touch Surgery, Surgical Theater, Visage Imaging, Nanome, Transfr, CellWalk and Treeview. The ecosystem spans dedicated medical XR platforms, hardware manufacturers and custom development studios.

Dedicated Medical XR Platforms

Touch Surgery is Medtronic Digital Surgery's flagship platform and one of the most comprehensive surgical training and analytics ecosystems in the world. It is composed of four integrated products that span pre-operative training through post-operative analysis. These include Touch Surgery Live Stream, a secure real-time surgical broadcasting service that creates a virtual learning environment, giving remote surgeons direct visibility into expert technique without requiring physical presence in the operating room.

Surgical Theater builds AR-based surgical planning and navigation software used in neurosurgery and complex cardiovascular cases. It allows surgeons to rehearse a specific patient's procedure using that patient's own imaging data before entering the operating room, combining preoperative simulation with intraoperative AR guidance.

Visage Imaging is a radiology imaging platform that has extended its PACS (Picture Archiving and Communication System) to Apple Vision Pro, enabling hands-free, immersive review of CT, MRI, and other diagnostic imaging in spatial computing environments. It is directly relevant to pre-operative planning workflows where surgeons need to examine patient imaging before entering the operating room.

Nanome provides a VR molecular visualization platform used in drug discovery and pharmaceutical research. Running on Meta Quest, Nanome is used in place of flat-screen molecule design at research charities and pharmaceutical institutions, with the aim of accelerating collaborative design and shortening lead optimization timelines.

Transfr offers a VR Health Science training product that simulates clinical environments for healthcare career training. The platform places learners inside a virtual healthcare clinic to practice patient interactions, clinical assessments, and procedural skills, targeting healthcare workforce development programs in secondary education and vocational training settings.

CellWalk is an interactive 3D cellular biology platform for iPad and Apple Vision Pro that lets students explore bacterial and molecular structures at nanoscale in spatial computing environments. Used in medical and science education, it represents a category of immersive anatomy and biology tools that extend beyond human anatomy atlases into cellular and molecular visualization.

Development Studios Building Custom Healthcare XR

Organizations with specific clinical workflows often require custom applications. Generic training platforms cannot replicate a proprietary device, a specific clinical protocol, or a particular facility's layout.

On the custom XR development side, Treeview works with medical device companies, pharma, and healthcare startups building applications that off-the-shelf platforms cannot cover. Projects span surgical training simulations, AR therapy awareness tools, cardiovascular patient education, and clinical diagnostics, deployed across Meta Quest, HoloLens, Apple Vision Pro, Samsung Galaxy XR, iOS, Android and WebGL.

Healthcare projects include the Micra XR Trainer and XRverse for Medtronic MCXC; CardioCompass, a multi-platform cardiovascular health simulation for Daiichi Sankyo; VisionWEARx, a VR diagnostic platform for learning and neurodevelopmental disorders; and Inviewer, a spatial science simulation library available for Apple Vision Pro.

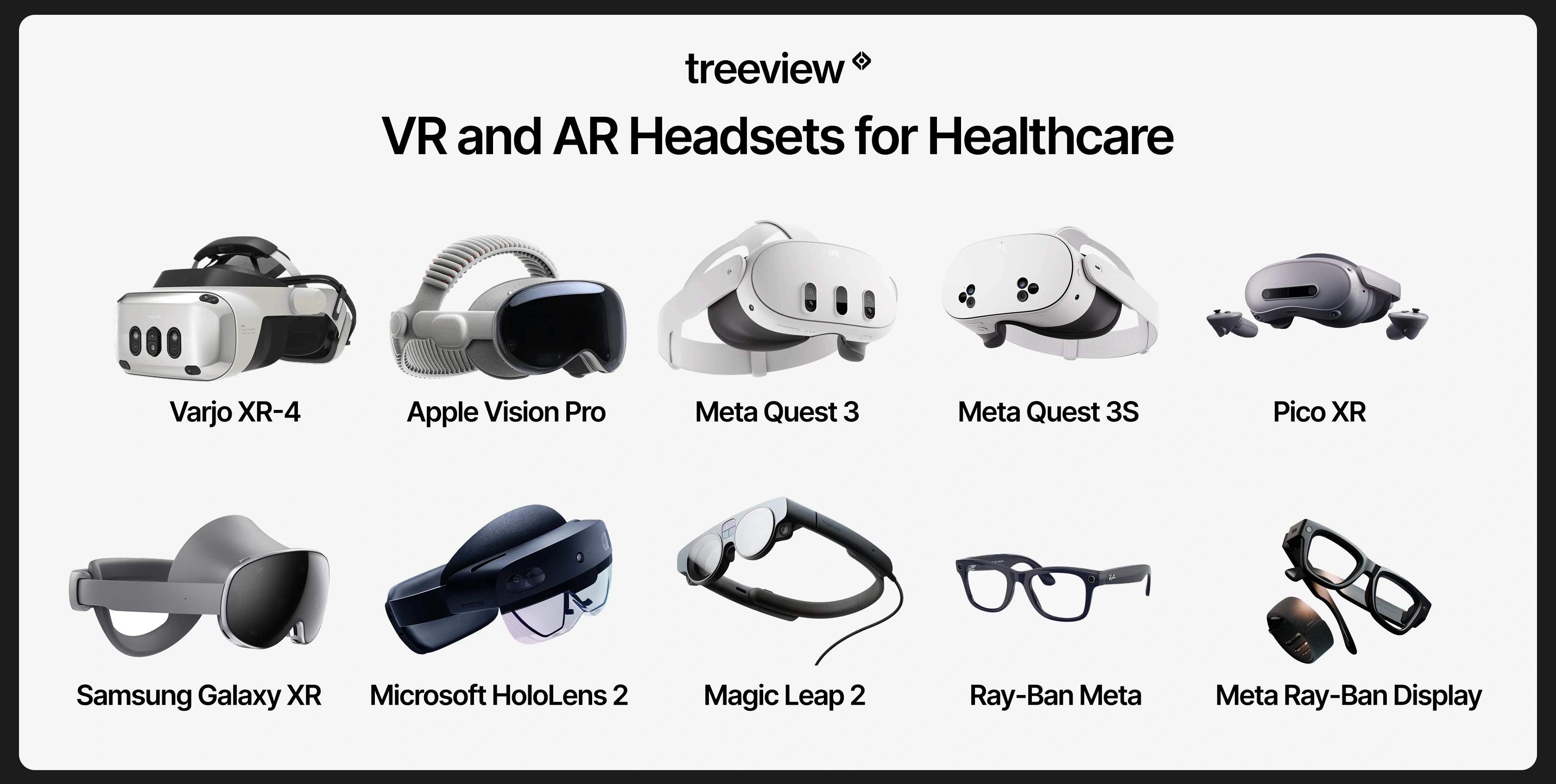

VR and AR Headsets for Healthcare

Hardware selection is one of the first practical decisions in any healthcare XR deployment. The choice affects total cost of ownership, the available software ecosystem for clinical XR headsets, and which clinical use cases are actually feasible. For most clinical training deployments, standalone headsets win on adoption.

Headset Comparison

| Headset | Type | Best For | Key Strength | Limitation |
|---|---|---|---|---|
| Meta Quest 3 | Standalone VR/MR | Training at scale, rehabilitation, patient education | Standalone, mature ecosystem, MR passthrough, widest software library (10,000+ apps) | Not rated for sterile environments out of the box |
| Meta Quest 3S | Standalone VR/MR | High-volume training deployments, budget-conscious programs | $299 price point; #1 selling console on Amazon US in Q4 2024 | Lower-resolution displays than Quest 3 |
| Apple Vision Pro | Standalone spatial computer (tethered battery pack) | Precision imaging review, spatial radiology, high-fidelity simulation | Highest display fidelity, eye/hand tracking; 75% enterprise buyer share; 50+ Fortune 100 orgs | Production scaled back in 2025; 85K units shipped; high cost |
| Microsoft HoloLens 2 | Standalone AR/MR | Intraoperative guidance, hands-free clinical workflows, remote collaboration | Hands-free optical AR, spatial anchoring, validated in surgical and clinical settings | Discontinued for new orders; limited software updates going forward |
| Magic Leap 2 | Standalone AR (optical see-through) | Safety-critical surgical environments, sterile field AR overlays | Optical see-through with dimming, designed for clinical and industrial environments | Smaller software ecosystem, higher cost than video passthrough alternatives |
| Samsung Galaxy XR | Standalone VR/MR | AI-assisted clinical workflows, patient engagement | Android XR OS; Google AI integration; first viable third platform option alongside Meta and Apple | Early-adopter territory; limited healthcare software ecosystem at launch |
| Varjo XR-4 | Tethered VR/XR (PC) | High-fidelity surgical simulation, defense and aviation-grade medical training | 51 PPD display resolution, 200 Hz eye tracking; highest visual fidelity commercially available | $3,990–$9,990 + $2,500/device/year subscription; requires high-end PC |
| PICO 4 Enterprise | Standalone VR | Clinical trials, rehabilitation, EU and Asia deployments; enterprise after Meta's exit | 46% China VR market share; enterprise MDM support; Meta's Feb 2026 enterprise exit creates an opening | Smaller global software ecosystem than Meta Quest |
| Meta Ray-Ban Display | Smart glasses (display + audio) | Heads-up information display, bedside workflows, remote consult | Wearable display in glasses form factor; part of Meta's 9M+ smart glasses shipped lifetime, including Ray-Ban Meta and Ray-Ban Stories | Small display FOV; not suitable for complex AR overlays |
| Ray-Ban Meta Gen 2 | Smart glasses (AI + audio/camera, no display) | AI-assisted workflows, hands-free documentation, remote observation | 6.5M units in 2025 (+225% YoY); best-selling AI smart glasses in the world; Meta AI integration | No display; limited to audio output and camera capture |

Standalone vs. Tethered

Meta Quest is the dominant standalone platform, with 26.76 million lifetime units and 53% of the standalone headset market. The Quest 3S at $299 was the top-selling console on Amazon US in Q4 2024, making fleet deployment at clinical scale more financially viable than at any previous point. Standalone headsets lower the friction of actual use, which translates directly into adoption.

PICO holds approximately 46% of the China VR market and is the dominant standalone headset across much of Europe and Asia, including Germany, France, Japan, and Singapore. For healthcare organizations in those markets, PICO is the functional equivalent of Meta Quest. Meta's February 2026 enterprise exit has further strengthened PICO's international position.

Tethered headsets connect to a high-performance PC and deliver higher visual fidelity. For applications where visual precision is critical, such as high-resolution surgical simulation, tethered headsets remain relevant. Varjo's 51 PPD (pixels per degree) angular resolution and 200 Hz eye tracking represent the current ceiling of commercial XR display technology.

Smart Glasses as an emerging category

Smart glasses shipped 7.25 million units in 2025, representing 50% of total XR volume for the first time. Ray-Ban Meta Gen 2 alone shipped 6.5 million units, up 225% year over year, making it the best-selling AI smart glasses product in the world.

The revenue composition inside Meta Reality Labs has fundamentally shifted: in 2021 Quest hardware generated roughly $1.85 billion while smart glasses contributed $45 million. By 2025 those positions had reversed, with Quest hardware at $660 million and smart glasses at $2.15 billion. For healthcare, the practical implication is that Meta's development investment is likely moving toward smart glasses rather than headsets alone.

Most current smart glasses have not yet reached the fidelity required for demanding clinical AR applications, but the trajectory and the volume make the category impossible to ignore. Early validated clinical use already exists: a 2025 peer-reviewed study at Adventist Health White Memorial Hospital and USC integrated Ray-Ban Meta smart glasses into a sterile surgical workflow for limb preservation surgery, and AI-augmented smart glasses deployments have produced measurable reductions in procedure times and surgical errors across hospital networks. The Future of XR in Healthcare section below covers these applications in more depth.

XR Healthcare Regulations and Insurance Reimbursement

Most XR healthcare applications are not yet reimbursable. Virtual reality therapy received its own CPT billing code in 2022, and FDA clearance is required for products that make clinical claims.

Reimbursement in the United States

In 2022, the American Medical Association approved the first CPT code specifically for virtual reality therapy. The Centers for Medicare and Medicaid Services has begun valuing specific VR therapeutic applications. RelieVRx, now FDA-authorized as the first in-home VR treatment for chronic lower back pain, was the first immersive therapeutic to receive CMS valuation, establishing a meaningful precedent for other VR therapy products seeking reimbursement.

Reimbursement still covers a narrow set of validated applications. The majority of VR and AR uses in clinical settings are not yet reimbursable, which means costs are absorbed by the hospital, the training program or the patient directly. A scoping review found that the lack of insurance reimbursement was cited among the barriers to systemic adoption, though it appeared less frequently than operational and organizational factors.

Reimbursement Outside the United States

Germany has the most advanced XR reimbursement framework outside the US. The DiGA (Digitale Gesundheitsanwendungen) fast-track pathway, established in 2020 under the Digital Healthcare Act (Digitale-Versorgung-Gesetz, DVG), allows digital health applications including VR therapeutics to receive statutory health insurance reimbursement after demonstrating positive care effects. Several VR-based digital therapeutics have already been listed on the DiGA directory, making Germany a leading market for reimbursed XR therapy in Europe.

In the United Kingdom, NHS England has run active XR pilots across rehabilitation, surgical training, and mental health, and the NICE evidence framework is beginning to accommodate digital therapeutics. Japan's Ministry of Health, Labour and Welfare (MHLW) has approved digital therapeutics under its SaMD framework, and Singapore's Health Sciences Authority (HSA) applies a risk-based classification system for software medical devices that parallels FDA SaMD pathways. Canada's Health Canada regulates XR medical devices under its Medical Devices Regulations, with requirements broadly similar to FDA SaMD classification.

FDA Regulation

The FDA classifies certain VR and AR healthcare applications as Software as a Medical Device (SaMD). Products making clinical claims, for example that a VR application reduces pain scores or assists surgical navigation, require FDA clearance or approval. The FDA has an active Medical Extended Reality program, and its April 2024 announcement on using virtual and augmented reality for home-based healthcare represents a further signal of regulatory engagement with the category.

For organizations evaluating off-the-shelf products, FDA clearance status is a critical due diligence question. For organizations building custom applications, the regulatory classification of the intended use determines whether FDA engagement is required before deployment.

Regulation Outside the United States

In the European Union, XR healthcare applications are regulated under the EU Medical Device Regulation (MDR 2017/745) and the In Vitro Diagnostic Regulation (IVDR). Software as a Medical Device is classified under the MDR's risk-based framework, with CE marking required before distribution across EU member states. Germany, France, and the Netherlands are among the most active EU markets for clinical XR adoption.

In the United Kingdom, the Medicines and Healthcare products Regulatory Agency (MHRA) regulates medical software under a framework aligned with but independent from the EU MDR post-Brexit. Japan's Pharmaceuticals and Medical Devices Agency (PMDA) has a dedicated digital health review pathway. Singapore's Health Sciences Authority (HSA) and Australia's Therapeutic Goods Administration (TGA) both apply risk-based SaMD classification consistent with the International Medical Device Regulators Forum (IMDRF) framework that underpins most national digital health regulation globally.

How to Implement XR in Healthcare: Common Challenges and Pitfalls

Most healthcare XR deployments that fail do so for operational reasons, not technical ones: headset management overhead, lack of clinical buy-in, poor EHR integration, and cost models that were never stress-tested. The pattern is consistent enough that these questions need to be on the table at the first scoping conversation, before any development begins.

One recurring dynamic worth naming: healthcare buyers who come from innovation teams tend to understand technology capabilities more quickly. Procurement and administrative buyers often need to see working examples before the scope of what is possible becomes concrete. Without demos or case studies that match the clinical context, early conversations stay abstract for too long on both sides.

The Hardware Maintenance Problem

Shared VR headsets in clinical environments need cleaning protocols, replacement accessories, firmware updates, and storage solutions. A hospital that deploys 20 headsets for a training program quickly discovers that headset management is its own ongoing operational task. This overhead is rarely factored into early-stage cost models.

Recommendation: Assign a named owner for headset fleet management before go-live, build replacement accessory costs into the procurement budget, and evaluate MDM (Mobile Device Management) platforms such as ArborXR that allow remote firmware updates, content deployment, and usage tracking across a fleet without requiring physical device access.
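Much of the day-to-day work of a fleet owner is bookkeeping that an MDM platform or a simple in-house inventory can automate. The sketch below assumes such an inventory; the `Headset` fields, the deep-clean interval, the firmware target, and the facial-interface replacement threshold are all hypothetical placeholders, and nothing here calls ArborXR or any other MDM product's API.

```python
from dataclasses import dataclass
from datetime import date, timedelta

# Hypothetical policy values: replace with your own infection-control and IT rules.
DEEP_CLEAN_INTERVAL = timedelta(days=1)  # in addition to wipe-downs between users
TARGET_FIRMWARE = (74, 0)                # hypothetical minimum firmware version
MAX_INTERFACE_USES = 200                 # sessions before replacing the face cushion


@dataclass
class Headset:
    asset_tag: str
    firmware: tuple[int, int]
    last_deep_clean: date
    interface_uses: int  # sessions since the facial interface was last replaced


def due_actions(fleet: list[Headset], today: date) -> dict[str, list[str]]:
    """Return the maintenance actions that are due, keyed by asset tag."""
    due: dict[str, list[str]] = {}
    for h in fleet:
        actions = []
        if h.firmware < TARGET_FIRMWARE:
            actions.append("update firmware")
        if today - h.last_deep_clean > DEEP_CLEAN_INTERVAL:
            actions.append("deep clean")
        if h.interface_uses >= MAX_INTERFACE_USES:
            actions.append("replace facial interface")
        if actions:
            due[h.asset_tag] = actions
    return due


fleet = [
    Headset("VR-01", (74, 0), date(2025, 3, 10), 12),
    Headset("VR-02", (72, 1), date(2025, 3, 8), 230),
]
print(due_actions(fleet, today=date(2025, 3, 10)))
# {'VR-02': ['update firmware', 'deep clean', 'replace facial interface']}
```

Running a check like this daily, and routing its output to the named fleet owner, turns maintenance from an ad hoc chore into a visible, budgetable task.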

Cybersickness and Patient Suitability

Not all patients can use VR comfortably. Cybersickness affects a meaningful percentage of users, particularly in older populations and those with certain neurological or vestibular conditions. Contraindications are real and must be built into clinical screening protocols for any patient-facing VR application. Ignoring this leads to adverse experiences and program abandonment.

Recommendation: Build a brief screening protocol before any patient-facing deployment: exclude patients with active vestibular disorders, recent seizures, or severe motion sensitivity. For high-risk populations such as elderly or neurologically impaired patients, start with shorter sessions under five minutes, use comfort settings that limit artificial locomotion, and increase duration only as tolerance is confirmed.
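The screening and ramp-up rules above can be written down as a small decision helper, which makes them easy to audit and apply consistently. This is a sketch, not a clinical protocol: the exclusion criteria and the under-five-minute start for high-risk patients come from the recommendation, the 15-minute ceiling echoes the 5 to 15 minute range cited for elderly patients in this guide, and the 10-minute standard start, the 2-minute step, and the step-down after a poorly tolerated session are hypothetical values a clinical team would set.

```python
from dataclasses import dataclass
from typing import Optional

SESSION_CAP_MIN = 15  # upper end of the 5-15 minute range cited for older patients


@dataclass
class Screening:
    active_vestibular_disorder: bool
    recent_seizure: bool
    severe_motion_sensitivity: bool
    high_risk: bool  # e.g. elderly or neurologically impaired


def first_session_minutes(s: Screening) -> Optional[int]:
    """Return a starting session length, or None if VR is contraindicated."""
    if s.active_vestibular_disorder or s.recent_seizure or s.severe_motion_sensitivity:
        return None
    # High-risk patients start under five minutes; 10 is a hypothetical default.
    return 4 if s.high_risk else 10


def next_session_minutes(current: int, tolerated: bool, step: int = 2) -> int:
    """Lengthen only after a tolerated session; shorten (hypothetical rule) if not."""
    if tolerated:
        return min(current + step, SESSION_CAP_MIN)
    return max(current - step, 1)


# A high-risk patient who tolerates two sessions, then reports symptoms.
minutes = first_session_minutes(Screening(False, False, False, high_risk=True))
for tolerated in (True, True, False):
    print(minutes)  # prints 4, then 6, then 8
    minutes = next_session_minutes(minutes, tolerated)
```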

Organizational Culture and Clinical Buy-In

Implementation research consistently identifies organizational culture as a key determinant of successful XR adoption. Clinicians who were not involved in evaluating or selecting a technology are less likely to use it, regardless of its quality. A scoping review found that staff attitude and motivation were among the most frequently cited facilitators of successful VR adoption in clinical settings. Decisions made at an administrative level, without clinical champion involvement, predictably underperform.

Recommendation: Involve at least one clinical champion in the evaluation process from day one. Run a structured pilot with a small group of willing clinicians before broader rollout, use their feedback to refine the workflow, and give them ownership of peer training. Adoption rates in clinical XR correlate strongly with whether staff feel the technology was chosen with them rather than deployed at them.

The same applies to content development. Every major healthcare organization has Subject Matter Experts (SMEs): doctors, clinical specialists, and device experts who should be embedded in the product team throughout the build, not brought in at the end for sign-off.

The SME's clinical eye catches levels of anatomical or procedural detail that are invisible to a generalist but immediately obvious to a practicing clinician. Medical animations are a specific example: showing an artery or a beating heart in a way that is clinically accurate requires understanding how it actually works. The content creation side of healthcare XR is as specialized as the engineering side, and treating it as standard 3D work is one of the most common ways quality breaks down.

Integration with Existing Clinical Systems

Healthcare IT environments are complex, regulated, and often legacy-constrained. An XR application that cannot connect to an EHR, authenticate through existing identity systems, or comply with data handling requirements creates friction that blocks adoption at scale. Custom XR development in healthcare requires genuine healthcare IT expertise alongside XR development expertise. Teams that have only built consumer or general enterprise XR applications consistently underestimate this layer.

Recommendation: Conduct a technical discovery session with your IT and clinical informatics teams before scoping any XR application. Identify the EHR vendor, available APIs (FHIR, SMART on FHIR, HL7), identity management system, and data classification requirements for any patient-linked data the XR application might capture. Addressing this at scoping prevents costly architectural rework after development has begun.
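Part of that discovery can be checked in code. A FHIR server describes itself at `[base]/metadata` through a `CapabilityStatement` resource that lists the resource types and interactions it supports. The sketch below, assuming a FHIR R4 JSON endpoint, fetches that statement and compares it with what a hypothetical XR application needs; the `needed` map and the endpoint URL are illustrative. SMART on FHIR authorization endpoints are advertised separately, at `[base]/.well-known/smart-configuration`, and are not covered here.

```python
import json
import urllib.request


def fetch_capability_statement(base_url: str, timeout: float = 10.0) -> dict:
    """Call the FHIR 'capabilities' interaction: GET [base]/metadata."""
    req = urllib.request.Request(
        base_url.rstrip("/") + "/metadata",
        headers={"Accept": "application/fhir+json"},
    )
    with urllib.request.urlopen(req, timeout=timeout) as resp:
        return json.load(resp)


def supported_interactions(cap: dict) -> dict[str, set[str]]:
    """Map each resource type to the interaction codes the server declares."""
    supported: dict[str, set[str]] = {}
    for rest in cap.get("rest", []):
        if rest.get("mode") != "server":
            continue
        for res in rest.get("resource", []):
            codes = {i["code"] for i in res.get("interaction", [])}
            supported.setdefault(res["type"], set()).update(codes)
    return supported


def missing_interactions(cap: dict, needed: dict[str, set[str]]) -> dict[str, set[str]]:
    """Return the interactions the XR app needs that the server does not declare."""
    supported = supported_interactions(cap)
    missing: dict[str, set[str]] = {}
    for rtype, wanted in needed.items():
        gap = wanted - supported.get(rtype, set())
        if gap:
            missing[rtype] = gap
    return missing


# Usage (hypothetical endpoint and requirements):
# cap = fetch_capability_statement("https://fhir.example.org/r4")
# needed = {"Patient": {"read"}, "ImagingStudy": {"search-type"}, "Observation": {"create"}}
# print(missing_interactions(cap, needed))
```

A non-empty result at scoping time is exactly the architectural surprise worth finding before development starts: it signals middleware, a vendor-specific API, or a narrower feature set.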

The Cost Question

High upfront hardware costs are frequently cited as a barrier, but the cost calculation is more nuanced than it appears. The right comparison is between VR training costs and the alternatives: cadaver lab time, clinical simulation center costs, and the cost of clinical errors made by undertrained practitioners. PwC's enterprise VR cost analysis found that VR training achieves cost parity with classroom training at 375 learners and with e-learning at 1,950 learners. When framed as a total cost analysis at scale, XR often performs well, but that analysis needs to happen explicitly before beginning the evaluation process.
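The parity figures above are break-even points. Under a simple linear cost model, assuming each modality has a one-time fixed cost F and a per-learner cost c (an illustrative assumption; PwC's underlying inputs are not reproduced here), the break-even cohort size N* follows from setting the two totals equal:

```latex
\begin{aligned}
C_{\mathrm{VR}}(N)  &= F_{\mathrm{VR}}  + c_{\mathrm{VR}}\,N \\
C_{\mathrm{alt}}(N) &= F_{\mathrm{alt}} + c_{\mathrm{alt}}\,N \\
C_{\mathrm{VR}}(N^{*}) = C_{\mathrm{alt}}(N^{*})
  \;&\Longrightarrow\;
  N^{*} = \frac{F_{\mathrm{VR}} - F_{\mathrm{alt}}}{c_{\mathrm{alt}} - c_{\mathrm{VR}}}
  \qquad (F_{\mathrm{VR}} > F_{\mathrm{alt}},\ c_{\mathrm{alt}} > c_{\mathrm{VR}})
\end{aligned}
```

Beyond N*, every additional learner makes VR cheaper in total. Both the gap in fixed costs and the gap in per-learner costs move N*, which is why the break-even against e-learning (1,950 learners) sits so far from the break-even against classroom training (375 learners).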

Recommendation: Model the full three-to-five-year total cost of ownership before presenting a business case: hardware purchase plus replacement cycle, software licensing, accessory replacement, staff time for fleet management, and any regulatory or validation costs. Compare this against the cost of the current training or treatment modality it replaces. The business case for XR is almost always stronger at scale than it appears in a single-year budget line.
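The cost lines in that recommendation map onto a simple undiscounted model. In this sketch the field names, the 20-headset fleet, the three-year refresh cycle, and every figure except the Quest 3 list price quoted in this guide are hypothetical; a real business case would add discounting and the cost of the modality being replaced.

```python
import math
from dataclasses import dataclass


@dataclass
class XRProgram:
    headsets: int
    headset_price: float
    refresh_every_years: int             # full fleet replacement cycle
    annual_software_license: float
    annual_accessories_per_headset: float
    annual_fleet_staff_cost: float       # staff time spent on fleet management
    one_time_validation_cost: float      # regulatory / clinical validation


def total_cost_of_ownership(p: XRProgram, years: int) -> float:
    """Undiscounted TCO: initial purchase plus refreshes, recurring costs, validation."""
    fleet_purchases = math.ceil(years / p.refresh_every_years)  # initial buy + refreshes
    hardware = fleet_purchases * p.headsets * p.headset_price
    recurring = years * (
        p.annual_software_license
        + p.headsets * p.annual_accessories_per_headset
        + p.annual_fleet_staff_cost
    )
    return hardware + recurring + p.one_time_validation_cost


# Hypothetical 20-headset training program on Quest 3 ($499) over five years.
program = XRProgram(20, 499, 3, 12_000, 60, 8_000, 0)
print(f"${total_cost_of_ownership(program, years=5):,.0f}")  # $125,960
```

Comparing that figure with the same horizon's spend on the current modality, for example `years * current_annual_cost`, gives the number the business case actually turns on.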

Data Privacy and HIPAA Compliance

Patient-facing VR applications collect biometric and behavioral data. Gaze tracking, movement patterns, and physiological responses during VR sessions are sensitive. Healthcare organizations deploying XR need to address data handling, patient consent, and compliance with HIPAA and equivalent regulations before go-live.

For custom XR applications, HIPAA compliance is a development-side requirement, not an afterthought. It affects data storage architecture, transmission encryption, access controls, audit logging, and patient consent workflows. Development studios without prior healthcare experience frequently underestimate this scope. Any studio building a patient-facing or clinically adjacent XR application should be able to document how PHI (Protected Health Information) is handled, where it is stored, and what Business Associate Agreement (BAA) obligations apply before a line of code is written.
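Audit logging is one of the items above that is easy to under-build. One common way to make an access log tamper-evident is to chain entries by hash, so that altering any earlier entry invalidates the chain. The sketch below shows the idea with Python's standard library; it is one illustrative approach, not a mechanism HIPAA prescribes, and the user IDs and resource strings are hypothetical. A production system would also ship entries to write-once storage, since anyone able to rewrite the whole chain could recompute every hash.

```python
import hashlib
import json
from datetime import datetime, timezone

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


class AuditLog:
    """Append-only, hash-chained access log (a tamper-evidence sketch)."""

    def __init__(self) -> None:
        self.entries: list[dict] = []

    def record(self, user_id: str, action: str, resource: str) -> dict:
        prev_hash = self.entries[-1]["hash"] if self.entries else GENESIS
        entry = {
            "ts": datetime.now(timezone.utc).isoformat(),
            "user": user_id,
            "action": action,      # e.g. "read", "export"
            "resource": resource,  # a session or record ID, never raw PHI
            "prev": prev_hash,
        }
        payload = json.dumps(entry, sort_keys=True).encode()
        entry["hash"] = hashlib.sha256(payload).hexdigest()
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute every hash; editing any earlier entry breaks the chain."""
        prev = GENESIS
        for e in self.entries:
            body = {k: v for k, v in e.items() if k != "hash"}
            if body["prev"] != prev:
                return False
            digest = hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()
            if digest != e["hash"]:
                return False
            prev = e["hash"]
        return True


log = AuditLog()
log.record("clinician-17", "read", "session/42")
log.record("clinician-17", "export", "session/42")
print(log.verify())  # True
```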

Recommendation: Determine whether your application will handle PHI at all. Training applications using synthetic patient data or anonymous scenarios typically do not trigger HIPAA requirements. Applications that link session data to identifiable patients do. Classifying PHI exposure correctly at the start determines infrastructure choices, vendor requirements, and development timeline.

For organizations outside the United States, equivalent frameworks apply: GDPR in the European Union places strict requirements on the processing of health data as a special category of personal data, with data protection impact assessments (DPIAs) required for high-risk processing. Japan's Act on the Protection of Personal Information (APPI) and Singapore's Personal Data Protection Act (PDPA) impose similar obligations. The compliance layer is different in each jurisdiction, but the principle is the same: data classification at the architecture stage determines everything downstream.
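A first-pass triage of these questions can be written down as code, which makes the assumptions explicit for legal review. This is a deliberate simplification: the jurisdiction-to-framework mapping covers only the laws named in this section, treating all patient-linked XR data in the EU as needing a DPIA is an assumption, and whether HIPAA applies also depends on whether the organization is a covered entity or business associate. The field names are hypothetical.

```python
from dataclasses import dataclass

# Frameworks named in this guide, keyed by jurisdiction (illustrative, not exhaustive).
FRAMEWORKS = {"US": "HIPAA", "EU": "GDPR", "JP": "APPI", "SG": "PDPA"}


@dataclass
class DataFlow:
    jurisdiction: str
    linked_to_identifiable_patient: bool  # False for synthetic or anonymous scenarios
    captures_biometrics: bool             # gaze, movement, physiological signals


def classify(flow: DataFlow) -> dict:
    """First-pass triage to hand to legal review; not a legal determination."""
    if not flow.linked_to_identifiable_patient:
        return {"framework": None, "dpia": False, "review_biometrics": False}
    return {
        "framework": FRAMEWORKS.get(flow.jurisdiction, "local health-data law"),
        # Assumption: patient-linked XR health data counts as high-risk
        # processing under GDPR, so a DPIA is planned by default.
        "dpia": flow.jurisdiction == "EU",
        "review_biometrics": flow.captures_biometrics,
    }


print(classify(DataFlow("EU", linked_to_identifiable_patient=True, captures_biometrics=True)))
# {'framework': 'GDPR', 'dpia': True, 'review_biometrics': True}
```

Running every planned data flow through a check like this at the architecture stage is a cheap way to surface which components need PHI-grade storage, encryption, and vendor agreements.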

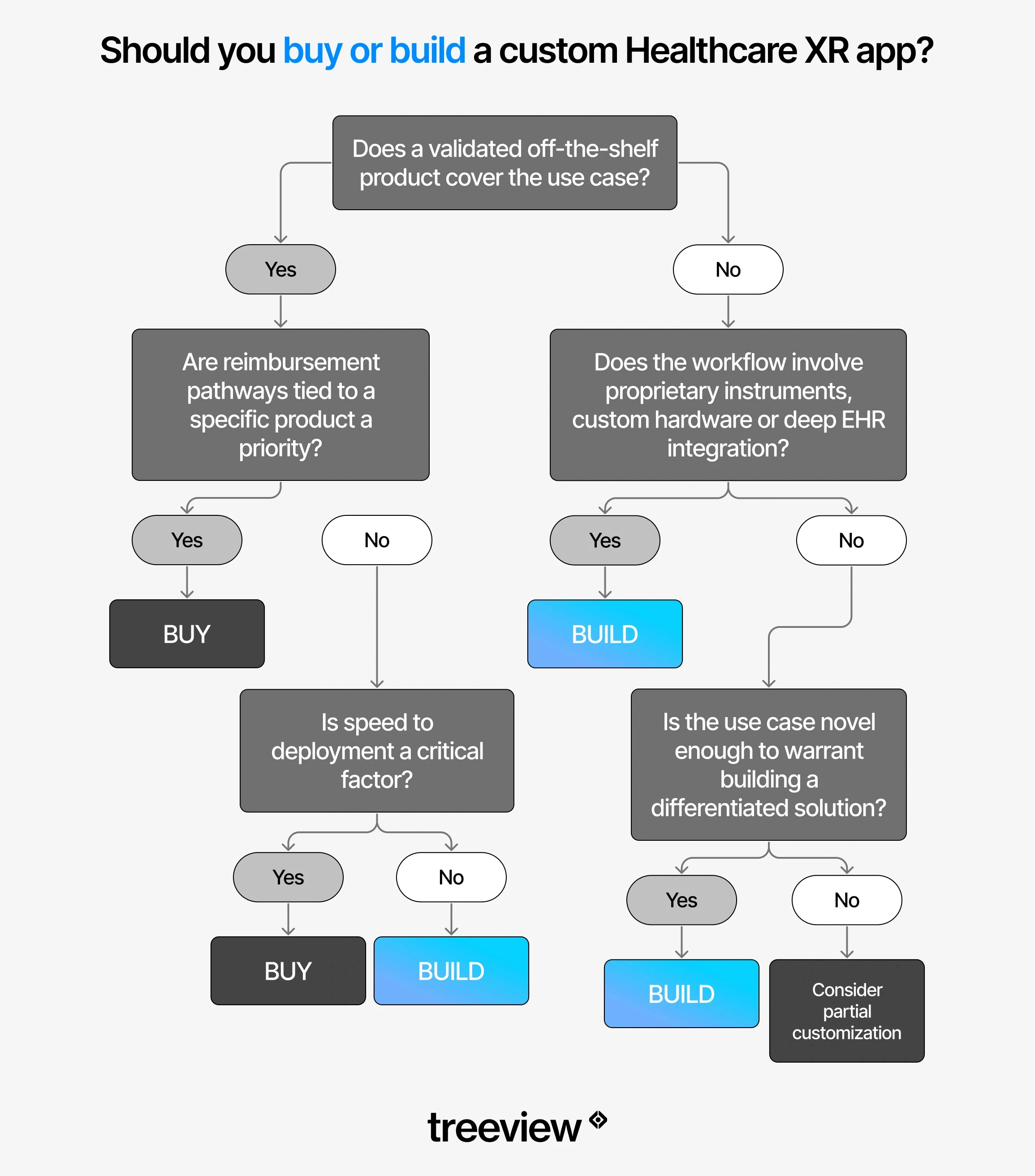

Build vs. Buy: Custom XR Development vs. Off-the-Shelf Healthcare XR

The build vs. buy decision comes down to whether a validated off-the-shelf product covers the use case. If the workflow involves proprietary protocols, custom hardware, or deep EHR integration, custom development is usually the right path.

When off-the-shelf makes sense

The use case is covered by a validated, FDA-cleared product

Speed to deployment matters and internal XR development capacity is limited

The application involves a standard clinical task (basic anatomy training, phobia therapy, pain distraction) where a generic solution is clinically sufficient

Reimbursement pathways tied to a specific product are a priority

When custom development makes sense

The workflow involves proprietary instruments, devices, or clinical protocols

The application requires integration with specific clinical systems or data sources

The use case is novel enough that no existing product covers it adequately

The organization needs to own the IP for long-term development and expansion

The scale of deployment justifies the development investment
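The two lists can be condensed into a rough triage function for early scoping conversations. It is a sketch under stated assumptions: any single custom-development signal is treated as enough to consider building, the scale criterion acts as the gate, and softer factors such as speed to deployment and reimbursement pathways are left to human judgment. The field names and the "revisit scope" outcome are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class UseCase:
    covered_by_validated_product: bool  # e.g. an FDA-cleared product fits as-is
    proprietary_workflow: bool          # own instruments, devices or protocols
    needs_system_integration: bool      # specific clinical systems or data sources
    needs_ip_ownership: bool
    scale_justifies_build: bool


def build_or_buy(u: UseCase) -> str:
    """Apply the criteria above; the 'revisit scope' branch is an assumption."""
    needs_custom = (
        not u.covered_by_validated_product
        or u.proprietary_workflow
        or u.needs_system_integration
        or u.needs_ip_ownership
    )
    if not needs_custom:
        return "buy"
    if u.scale_justifies_build:
        return "build"
    # Custom needs, but scale too small to justify it: narrow the scope or
    # re-check whether an off-the-shelf product is close enough.
    return "revisit scope"


# Standard pain-distraction use case with a cleared product available.
print(build_or_buy(UseCase(True, False, False, False, False)))  # buy
```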

When evaluating a development partner for healthcare XR, the questions that matter most are practical: how many healthcare clients have they worked with, what types of organizations, can you see the visual quality and clinical accuracy of past work, and do they have direct experience with the regulatory and compliance layer. Generic XR development experience does not transfer cleanly to healthcare. The clinical accuracy requirements, the SME collaboration process, the data handling requirements and the stakes are categorically different.

One technical decision that has an outsized impact on scalability is the choice of development engine. Unity's cross-platform architecture allows a single healthcare XR application to be deployed across standalone headsets and spatial computers (Meta Quest, PICO, Samsung Galaxy XR, Apple Vision Pro), tethered headsets (PlayStation VR), augmented and mixed reality devices (HoloLens, Magic Leap), mobile (iOS and Android), desktop (Windows, macOS, Linux), and web (WebGL) from one shared codebase.

In practice, this means a clinical training application built once can run in a simulation center on a high-fidelity tethered headset, on a standalone Quest in a ward, on a tablet during a bedside consultation and in a browser for remote learners, without maintaining a separate codebase for each context (each target platform still gets its own build). For healthcare organizations that need their XR applications to travel across departments, institutions, and patient touchpoints, this kind of platform reach matters from the first architecture decision.

The Future of XR in Healthcare

AI integration, lighter hardware, and expanding insurance reimbursement are the three forces shaping the near-term trajectory of XR in healthcare.

AI and XR are increasingly inseparable

Real-time AI is beginning to power adaptive surgical guidance, personalized rehabilitation programs that adjust to patient performance in real time, and clinical decision support overlaid directly into a surgeon's field of view. Spatial context tells AI where things are in three-dimensional space. Clinical AI tells the system what that means. Together, they produce capabilities that neither achieves independently.

Spatial computing development is moving faster in this direction than any other area of the field.

AI avatars inside XR environments are an early expression of this convergence. Customizable virtual avatars can guide patients through their conditions, treatment expectations, and therapy exercises in real time inside a VR session, so clinicians can offload repetitive education tasks and focus on direct care. The avatar's appearance and behavior can be tailored to the patient's needs and condition, from anxiety and PTSD to physical rehabilitation, and it can answer questions without requiring clinician presence.

Telehealth, remote monitoring and HMDs

Head-mounted displays (HMDs) are beginning to extend telehealth beyond video calls into spatial environments where clinicians and patients share a virtual space. Rather than a patient describing symptoms over a screen, a clinician can observe a patient performing a physical rehabilitation exercise in a shared virtual room, assess movement quality in real time and adjust the session remotely. This closes the gap between in-person clinical assessment and remote care in ways that flat video cannot.

For remote patient monitoring, XR headsets are beginning to function as persistent sensing platforms. Gaze tracking, movement patterns, reaction times and physiological responses recorded during VR therapy sessions generate continuous, longitudinal data on patient state that supplements periodic clinical assessments. A patient doing VR stroke rehabilitation at home three times a week is generating a richer motor recovery dataset than a patient seen in a clinic once a month.

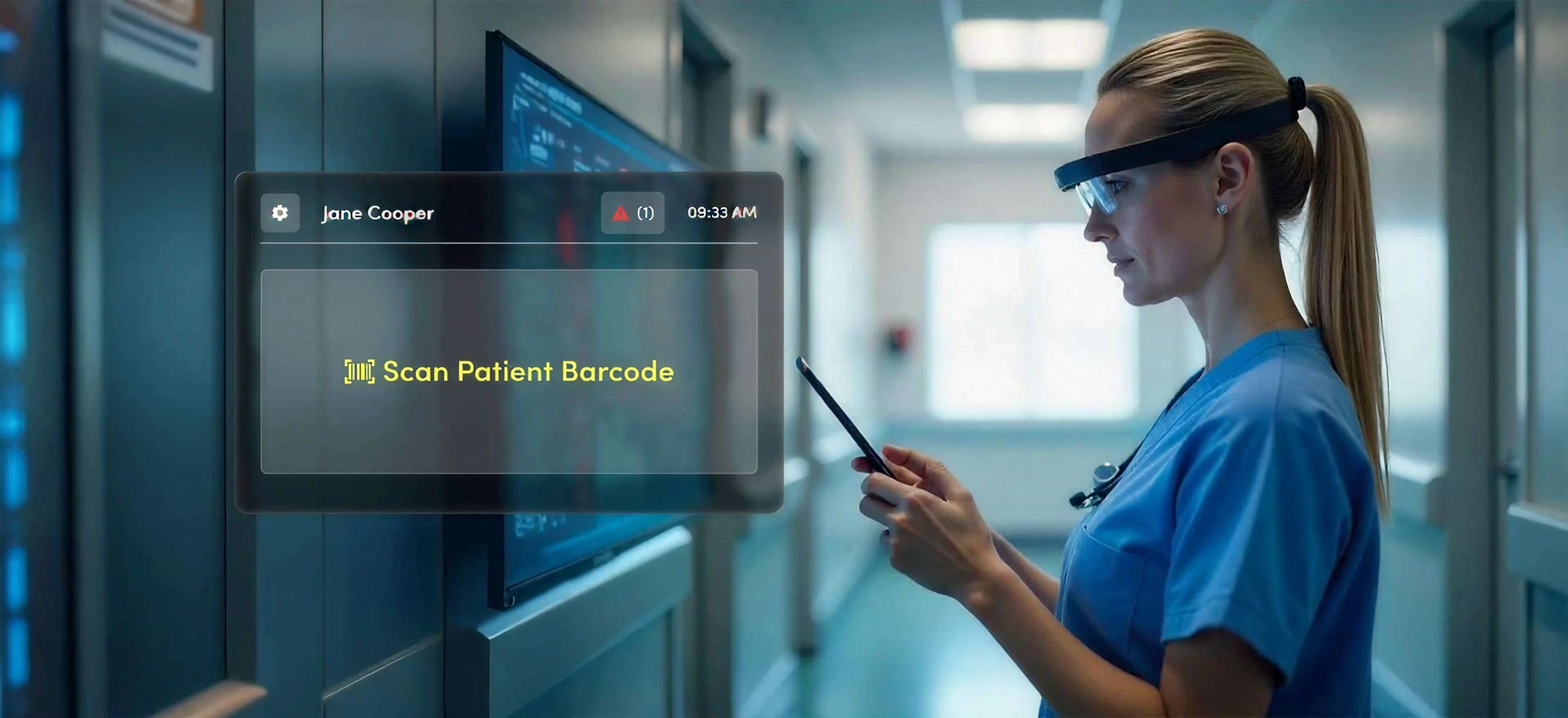

Smart glasses add a third layer. Where headsets are session-based, smart glasses are ambient. A clinician wearing AI-integrated smart glasses on a ward round can access patient data hands-free, receive AI-assisted prompts based on what the camera sees, and capture observations without breaking clinical focus.

Samsung Galaxy XR's Gemini AI integration points toward the same direction at the headset level: a spatial computing device that understands what the clinician is looking at and responds with contextually relevant information in real time. As hardware gets lighter and AI models get faster, the distinction between clinical tool and wearable assistant will narrow.

Hardware is getting lighter and better

The trend is toward thinner, longer-lasting headsets and toward smart glasses that can be worn in clinical environments all day without fatigue. The overall smart glasses category surged 247.5% in 2025, and the category is moving fast toward clinical-grade form factors. As hardware recedes into the background, the use cases that require continuous wear become practical.

Smart glasses are already in active clinical use today, not just in pilots. In 2025, a landmark study in Surgical Innovation formally validated the use of Ray-Ban Meta smart glasses in a sterile surgical workflow at Adventist Health White Memorial Hospital and USC for limb preservation surgery. It was the first peer-reviewed validation of consumer-grade smart glasses integrated into a sterile operating room. The glasses provided hands-free high-definition recording and real-time tele-mentoring via voice commands. Clinical data shows a 29% reduction in procedure times across AI-augmented smart glasses deployments, and a leading US hospital network reported a 32% reduction in surgical errors in 2026 through AI-powered glasses during complex procedures.

Beyond the operating room, smart glasses are being used for:

Hands-free documentation during ward rounds

Direct EHR capture from point of care

Telemedicine consultations where the clinician's hands need to stay free

Remote specialist guidance in emergency settings

The devices capture what the clinician sees, stream it to a remote expert, and receive voice-guided instructions in real time, without requiring the clinician to look away from the patient.

Privacy governance is an emerging challenge. Smart glasses sit at eye level and can capture audio and video less conspicuously than a handheld device, raising patient consent and HIPAA compliance questions that many healthcare organizations have not yet addressed in their device policies. As healthcare privacy experts have flagged, any clinical deployment of smart glasses requires explicit consent protocols, clear data retention policies, and updated device governance frameworks before staff carry them into patient-facing environments.

Reimbursement will expand

The AMA CPT code for virtual reality therapy and the FDA Medical Extended Reality program are early signals of a regulatory and reimbursement environment beginning to accommodate XR therapeutics at scale. As the evidence base grows, more applications will acquire reimbursement pathways. Organizations that build clinical validation processes into their deployments now will be better positioned to access those pathways as they open.

The convergence of XR, AI and digital twins is accelerating in surgical and hospital operations contexts. Patient-specific human digital twins derived from imaging data, rendered and manipulated in real time through MR interfaces, represent the next generation of surgical planning tools.

Conclusion

XR in healthcare is not a trend waiting to mature. For surgical training, virtual reality pain management, neurological rehabilitation, virtual reality therapy for anxiety and PTSD, and AR-guided procedures, the evidence is already strong and deployment is underway at scale in major clinical institutions globally.

The organizations getting real value from XR right now started with a specific, high-value problem rather than a broad mandate to explore immersive technology. They involved clinical staff in evaluation and selection. They treated implementation as an ongoing program rather than a one-time project. And they built on hardware platforms that can actually be maintained and scaled inside a clinical environment.

The technology will keep improving. The hardware will get lighter. The reimbursement pathways will get clearer. What does not change is the underlying requirement: the right solution for the right clinical problem, deployed with the operational rigor that healthcare demands.

Frequently Asked Questions (FAQs) about AR, VR, MR and XR in Healthcare

Q1. What is virtual reality used for in healthcare?

Virtual reality in healthcare is used for:

Surgical training and simulation

Patient therapy and rehabilitation

Pain management

Mental health treatment

Medical education

Patient education and informed consent

Drug discovery visualization

Robotic surgery training

The most clinically validated applications are VR surgical training, virtual reality pain management, virtual reality stroke rehabilitation, and virtual reality therapy for anxiety and PTSD.

Q2. Does VR actually work for pain management?

Yes. Virtual reality pain management is one of the most consistently validated applications in clinical research. Immersive VR environments reduce perceived pain by redirecting attention away from painful stimuli. Evidence supports its use in burn wound care, chemotherapy infusions, chronic lower back pain, and procedural pain in pediatric patients. The American Medical Association approved the first CPT billing code specifically for virtual reality therapy in 2022, and RelieVRx became the first FDA-authorized in-home VR treatment for chronic lower back pain and the first immersive therapeutic to receive CMS valuation.

Q3. How much does XR development cost for healthcare?

Custom healthcare XR development costs vary significantly depending on platform, regulatory requirements, and integration complexity. A standalone Meta Quest 3S training simulation for a specific clinical procedure typically ranges from $80,000 to $250,000 for an initial build, depending on content depth and interactivity. The Quest 3S at $299 and Quest 3 at $499 make hardware costs for fleet deployment more manageable than at any previous point.

Applications requiring FDA classification, EHR integration, or multi-platform deployment cost more. Off-the-shelf platforms like Touch Surgery or Transfr Health Science have subscription-based pricing that is substantially lower for standard use cases. The right comparison is total cost of ownership: development cost plus hardware, deployment, training, maintenance, and regulatory overhead over three to five years.

Q4. What VR headset is best for a hospital?

For most clinical training deployments, Meta Quest 3 ($499) or Quest 3S ($299) is the best choice: standalone operation with no PC required, the widest software ecosystem, and pricing accessible enough to make fleet deployment practical.

For intraoperative AR guidance and hands-free clinical workflows, HoloLens 2 remains the validated enterprise standard, though it has been discontinued for new orders. Apple Vision Pro ($3,499) is the best option for precision imaging review and spatial radiology, with 75% enterprise buyer share and 50+ Fortune 100 organizations already using it. Samsung Galaxy XR ($1,799) is the first viable third platform option with deep Google AI integration for AI-assisted clinical workflows. For safety-critical sterile environments where optical see-through matters, Magic Leap 2 ($3,299) is designed specifically for that constraint.

Q5. Is VR safe for elderly patients?

VR is generally safe for elderly patients when properly screened and supervised, but contraindications are real. Cybersickness affects a higher proportion of older users due to vestibular changes and reduced sensory adaptation. Patients with certain neurological or vestibular conditions, active seizure disorders, or severe cognitive impairment should be screened before VR exposure. Short session lengths, typically 5 to 15 minutes, lower visual intensity settings, and a seated position reduce adverse event risk. Published studies in stroke rehabilitation and palliative care have reported high patient acceptance rates in elderly populations with appropriate protocols in place.

Q6. Does VR healthcare require FDA clearance?

It depends on the clinical claim. The FDA classifies certain VR and AR healthcare applications as Software as a Medical Device (SaMD). Products that make clinical claims, such as that a VR application reduces pain scores, improves diagnostic accuracy, or assists surgical navigation, require FDA clearance or approval. Training simulators, anatomy education tools, and patient education applications that do not make clinical efficacy claims generally do not require FDA clearance. Organizations building custom applications should determine the intended use and regulatory classification before beginning development.

Q7. Is VR therapy covered by insurance?

In the United States, insurance coverage for virtual reality therapy is limited but expanding. The AMA approved the first CPT code specifically for VR therapy in 2022, and CMS has begun valuing specific VR therapeutic applications including RelieVRx, now FDA-authorized for chronic lower back pain. Most VR and AR uses in clinical settings are not yet covered by standard insurance plans, which means costs are typically absorbed by the hospital, training program, or patient. Coverage is most likely for FDA-cleared products with established CPT codes and documented clinical evidence.

Q8. What is the difference between VR, AR, MR and smart glasses in healthcare?

VR (Virtual Reality) replaces the user's physical environment entirely with a digital simulation. The user sees only the virtual world. It is used for surgical training, exposure therapy, pain distraction, and patient education where full immersion is the clinical goal.

AR (Augmented Reality) keeps the real world in view and overlays digital information on top of it. A surgeon wearing AR glasses sees both the patient and a holographic layer showing vessel locations or navigation cues. AR is used for intraoperative guidance, surgical planning, and medical device visualization where the practitioner cannot be removed from the physical environment.

MR (Mixed Reality) takes AR further, allowing virtual objects to interact with the physical environment in real time.

Smart glasses are a fourth category: AI-enabled, camera-equipped glasses designed for all-day wear with no full display overlay. They are used for hands-free documentation, ward round support, telemedicine, and remote specialist guidance, and are now being validated in sterile surgical environments.

Q9. Can VR be used for mental health treatment?

Yes. Virtual reality therapy for anxiety, PTSD, phobias, and social anxiety is one of the best-evidenced XR healthcare applications. VR-based exposure therapy allows patients to confront feared stimuli in a controlled, graded environment without having to enter the real triggering situation. A veteran with PTSD does not need to physically return to a conflict zone. A patient with a fear of heights does not need to stand on a rooftop.

The therapist controls the intensity and pacing of exposure throughout the session. VR-delivered exposure builds on decades of randomized controlled trial (RCT) evidence for exposure therapy, and multiple meta-analyses report significant effect sizes for VR-based treatment of anxiety, phobias, and PTSD.

Q10. How does VR help stroke rehabilitation?

Virtual reality rehabilitation for stroke recovery works by embedding repetitive motor exercises in interactive virtual environments that improve patient engagement and compliance. A 2024 systematic review of 55 RCTs covering 2,142 stroke patients found that VR conferred statistically significant benefits over conventional therapy in upper limb motor function, functional independence, quality of life, and dexterity.

A 2025 network meta-analysis in Frontiers in Neurology compared immersion levels across 16 RCTs and found that fully immersive VR produced the greatest gains in gross motor function, while non-immersive approaches were stronger for fine dexterity. The gamified nature of VR rehabilitation matters: stroke patients who find traditional repetitive exercise demotivating sustain higher engagement when the same movements are embedded in an interactive virtual environment.

Q11. What does XR integration with EHR systems look like?

XR-EHR integration typically involves two directions of data flow. Patient-specific data, including imaging, vitals, and medication history, is pulled into the XR application. Session data, such as training completion, rehabilitation metrics, and patient interaction logs, is written back to the EHR. Common integration points include FHIR APIs for patient data access, SMART on FHIR for authorization and app launch, and HL7 v2 messaging for event-based data exchange. In practice, integration complexity depends heavily on the EHR vendor. Epic and Oracle Health (formerly Cerner) run developer programs that support external application integration, while legacy systems may require middleware. Custom healthcare XR development teams need to assess EHR integration requirements before the architecture is decided.
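
As a rough illustration of the write-back direction, the sketch below packages one rehabilitation session metric as a FHIR R4 `Observation` resource. It also builds the RESTful read URL for a `Patient`. It uses only the Python standard library and makes no network calls. The base URL, the patient ID, and the code system `https://example.org/xr-metrics` with code `reach-range` are hypothetical placeholders. A real integration would use the codes, profiles, and endpoints agreed with the EHR vendor, and would send requests with a SMART on FHIR access token.

```python
import json
from datetime import datetime, timezone

# Hypothetical FHIR server base URL; a real one comes from the EHR vendor.
FHIR_BASE = "https://fhir.example.org/r4"


def patient_read_url(patient_id: str) -> str:
    """Build the FHIR RESTful 'read' URL for a Patient resource."""
    return f"{FHIR_BASE}/Patient/{patient_id}"


def session_observation(patient_id: str, metric_code: str, value: float,
                        unit: str, when: datetime) -> dict:
    """Package one XR rehabilitation metric as a FHIR R4 Observation."""
    return {
        "resourceType": "Observation",
        "status": "final",
        "code": {
            "coding": [{
                # Hypothetical local code system for XR session metrics.
                "system": "https://example.org/xr-metrics",
                "code": metric_code,
            }]
        },
        "subject": {"reference": f"Patient/{patient_id}"},
        "effectiveDateTime": when.isoformat(),
        "valueQuantity": {
            "value": value,
            "unit": unit,
            # UCUM is the unit system FHIR uses for coded quantities.
            "system": "http://unitsofmeasure.org",
            "code": unit,
        },
    }


if __name__ == "__main__":
    obs = session_observation(
        patient_id="example-123",
        metric_code="reach-range",
        value=42.5,
        unit="cm",
        when=datetime(2025, 3, 1, 14, 30, tzinfo=timezone.utc),
    )
    print(patient_read_url("example-123"))
    # The JSON body would be POSTed to f"{FHIR_BASE}/Observation".
    print(json.dumps(obs, indent=2))
```

Keeping the resource as a plain dictionary makes the payload easy to inspect and validate against the vendor's FHIR profile. Only after that check would the HTTP client and authorization layer be added.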

Q12. How do you handle HIPAA compliance in a VR healthcare application?

HIPAA compliance in VR healthcare applications requires addressing data storage architecture, transmission encryption, access controls, audit logging, and patient consent workflows. Any application that collects, stores, or transmits Protected Health Information (PHI), including gaze tracking, behavioral patterns, or biometric data linked to an identifiable patient, must be built under a Business Associate Agreement (BAA) with applicable vendors and hosted on HIPAA-compliant infrastructure.
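
The HIPAA Security Rule requires audit controls (45 CFR 164.312(b)) but does not prescribe a log format. One common way to make an audit trail tamper-evident is hash chaining, where each entry stores a hash of the previous entry. The sketch below is a minimal in-memory illustration of that idea. The field names (`actor`, `action`, `resource`) are hypothetical. A production system would persist entries to write-once storage and restrict who can read and write the log itself.

```python
import hashlib
import json
from datetime import datetime, timezone

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


class AuditLog:
    """Append-only, hash-chained audit trail (in-memory sketch)."""

    def __init__(self) -> None:
        self.entries: list[dict] = []

    @staticmethod
    def _digest(entry: dict) -> str:
        # Canonical JSON (sorted keys) so the same entry always hashes the same way.
        payload = json.dumps(entry, sort_keys=True).encode("utf-8")
        return hashlib.sha256(payload).hexdigest()

    def append(self, actor: str, action: str, resource: str) -> dict:
        """Record one access event, linked to the hash of the previous event."""
        prev = self.entries[-1]["hash"] if self.entries else GENESIS
        entry = {
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "actor": actor,        # who accessed the data (hypothetical field)
            "action": action,      # e.g. "read", "export" (hypothetical field)
            "resource": resource,  # what was accessed (hypothetical field)
            "prev_hash": prev,
        }
        entry["hash"] = self._digest(entry)
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute every hash; an edited or reordered entry breaks the chain."""
        prev = GENESIS
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if body["prev_hash"] != prev or self._digest(body) != entry["hash"]:
                return False
            prev = entry["hash"]
        return True


if __name__ == "__main__":
    log = AuditLog()
    log.append("clinician-17", "read", "Session/abc")
    log.append("analyst-02", "export", "Session/abc")
    print(log.verify())  # True
    log.entries[0]["actor"] = "someone-else"
    print(log.verify())  # False
```

Hash chaining makes tampering detectable, not impossible. Anyone able to rewrite the whole chain can recompute every hash, which is why the storage and access controls around the log still matter.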

Development studios that have not previously built healthcare applications frequently underestimate this compliance layer. It affects vendor selection, cloud storage choices, session recording policies, and how data is de-identified for analytics.
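
For the analytics side, one common approach is keyed pseudonymization. The patient identifier is replaced with an HMAC, so records from the same patient can still be linked, and direct identifiers are dropped before data leaves the clinical system. The sketch below assumes a hypothetical session record schema and a secret key held outside the analytics environment.

Pseudonymized data is not automatically de-identified under HIPAA. The Safe Harbor and Expert Determination methods set that bar. Quasi-identifiers such as dates, or gaze and movement patterns, may still need separate treatment.

```python
import hashlib
import hmac

# Direct identifiers in this hypothetical session schema that must not reach analytics.
DIRECT_IDENTIFIERS = {"patient_name", "mrn", "date_of_birth", "email"}


def pseudonym(patient_id: str, key: bytes) -> str:
    """Stable keyed pseudonym: same patient + same key -> same token."""
    return hmac.new(key, patient_id.encode("utf-8"), hashlib.sha256).hexdigest()


def prepare_for_analytics(record: dict, key: bytes) -> dict:
    """Drop direct identifiers and replace the MRN with a keyed pseudonym."""
    cleaned = {k: v for k, v in record.items() if k not in DIRECT_IDENTIFIERS}
    cleaned["subject_token"] = pseudonym(record["mrn"], key)
    return cleaned


if __name__ == "__main__":
    key = b"load-from-a-secrets-manager"  # hypothetical; never hard-code in practice
    record = {
        "mrn": "000123",
        "patient_name": "Jane Doe",
        "session_minutes": 18,
        "avg_reach_cm": 41.2,
    }
    print(prepare_for_analytics(record, key))
```

Using an HMAC rather than a plain hash matters. Without the key, an attacker cannot rebuild the mapping by hashing a list of known record numbers.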

Q13. What is a digital twin in healthcare?

A digital twin in healthcare is a persistent, data-connected virtual model of a patient, organ, facility, or clinical system that updates in real time as its physical counterpart changes. Patient-specific digital twins derived from CT and MRI data allow surgeons to rehearse a procedure on an accurate 3D model of that specific patient's anatomy before the first incision. Hospital digital twins allow administrators to simulate patient flow and emergency scenarios. In clinical trials, digital twins of patients enable continuous physiological simulation to support drug dosage decisions and early detection of deterioration.
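
In software terms, the defining property is the data connection: the twin holds state that is updated from its physical counterpart and can be queried. The minimal sketch below models only that "updates as its counterpart changes" part for a patient. It stores the latest timestamped reading per vital sign, ignores late-arriving older readings, and flags values outside configured bounds. The class name, vital-sign keys, and bounds are hypothetical and illustrative, not clinical thresholds. Real patient twins add physiological models, imaging-derived anatomy, and validated early-warning logic.

```python
from dataclasses import dataclass, field
from datetime import datetime


@dataclass
class PatientTwin:
    """Minimal data-connected patient model (illustrative only)."""
    patient_id: str
    # Hypothetical (low, high) bounds per vital sign; NOT clinical thresholds.
    bounds: dict[str, tuple[float, float]]
    # Latest (value, timestamp) per vital sign.
    state: dict[str, tuple[float, datetime]] = field(default_factory=dict)

    def update(self, vital: str, value: float, at: datetime) -> None:
        """Apply a reading from the physical counterpart, ignoring stale data."""
        current = self.state.get(vital)
        if current is None or at >= current[1]:
            self.state[vital] = (value, at)

    def out_of_bounds(self) -> list[str]:
        """Return the vitals whose latest reading falls outside its bounds."""
        flagged = []
        for vital, (value, _) in self.state.items():
            low, high = self.bounds.get(vital, (float("-inf"), float("inf")))
            if not low <= value <= high:
                flagged.append(vital)
        return flagged


if __name__ == "__main__":
    twin = PatientTwin("example-123", bounds={"heart_rate": (50, 110)})
    twin.update("heart_rate", 88, datetime(2025, 3, 1, 9, 0))
    twin.update("heart_rate", 124, datetime(2025, 3, 1, 9, 5))
    twin.update("heart_rate", 90, datetime(2025, 3, 1, 8, 55))  # older reading, ignored
    print(twin.out_of_bounds())  # ['heart_rate']
```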

Q14. How long does it take to build a custom VR healthcare application?

A focused custom VR training simulation for a specific clinical procedure on a single platform such as Meta Quest typically takes 3 to 6 months from scoping to launch. More complex applications typically take 9 to 18 months. Examples include multi-platform builds with EHR integration, clinical validation requirements, or FDA regulatory review. The regulatory classification of the intended use is the single biggest variable. An application that must go through FDA review as Software as a Medical Device (SaMD) requires clinical validation and a regulatory submission. Both add significant time and cost compared with a training or education tool that makes no clinical claims.

Q15. Which industries use XR in healthcare most?

The heaviest XR adoption in healthcare comes from four segments:

Medical device companies using AR for device visualization and surgical training

Hospital systems and academic medical centers using VR surgical training and simulation

Pharmaceutical and life sciences companies using VR for drug discovery and molecular visualization, and AR for manufacturing quality control

Rehabilitation and mental health providers using VR therapy

Medical device companies were early movers because device-specific training was an immediate commercial use case with clear ROI. Hospital systems followed as standalone headset costs dropped and software libraries expanded. Pharma adoption spans molecular visualization, clinical trial simulation, and AR-assisted manufacturing compliance.

Q16. What are the biggest challenges to adopting XR in healthcare?

The most common challenges are:

Headset fleet management and hygiene overhead in clinical environments

Lack of clinical staff buy-in when technology is selected without their involvement

Poor integration with existing EHR and clinical IT systems

Inadequate cost modeling that ignores total cost of ownership including maintenance, replacement accessories, and staff time

Cybersickness and contraindication screening gaps in patient-facing applications

Absence of reimbursement pathways for most XR applications outside a narrow set of FDA-cleared products

Underestimating clinical accuracy requirements for content, particularly medical animations and device visualizations that require subject matter expert collaboration throughout development

For smart glasses specifically, privacy governance gaps around patient consent, audio and video capture, and HIPAA compliance that most healthcare device policies have not yet addressed
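
The cost-modeling point is easiest to see with arithmetic. The sketch below totals a headset program's cost over several years. It separates the up-front hardware spend that budgets usually capture from the recurring items that the list above flags as commonly missed. The cost categories are a simplified, hypothetical breakdown, and every figure in the usage example is a made-up placeholder, not a market price.

```python
from dataclasses import dataclass


@dataclass
class XRProgramCosts:
    """Hypothetical cost inputs for a headset fleet; all values illustrative."""
    headsets: int
    headset_price: float                      # per device, up front
    software_license_per_year: float
    accessories_per_headset_per_year: float   # face covers, straps, controllers
    maintenance_per_year: float               # device management, repairs
    staff_hours_per_year: float               # setup, hygiene, training, support
    staff_hourly_rate: float
    replacement_cycle_years: int              # fleet replaced after this many years


def total_cost_of_ownership(c: XRProgramCosts, years: int) -> dict:
    """Split total cost of ownership into hardware and recurring costs over `years`."""
    # Initial purchase plus any full fleet refresh that starts within the horizon.
    refreshes = (years - 1) // c.replacement_cycle_years
    hardware = c.headsets * c.headset_price * (1 + refreshes)
    recurring_per_year = (
        c.software_license_per_year
        + c.headsets * c.accessories_per_headset_per_year
        + c.maintenance_per_year
        + c.staff_hours_per_year * c.staff_hourly_rate
    )
    recurring = recurring_per_year * years
    return {"hardware": hardware, "recurring": recurring,
            "total": hardware + recurring}


if __name__ == "__main__":
    costs = XRProgramCosts(
        headsets=10, headset_price=500.0, software_license_per_year=6000.0,
        accessories_per_headset_per_year=60.0, maintenance_per_year=2000.0,
        staff_hours_per_year=200.0, staff_hourly_rate=50.0,
        replacement_cycle_years=3,
    )
    print(total_cost_of_ownership(costs, years=3))
    # {'hardware': 5000.0, 'recurring': 55800.0, 'total': 60800.0}
```

In this made-up example the headsets account for roughly 8% of the three-year total. With real quotes the split will differ, but the recurring lines are the ones a purchase-price budget leaves out.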